How can a language model grow its capacity while keeping the computational cost per token nearly constant? Sparse large-scale models offer one answer. The Ling 2.0 series is built on the design philosophy that every activated unit should contribute directly to reasoning performance. This approach scales from tens of billions to a trillion parameters without a proportional increase in the compute required for each token processed. The series includes three variants: a 16-billion-parameter model that activates 1.4 billion parameters per token, a 100-billion-parameter model with 6.1 billion active parameters, and a flagship trillion-parameter model that activates approximately 50 billion parameters per token.

Core Architecture: Sparse Mixture of Experts (MoE)

At the heart of Ling 2.0 lies a consistent sparse Mixture of Experts (MoE) framework. Each transformer layer contains 256 specialized routed experts alongside a single shared expert. For every token, the routing mechanism selects 8 routed experts, while the shared expert is always engaged, so roughly 9 of 257 experts are active (about 3.5%). Counting routed experts only, 8 of 256 gives the 1/32 activation ratio (about 3.1%). This sparse activation strategy yields approximately sevenfold efficiency compared to dense models of equivalent size, as only a fraction of the network is computed per token, yet the model benefits from a vast parameter space.
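
The selection step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the Ling implementation: the expert counts come from the article, the sigmoid scoring is the one mentioned in the architecture notes, and the random router logits, the `route_token` helper, and the normalization of gate weights are assumptions made for this example.

```python
import math
import random

NUM_ROUTED = 256  # routed experts per layer (from the article)
TOP_K = 8         # routed experts selected per token (from the article)
NUM_SHARED = 1    # shared expert, always active (from the article)


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def route_token(logits: list[float], top_k: int = TOP_K) -> dict[int, float]:
    """Pick the top_k routed experts for one token and return their gate weights.

    Scaling the selected scores so they sum to 1 is an assumption of this sketch.
    """
    scores = [sigmoid(z) for z in logits]
    chosen = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:top_k]
    total = sum(scores[i] for i in chosen)
    return {i: scores[i] / total for i in chosen}


rng = random.Random(0)
# Stand-in for the router's per-expert logits for a single token (hypothetical values).
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_ROUTED)]
gates = route_token(logits)

routed_ratio = TOP_K / NUM_ROUTED                               # 8 / 256 = 1/32
with_shared = (TOP_K + NUM_SHARED) / (NUM_ROUTED + NUM_SHARED)  # 9 / 257

print(f"routed experts chosen: {len(gates)}")
print(f"routed activation ratio: {routed_ratio:.4%}")
print(f"including shared expert: {with_shared:.4%}")
```

The shared expert does not appear in `gates` because it is never routed: its output would simply be added for every token.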

Innovations Across the Stack

Architectural Design: The Ling team employs what they term the “Ling Wind Tunnel,” a controlled set of small-scale MoE experiments trained under uniform data and routing protocols. Results from these experiments are extrapolated with power laws to predict metrics such as loss, activation patterns, and expert utilization at larger scales. This methodology enables cost-effective optimization of routing and model shape. The routing mechanism uses sigmoid scoring and no auxiliary loss, while techniques like QK Normalization, a multi-token prediction (MTP) loss, and partial Rotary Positional Embeddings (RoPE) maintain stability across deep layers. The same design applies across the Ling mini 2.0, Ling flash 2.0, and Ling 1T models, ensuring scalability and coherence.
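
To make the extrapolation idea concrete, the sketch below fits a power law to a handful of small runs and predicts loss at a much larger compute budget. It is only an illustration under stated assumptions: the data points are synthetic (generated from a known curve, not Ling measurements), the pure form `loss = a * compute**(-b)` with no irreducible-loss term is a simplification, and `fit_power_law` is a hypothetical helper.

```python
import math


def fit_power_law(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Fit y = a * x**(-b) by least squares on (log x, log y); return (a, b)."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    n = len(lx)
    mean_x = sum(lx) / n
    mean_y = sum(ly) / n
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(lx, ly)) / sum(
        (x - mean_x) ** 2 for x in lx
    )
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope


# Synthetic "small-scale runs": compute budgets and losses generated from a known
# power law, purely for illustration. These are NOT results from Ling 2.0.
true_a, true_b = 12.0, 0.05
small_compute = [1e18, 3e18, 1e19, 3e19, 1e20]
small_loss = [true_a * c ** (-true_b) for c in small_compute]

a, b = fit_power_law(small_compute, small_loss)
predicted = a * 1e23 ** (-b)  # extrapolate three orders of magnitude past the fit range
print(f"fitted a={a:.3f}, b={b:.4f}, predicted loss at 1e23: {predicted:.4f}")
```

With real runs the points scatter around the line, so the useful check is how well a fit on the smallest runs predicts the largest run that was actually trained.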

Pretraining Strategy: The models are pretrained on a corpus exceeding 20 trillion tokens, beginning with a 4,000-token context window. The training data progressively emphasizes reasoning-intensive content such as mathematics and programming, which eventually constitutes nearly half of the dataset. A mid-training phase extends the context window to approximately 32,000 tokens on a curated 150-billion-token subset, followed by the injection of 600 billion tokens of high-quality chain-of-thought reasoning. The final stage stretches the context window to 128,000 tokens using the YaRN technique while preserving performance on shorter contexts. This pipeline ensures that long-context reasoning capabilities are built in during training rather than appended during fine-tuning.

Post-Training Alignment: The alignment process is bifurcated into capability enhancement and preference tuning. Initially, Decoupled Fine Tuning enables the model to alternate between rapid responses and in-depth reasoning based on system prompts. Subsequently, an evolutionary Chain-of-Thought (CoT) phase diversifies and enriches reasoning chains. Finally, sentence-level policy optimization using a Group Arena Reward mechanism fine-tunes outputs to align closely with human preferences. This multi-stage approach allows the base model to achieve strong performance in mathematics, coding, and instruction-following without unnecessarily inflating every response.

Infrastructure and Efficiency: Ling 2.0 models are trained natively using FP8 precision with robust safeguards, maintaining loss curves close to BF16 precision while improving hardware utilization by approximately 15%. More substantial speed gains, around 40%, stem from advanced heterogeneous pipeline parallelism, interleaved forward-backward execution, and partitioning strategies aware of the MTP block structure. Additionally, the Warmup Stable Merge technique replaces traditional learning rate decay by merging checkpoints, facilitating stable training at the trillion-parameter scale on existing hardware clusters.
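
The checkpoint-merging idea behind Warmup Stable Merge can be illustrated with a toy example. The article does not say how checkpoints are weighted, so the uniform element-wise average below is an assumption, as are the `merge_checkpoints` helper and the representation of a checkpoint as a dictionary of flat parameter lists.

```python
def merge_checkpoints(checkpoints: list[dict[str, list[float]]]) -> dict[str, list[float]]:
    """Return the element-wise mean of each parameter across checkpoints.

    Uniform weighting is an assumption of this sketch; other schemes are possible.
    """
    if not checkpoints:
        raise ValueError("need at least one checkpoint")
    merged = {}
    for name in checkpoints[0]:
        # Group the i-th value of this parameter from every checkpoint together.
        columns = zip(*(ckpt[name] for ckpt in checkpoints))
        merged[name] = [sum(vals) / len(checkpoints) for vals in columns]
    return merged


# Toy checkpoints, imagined as saved during the stable (constant learning rate) phase.
ckpts = [
    {"w": [1.0, 2.0], "b": [0.0]},
    {"w": [3.0, 4.0], "b": [0.5]},
    {"w": [5.0, 6.0], "b": [1.0]},
]
merged = merge_checkpoints(ckpts)
print(merged)
```

The intuition is that averaging weights from several points along a constant-learning-rate trajectory smooths out noise in a way similar to what a decay phase would do, without committing to a decay schedule in advance.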

Performance Insights

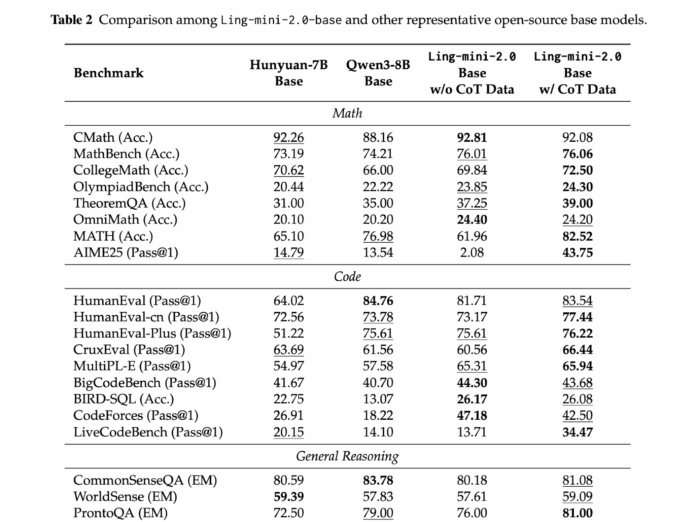

Across all model sizes, Ling 2.0 demonstrates competitive performance while keeping per-token computation low. The 16-billion-parameter model activates 1.4 billion parameters per token and achieves results comparable to dense models in the 7 to 8 billion parameter range. Ling flash 2.0 maintains the 1/32 activation ratio with 100 billion total parameters and 6.1 billion active per token, so its per-token compute tracks the active parameters rather than the total. The flagship Ling 1T model scales this design to one trillion parameters, activating about 50 billion parameters per token, preserving sparsity and extending the Ling Scaling Laws to the trillion-parameter scale. This consistency enables quality to scale predictably without costly retuning at each size.
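
A quick calculation with the figures quoted above shows how the share of parameters active per token compares with the 1/32 expert-level ratio. The parameter counts are the article's; the reading of the gap is a plausible interpretation rather than something the source states.

```python
# (total parameters, active parameters per token), in billions, as quoted in the article.
models = {
    "Ling mini 2.0": (16.0, 1.4),
    "Ling flash 2.0": (100.0, 6.1),
    "Ling 1T": (1000.0, 50.0),
}

expert_ratio = 8 / 256  # routed experts selected per token

active_fraction = {name: active / total for name, (total, active) in models.items()}
for name, frac in active_fraction.items():
    print(f"{name}: {frac:.2%} of parameters active (expert-level ratio {expert_ratio:.3%})")
```

In all three cases the parameter-level fraction sits above 1/32. A likely reason is that some components run for every token regardless of routing, such as attention, embeddings, and the shared expert, so the 1/32 figure describes routed experts rather than total parameters.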

Summary of Key Points

- The Ling 2.0 series is founded on a 1/32 activation sparse MoE architecture, optimized via Ling Scaling Laws to maintain 256 routed experts plus one shared expert across scales from 16 billion to 1 trillion parameters.

- The 16B model activates 1.4B parameters per token, matching the performance of 7B to 8B dense models while generating over 300 tokens per second in straightforward question-answering tasks on H20 hardware.

- The 100B Ling flash 2.0 model retains the same activation ratio, activating 6.1B parameters per token, providing a substantial capacity increase without a proportional rise in per-token compute.

- The 1T parameter flagship model features about 50B active parameters per token, supports a 128K token context window, and incorporates advanced post-training techniques like evolutionary CoT and sentence-level policy optimization to enhance reasoning efficiency.

- Efficiency improvements across all models arise from the synergy of sparse activation, FP8 precision training, and a unified training schedule, enabling quality to scale predictably without additional compute tuning.

Final Thoughts

The Ling 2.0 series exemplifies a comprehensive and scalable sparse MoE approach to large language models. By identifying a 1/32 activation ratio as optimal and standardizing the architecture with 256 routed experts plus one shared expert, the design achieves remarkable efficiency and reasoning capabilities from tens of billions to trillion-parameter scales. The alignment of training regimes, context extension, and preference optimization with this sparse activation framework ensures that complex tasks like mathematics, coding, and long-context understanding are not compromised. Coupled with FP8 training and sophisticated pipeline parallelism, Ling 2.0 makes trillion-parameter reasoning models feasible on current hardware, signaling a shift towards fixed sparsity as a scalable alternative to ever-growing dense compute.