Can a compact late interaction retriever index documents once and still deliver accurate cross-lingual search with fast inference? Liquid AI has released LFM2-ColBERT-350M, a compact late interaction retriever built for multilingual and cross-lingual search. Documents can be indexed in one language and queried in several others with high retrieval accuracy. The Liquid AI team reports inference speed on par with models less than half its size, which it attributes to the LFM2 architecture. The model is available through a Hugging Face demo and comes with a detailed model card for integration into retrieval-augmented generation (RAG) systems.

Understanding Late Interaction and Its Significance

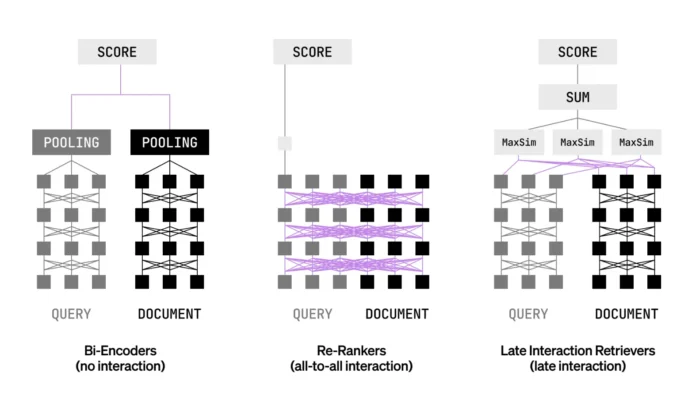

Most real-world search systems trade speed against accuracy: bi-encoders are fast but compress each text into a single vector and can lose precision, while cross-encoders are more accurate but must process every query-document pair jointly, which is computationally expensive. Late interaction retrieval sits between the two. Queries and documents are encoded independently, but at the token level. At query time, token embeddings are compared with a similarity function such as MaxSim, which preserves fine-grained token-level interactions without the cost of full cross-attention. Because documents are encoded independently of the query, their embeddings can be precomputed, which keeps query-time cost low while improving ranking precision over single-vector approaches. As a result, a late interaction model can serve as both first-stage retriever and ranker in a single pass.
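The MaxSim step described above can be sketched in a few lines of NumPy. This is an illustrative sketch, not Liquid AI's implementation: the names `l2_normalize` and `maxsim_score` and the toy embeddings are assumptions, and cosine similarity on L2-normalized vectors is assumed as the token-level similarity. For each query token, the function takes the highest similarity against any document token, then sums those maxima.

```python
import numpy as np


def l2_normalize(x: np.ndarray) -> np.ndarray:
    """Scale each row to unit length so dot products equal cosine similarity."""
    return x / np.linalg.norm(x, axis=1, keepdims=True)


def maxsim_score(query_emb: np.ndarray, doc_emb: np.ndarray) -> float:
    """Late interaction score for one query/document pair.

    query_emb has shape (num_query_tokens, dim);
    doc_emb has shape (num_doc_tokens, dim).
    """
    sim = l2_normalize(query_emb) @ l2_normalize(doc_emb).T  # (q_tokens, d_tokens)
    # Best-matching document token per query token, summed over query tokens.
    return float(sim.max(axis=1).sum())


# Toy 2-dimensional embeddings (hypothetical values, not model output).
query = np.array([[1.0, 0.0], [0.0, 1.0]])
doc_a = np.array([[2.0, 0.0], [0.0, 3.0], [1.0, 1.0]])  # matches both query tokens
doc_b = np.array([[1.0, 0.0], [-1.0, 0.0]])             # matches only the first

score_a = maxsim_score(query, doc_a)
score_b = maxsim_score(query, doc_b)
print(score_a, score_b)
```

Here `doc_a` scores higher than `doc_b` because every query token finds a close match among its tokens. The document matrices do not depend on the query, which is what makes precomputation possible.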

Technical Overview of LFM2-ColBERT-350M

LFM2-ColBERT-350M has 350 million parameters across 25 layers: 18 convolutional blocks, 6 attention blocks, and 1 dense output layer. It supports a context window of up to 32,000 tokens and uses a vocabulary of 65,536 tokens. Each token is mapped to a 128-dimensional output vector, and query and document token embeddings are compared with the MaxSim similarity function. Training uses BF16 precision for speed and memory efficiency. The model is released under the LFM Open License v1.0.
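Because a late interaction index stores one vector per document token rather than one per document, the 128-dimensional output size translates directly into storage cost. The back-of-the-envelope helper below is a sketch under stated assumptions: `index_size_bytes` is a hypothetical name, the corpus figures are invented for illustration, and embeddings are assumed to be stored uncompressed at 2 bytes per value. Production indexes commonly compress token vectors, so real footprints can be considerably smaller.

```python
def index_size_bytes(num_docs: int, avg_tokens_per_doc: int,
                     dim: int = 128, bytes_per_value: int = 2) -> int:
    """Uncompressed size of a token-level index: one dim-sized vector per token."""
    return num_docs * avg_tokens_per_doc * dim * bytes_per_value


# Hypothetical corpus: 1 million documents averaging 300 tokens each.
size = index_size_bytes(num_docs=1_000_000, avg_tokens_per_doc=300)
size_gb = size / 1e9  # decimal gigabytes
print(f"{size_gb:.1f} GB")
```

Under these assumptions the index comes to 76.8 GB, which shows why a small per-token dimension matters for token-level retrieval.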

Multilingual and Cross-Lingual Capabilities

LFM2-ColBERT-350M supports eight languages: English, Arabic, Chinese, French, German, Japanese, Korean, and Spanish. The evaluation additionally includes Italian and Portuguese, and the cross-lingual matrix of document and query languages is reported over nine languages. This coverage matters for deployments that serve several markets, where documents and queries are often in different languages.

Evaluation Methodology and Performance Highlights

Liquid AI extended the NanoBEIR benchmark with Japanese and Korean datasets to make the evaluation more representative of global language diversity. On this extended benchmark, LFM2-ColBERT-350M shows stronger multilingual retrieval than the baseline late interaction model, GTE-ModernColBERT-v1, which has 150 million parameters. The model achieves significant improvements in German, Arabic, Korean, and Japanese while maintaining strong performance in English.

Summary of Key Advantages

- Token-level scoring via MaxSim retains detailed interactions between query and document tokens, while allowing separate encoding to enable efficient precomputation of document embeddings.

- The model supports indexing documents in one language and retrieving them using queries in multiple languages, covering eight supported languages with evaluations spanning nine.

- On the extended NanoBEIR multilingual benchmark, LFM2-ColBERT-350M outperforms the previous late interaction baseline (GTE-ModernColBERT-v1) and sustains high English retrieval accuracy.

- Inference speed matches that of models less than half its size, which Liquid AI attributes to the LFM2 backbone architecture.
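
The first two points in the list, precomputed document embeddings and one index serving many queries, can be sketched as a tiny in-memory index. This is a toy illustration rather than the model's actual serving stack: the `LateInteractionIndex` class and its methods are hypothetical, the embeddings are hand-written stand-ins for encoder output, and all vectors are assumed to be L2-normalized already. In a real cross-lingual setup, the query matrices would come from encoding queries written in different languages with the same model.

```python
import numpy as np


class LateInteractionIndex:
    """Stores per-document token embeddings once; scores queries with MaxSim."""

    def __init__(self) -> None:
        self.doc_ids: list[str] = []
        self.doc_embs: list[np.ndarray] = []

    def add(self, doc_id: str, token_embs: np.ndarray) -> None:
        # The document side is computed ahead of time and reused for every query.
        self.doc_ids.append(doc_id)
        self.doc_embs.append(token_embs)

    def search(self, query_embs: np.ndarray, k: int = 2) -> list[tuple[str, float]]:
        scores = [
            float((query_embs @ doc.T).max(axis=1).sum())  # MaxSim per document
            for doc in self.doc_embs
        ]
        order = np.argsort(scores)[::-1][:k]  # highest score first
        return [(self.doc_ids[i], scores[i]) for i in order]


index = LateInteractionIndex()
index.add("doc-x", np.array([[1.0, 0.0], [0.0, 1.0]]))
index.add("doc-y", np.array([[0.0, 1.0]]))
index.add("doc-z", np.array([[-1.0, 0.0]]))

# Two different queries reuse the same index without re-encoding any document.
hits_1 = index.search(np.array([[1.0, 0.0], [0.0, 1.0]]))
hits_2 = index.search(np.array([[-1.0, 0.0]]), k=1)
print(hits_1, hits_2)
```

The brute-force loop over all documents is only workable at toy scale. Larger deployments would typically put an approximate nearest-neighbor index in front of the MaxSim scoring step.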

Final Thoughts

Liquid AI’s LFM2-ColBERT-350M applies late interaction with MaxSim: queries and documents are encoded separately, and token embeddings are scored at query time. This design preserves fine-grained token interactions and allows document embeddings to be precomputed at scale. Aimed at multilingual and cross-lingual retrieval, the model supports indexing once and querying in multiple languages, as evaluated on an extended NanoBEIR benchmark. The reported inference speed, on par with models less than half its size, reflects the efficiency of the LFM2 backbone. Overall, this compact late interaction retriever is a credible candidate for production use in multilingual retrieval-augmented generation systems.