Ant Group Unveils Ling-1T: A Trillion-Parameter AI Model Balancing Efficiency and Advanced Reasoning

Ant Group, the Chinese fintech company, has released Ling-1T, an open-source language model with one trillion parameters that the company says balances computational efficiency with advanced reasoning. The release, announced on October 9, extends the company’s growing AI ecosystem.

Ling-1T’s Remarkable Performance on Complex Mathematical Challenges

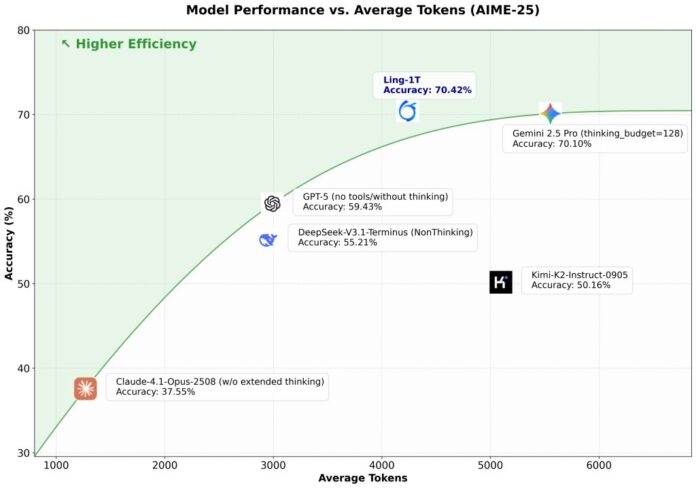

Ling-1T performs well on difficult mathematical problems, scoring 70.42% accuracy on the 2025 American Invitational Mathematics Examination (AIME) benchmark, a competition exam widely used as a demanding test of AI systems’ problem-solving skills. Ling-1T reaches this accuracy while generating just over 4,000 output tokens per problem on average, which Ant Group presents as evidence of a strong trade-off between answer quality and the compute spent producing it.

Innovative Dual-Track Strategy: Ling-1T and dInfer Framework

Alongside Ling-1T, Ant Group launched dInfer, a specialized inference framework tailored for diffusion language models. This dual release underscores the company’s commitment to exploring diverse AI architectures rather than relying on a single model type.

Diffusion language models differ fundamentally from the autoregressive models that power popular chatbots like ChatGPT. Instead of generating text sequentially, one token at a time, diffusion models produce many output tokens in parallel, a technique already well established in image and video generation but relatively new in natural language processing.
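
The difference in decoding order can be illustrated with a toy sketch. This is not dInfer’s or LLaDA-MoE’s actual implementation: `toy_model`, the confidence scores, and the `per_step` parameter are hypothetical stand-ins, and the sketch only counts how many model calls each strategy needs to fill a 16-token output.

```python
import random

MASK = "<mask>"

def toy_model(tokens, target, rng):
    """Hypothetical stand-in for a network: proposes a token and a confidence per position."""
    return [(target[i], rng.random()) for i in range(len(tokens))]

def autoregressive_decode(target, rng):
    """Left-to-right decoding: one model call per generated token."""
    out, calls = [], 0
    while len(out) < len(target):
        padded = out + [MASK] * (len(target) - len(out))
        proposals = toy_model(padded, target, rng)
        calls += 1
        out.append(proposals[len(out)][0])  # commit only the next position
    return out, calls

def diffusion_style_decode(target, rng, per_step=4):
    """Start fully masked; each model call commits the most confident masked positions."""
    tokens, calls = [MASK] * len(target), 0
    while MASK in tokens:
        proposals = toy_model(tokens, target, rng)
        calls += 1
        masked = [i for i, t in enumerate(tokens) if t == MASK]
        masked.sort(key=lambda i: proposals[i][1], reverse=True)
        for i in masked[:per_step]:  # several positions filled in parallel
            tokens[i] = proposals[i][0]
    return tokens, calls

target = [f"tok{i}" for i in range(16)]
ar_out, ar_calls = autoregressive_decode(target, random.Random(0))
dd_out, dd_calls = diffusion_style_decode(target, random.Random(0), per_step=4)
print(ar_calls, dd_calls)  # 16 model calls versus 4
```

In a real system the cost of each call and the quality of tokens committed in parallel both matter, so fewer calls does not automatically translate into a proportional speedup.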

Ant Group reports that dInfer significantly outperforms existing frameworks. On the HumanEval coding benchmark, Ant’s LLaDA-MoE diffusion model running on dInfer generated 1,011 tokens per second, compared with 91 tokens per second for Nvidia’s Fast-dLLM framework and 294 tokens per second for Alibaba’s Qwen-2.5-3B model served on vLLM. These figures point to dInfer’s potential to accelerate research and practical applications in diffusion-based language models.
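
To put the reported throughput figures in relative terms, the short calculation below divides the dInfer number by each baseline. The dictionary keys and the `speedup` helper are illustrative names; the numbers are the ones quoted above.

```python
# Reported HumanEval throughput in tokens per second, as quoted in the article.
throughput = {
    "LLaDA-MoE on dInfer": 1011,
    "Fast-dLLM": 91,
    "Qwen-2.5-3B on vLLM": 294,
}

def speedup(baseline, candidate="LLaDA-MoE on dInfer"):
    """Ratio of the candidate's throughput to the baseline's throughput."""
    return throughput[candidate] / throughput[baseline]

for name in ("Fast-dLLM", "Qwen-2.5-3B on vLLM"):
    print(f"{name}: {speedup(name):.1f}x")
```

By these figures, dInfer is roughly 11 times faster than Fast-dLLM and about 3.4 times faster than Qwen-2.5-3B on vLLM for this benchmark.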

Ant Group’s researchers emphasize that dInfer serves as both a practical toolkit and a standardized platform, fostering rapid innovation in the emerging domain of diffusion large language models (dLLMs).

Expanding the AI Model Portfolio: Ling, Ring, and Ming Series

Ling-1T is part of a broader suite of AI models developed by Ant Group, reflecting a strategic diversification across multiple AI capabilities. The company’s lineup includes:

- Ling Series: General-purpose models for standard language tasks that answer directly, without an extended reasoning ("thinking") phase.

- Ring Series: Engineered for advanced reasoning challenges, including the previously released Ring-1T-preview.

- Ming Series: Multimodal models capable of interpreting and generating content across images, text, audio, and video.

Additionally, Ant Group is experimenting with the LLaDA-MoE model, which uses a Mixture-of-Experts (MoE) architecture. This design activates only a subset of the model’s expert subnetworks for each input, reducing the computation needed per token without sacrificing performance.
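
The routing idea behind a Mixture-of-Experts layer can be sketched in a few lines. This is a generic top-k gating example, not LLaDA-MoE’s actual architecture: the four toy experts, the router logits, and `top_k=2` are all assumptions chosen for illustration.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x, router_logits, experts, top_k=2):
    """Run only the top_k highest-scoring experts and mix their outputs by gate weight."""
    gates = softmax(router_logits)
    chosen = sorted(range(len(experts)), key=lambda i: gates[i], reverse=True)[:top_k]
    norm = sum(gates[i] for i in chosen)  # renormalize over the selected experts
    output = sum(gates[i] / norm * experts[i](x) for i in chosen)
    return output, chosen

# Four hypothetical experts; in a real model each would be a feed-forward network.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x * x]
y, used = moe_forward(3.0, router_logits=[0.1, 2.0, -1.0, 2.0], experts=experts)
print(y, used)  # experts 1 and 3 are selected; the other two are never evaluated
```

Because the unselected experts are skipped entirely, the compute per input scales with `top_k` rather than with the total number of experts.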

He Zhengyu, Ant Group’s Chief Technology Officer, articulated the company’s vision: “We see Artificial General Intelligence (AGI) as a collective achievement for humanity’s future. Our open-source releases, including Ling-1T and Ring-1T-preview, embody our commitment to transparent and collaborative AI development.”

Strategic Positioning Amidst Technological Constraints

Ant Group’s recent AI advancements come at a time when Chinese tech firms face limitations in accessing cutting-edge semiconductor technologies due to international export controls. This environment has intensified the focus on algorithmic innovation and software optimization as key competitive advantages.

In parallel, ByteDance unveiled its Seed Diffusion Preview model in July, reporting a fivefold speed increase over traditional autoregressive models. Such developments indicate a growing industry interest in alternative AI architectures that prioritize efficiency and scalability.

Despite these promising innovations, autoregressive models remain dominant in commercial applications, largely due to their proven effectiveness in natural language understanding and generation, which are critical for customer-facing services.

Open-Source Commitment and Future Prospects

By releasing Ling-1T and the dInfer framework as open-source projects, Ant Group is fostering a collaborative ecosystem that contrasts with the proprietary approaches of some competitors. This openness is designed to accelerate innovation and establish Ant’s technologies as foundational tools for the global AI community.

Moreover, Ant Group is developing AWorld, a framework aimed at enabling continuous learning for autonomous AI agents, that is, systems capable of independently executing tasks on behalf of users. This initiative aligns with broader trends toward more adaptive and self-sufficient AI solutions.

The success of Ant Group’s AI ambitions will depend on real-world validation of its models’ performance and the extent to which developers adopt these tools as alternatives to established platforms. The open-source nature of Ling-1T is expected to facilitate this process by building an engaged user base invested in advancing the technology.

Overall, these developments highlight how major Chinese technology companies are navigating a dynamic AI landscape by embracing innovation across multiple fronts simultaneously.