Meta AI Unveils Advanced Frameworks for Evaluating Intelligent Agents in Dynamic Environments

Meta AI has released two tools for developing and evaluating AI agents: Agents Research Environments (ARE) and Gaia2. ARE is a modular simulation platform for creating and running complex agent tasks, while Gaia2 is a benchmark that tests agents in asynchronous, event-driven environments where they can take state-changing (write) actions. Together, they extend agent evaluation beyond sequential, turn-by-turn task execution.

Transitioning from Sequential to Asynchronous Agent Interactions

Conventional AI benchmarks typically freeze the environment while the agent processes information, which creates an unrealistic pause in the simulation. ARE instead decouples the agent’s reasoning time from the environment’s progression: the environment keeps evolving, triggering scheduled or random events such as notifications, reminders, or incoming messages, even as the agent deliberates. This setup tests skills that synchronous frameworks often overlook, such as acting proactively, handling interruptions, and meeting deadlines.
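To make the decoupling concrete, here is a minimal sketch of a simulated clock that keeps advancing while the agent "thinks". It is not the ARE API: `ScheduledEvent`, `SimulatedEnvironment`, and `run_agent_turn` are hypothetical names, and the event times are invented for illustration.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class ScheduledEvent:
    """Hypothetical event record; ordering uses the firing time only."""
    time: float
    description: str = field(compare=False)


class SimulatedEnvironment:
    """Toy environment (an assumption, not ARE itself) with its own clock."""

    def __init__(self, events):
        self.clock = 0.0
        self._queue = list(events)
        heapq.heapify(self._queue)  # earliest event first

    def advance(self, seconds):
        """Move the clock forward and return every event that fired meanwhile."""
        self.clock += seconds
        fired = []
        while self._queue and self._queue[0].time <= self.clock:
            fired.append(heapq.heappop(self._queue))
        return fired


def run_agent_turn(env, thinking_seconds):
    """The agent's deliberation costs simulated time, so events fire during it."""
    return [event.description for event in env.advance(thinking_seconds)]


env = SimulatedEnvironment([
    ScheduledEvent(5.0, "new email from Alice"),
    ScheduledEvent(12.0, "calendar reminder: standup"),
    ScheduledEvent(30.0, "message from Bob"),
])

# A slow first turn (15 simulated seconds) lets two events arrive mid-thought.
arrived_during_first_turn = run_agent_turn(env, thinking_seconds=15.0)
# The second turn ends at t=25, before the t=30 message fires.
arrived_during_second_turn = run_agent_turn(env, thinking_seconds=10.0)
```

In a synchronous benchmark the agent would see each event only when it asked for it; here a slower agent simply finds more has happened by the time it acts.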

Architectural Overview of the ARE Platform

At its core, ARE operates on a time-driven model where every interaction is treated as an event. The platform is organized around five fundamental components:

- Apps: Stateful interfaces representing tools the agent can interact with.

- Environments: Aggregations of apps, governing rules, and data that define the simulation context.

- Events: Recorded occurrences that drive the simulation forward.

- Notifications: Configurable signals that inform the agent about relevant changes or updates.

- Scenarios: Defined initial states combined with scheduled events and verification mechanisms.

Tools within ARE are categorized as either read-only or write-enabled, allowing precise validation of state-changing actions. The initial environment, named Mobile, emulates a smartphone ecosystem featuring apps like email, messaging, and calendar, providing a realistic testbed for agent capabilities.
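The read-only versus write-enabled distinction can be sketched as follows. This is an illustrative model rather than ARE's actual classes: `Tool`, `App`, `register`, `call`, and the calendar functions are all hypothetical. The point is that only write calls are logged, so a verifier can later inspect exactly the state-changing actions.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Tool:
    """Hypothetical tool descriptor."""
    name: str
    func: Callable[..., object]
    writes: bool  # True if the tool changes app state


@dataclass
class App:
    """Hypothetical stateful app exposing tools to the agent."""
    name: str
    state: dict = field(default_factory=dict)
    tools: dict = field(default_factory=dict)
    write_log: list = field(default_factory=list)

    def register(self, name, func, writes):
        self.tools[name] = Tool(name, func, writes)

    def call(self, tool_name, **kwargs):
        tool = self.tools[tool_name]
        result = tool.func(self.state, **kwargs)
        if tool.writes:
            # Record only state-changing calls for later validation.
            self.write_log.append((tool_name, kwargs))
        return result


def list_events(state):
    """Read-only: returns a copy of the stored calendar events."""
    return list(state.get("events", []))


def add_event(state, title, start):
    """Write: appends an event and returns the new event count."""
    state.setdefault("events", []).append({"title": title, "start": start})
    return len(state["events"])


calendar = App("calendar")
calendar.register("list_events", list_events, writes=False)
calendar.register("add_event", add_event, writes=True)

calendar.call("list_events")                                # not logged
calendar.call("add_event", title="Standup", start="09:00")  # logged
```

After these two calls, `calendar.write_log` holds a single entry for `add_event`; the read leaves no trace in the log.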

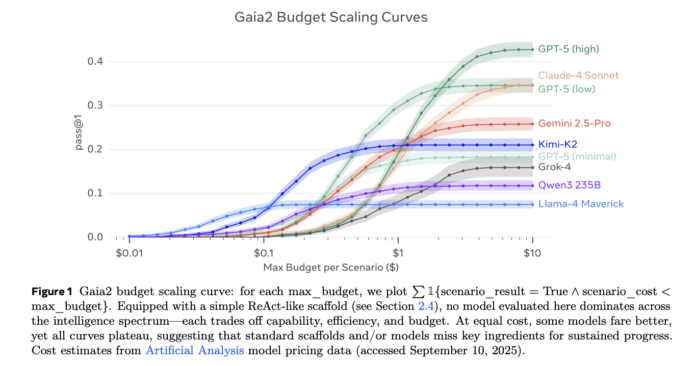

Gaia2: Benchmarking Agents in Realistic, Pressure-Driven Contexts

Gaia2 is designed to assess a broad spectrum of agent competencies under conditions that mirror real-world complexity. Key evaluation criteria include:

- Adaptability: How well agents respond to dynamic environmental feedback.

- Ambiguity Handling: Managing unclear or incomplete information effectively.

- Robustness to Noise: Maintaining performance despite irrelevant or misleading data.

- Time Sensitivity: Executing actions within strict temporal constraints.

- Multi-Agent Collaboration: Coordinating with sub-agents that simulate app functionalities.

Each scenario in Gaia2 is both verifiable and reproducible, utilizing deterministic seeds and oracle traces to ensure consistent benchmarking results.
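As a rough illustration of why deterministic seeds make scenarios reproducible, the sketch below derives a random event schedule from a seed. The function `build_event_schedule`, its parameters, and the event kinds are assumptions made for this example, not part of Gaia2.

```python
import random


def build_event_schedule(seed, n_events=3, horizon=60):
    """Return a sorted list of (time, kind) pairs derived only from the seed."""
    rng = random.Random(seed)  # local generator, independent of global state
    kinds = ["email", "message", "reminder"]
    schedule = [
        (rng.randint(1, horizon), rng.choice(kinds))
        for _ in range(n_events)
    ]
    return sorted(schedule)


# Two runs with the same seed produce the identical schedule.
run_a = build_event_schedule(seed=42)
run_b = build_event_schedule(seed=42)
```

Because each run builds its own `random.Random` instance, the schedule depends on the seed alone, so two evaluations of the same scenario face the same "random" events.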

Scope and Scale: Understanding the Gaia2 Scenario Dataset

The publicly available Gaia2 dataset comprises 800 distinct scenarios spread across 10 universes. The research experiments, however, used an expanded set of 1,120 annotated, verifiable scenarios in the Mobile environment, reflecting enhanced configurations for more rigorous testing. Practitioners typically access the 800-scenario version through platforms like Hugging Face, while the extended suite demonstrates the benchmark’s scalability.

Evaluating Agent Performance Amidst a Continuously Changing World

Gaia2 evaluates the sequence of write actions an agent performs, comparing each one against the oracle-defined actions argument by argument. Depending on the nature of the argument, the comparison is either a strict exact match (hard check) or a more flexible assessment by a large language model judge (soft check). The verification preserves causality and respects relative timing constraints. This avoids the misleading conclusions that can arise from judging agents solely by their final states, especially when intermediate steps may violate safety or policy guidelines.
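The hard-check and soft-check split described above might look like the following sketch. Everything here is an assumption for illustration: `WriteAction`, `HARD_ARGS`, and `verify` are hypothetical names, the soft check is a text-normalizing stand-in rather than a real LLM judge, and strict in-order pairing is a simplification of the causality and timing checks.

```python
from dataclasses import dataclass


@dataclass
class WriteAction:
    """Hypothetical record of one state-changing tool call."""
    tool: str
    args: dict


# Assumed split: these argument names need exact equality (hard check).
HARD_ARGS = {"recipient", "start"}


def normalize(text):
    """Lowercase and collapse whitespace."""
    return " ".join(text.lower().split())


def soft_match(expected, actual):
    """Stand-in for an LLM judge; a real judge would assess meaning."""
    return normalize(expected) == normalize(actual)


def action_matches(oracle, agent):
    if oracle.tool != agent.tool or oracle.args.keys() != agent.args.keys():
        return False
    for name, expected in oracle.args.items():
        actual = agent.args[name]
        if name in HARD_ARGS:
            if expected != actual:          # hard check: exact match
                return False
        elif not soft_match(str(expected), str(actual)):  # soft check
            return False
    return True


def verify(oracle_trace, agent_trace):
    """Pair actions in order, so a reordered trace fails even if complete."""
    if len(oracle_trace) != len(agent_trace):
        return False
    return all(action_matches(o, a) for o, a in zip(oracle_trace, agent_trace))


oracle = [
    WriteAction("send_email", {"recipient": "alice@example.com", "body": "Running late"}),
    WriteAction("add_event", {"start": "09:00", "title": "Standup"}),
]
good = [
    WriteAction("send_email", {"recipient": "alice@example.com", "body": "  running   LATE "}),
    WriteAction("add_event", {"start": "09:00", "title": "standup"}),
]
wrong_recipient = [
    WriteAction("send_email", {"recipient": "bob@example.com", "body": "Running late"}),
    WriteAction("add_event", {"start": "09:00", "title": "Standup"}),
]

passes = verify(oracle, good)
fails_hard_check = verify(oracle, wrong_recipient)
fails_ordering = verify(oracle, list(reversed(good)))
```

The `good` trace passes because free-text arguments only need to satisfy the soft check, while the wrong recipient fails the hard check and the reversed trace fails on ordering, even though its final state would contain the same email and event.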

Conclusion: Setting a New Standard for AI Agent Evaluation

Together, ARE and Gaia2 shift agent evaluation from static correctness to performance under continuous change and uncertainty. For AI systems intended for real-world deployment, handling asynchronous interactions, navigating ambiguity, tolerating noise, managing timing pressure, and coordinating with other agents are critical. This release provides a controllable simulation environment, a challenging and scalable benchmark, and a transparent evaluation framework for testing these capabilities.