Imagine delegating routine browser tasks to an agent that plans and executes predefined user interface (UI) actions on its own. Google AI has unveiled Gemini 2.5 Computer Use, a specialized variant of Gemini 2.5 that performs real UI interactions in live web browsers through a constrained action API. Available in public preview through Google AI Studio and Vertex AI, the model targets web automation and UI testing. Google reports human-evaluated gains on standard web and mobile control benchmarks, and a safety mechanism requests user approval before sensitive or high-risk actions.

Introducing the Model’s Core Capabilities

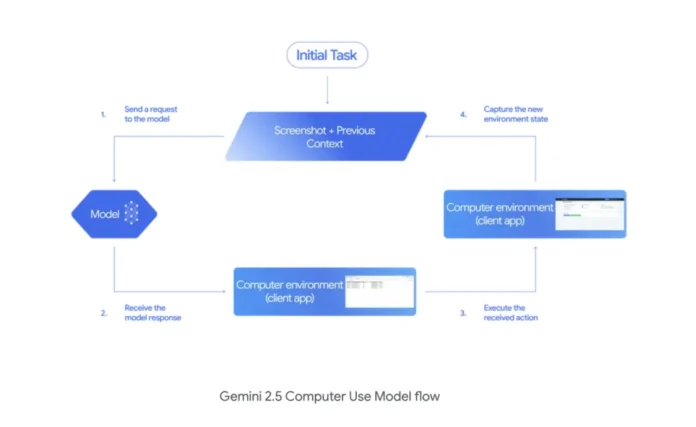

Developers interact with the model through a new computer_use tool, which returns function calls such as click_at, type_text_at, and drag_and_drop. A client-side executor such as Playwright or Browserbase carries out each call, then captures an updated screenshot and URL and sends them back to the model. The loop repeats until the task is complete or the safety system halts it. The model supports a predefined set of 13 UI actions: open_web_browser, wait_5_seconds, go_back, go_forward, search, navigate, click_at, hover_at, type_text_at, key_combination, scroll_document, scroll_at, and drag_and_drop. Developers can also add custom functions such as open_app, long_press_at, or go_home to cover surfaces beyond the browser, such as mobile or desktop environments.
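The propose, execute, and observe cycle described above can be sketched in a few lines of Python. This is a minimal illustration and not Google's client code: FunctionCall, Observation, run_agent_loop, the scripted model, the fake executor, and the argument names (url, x, y) are all hypothetical stand-ins, and only the 13 action names come from the article.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# The 13 predefined action names listed in the article.
SUPPORTED_ACTIONS = frozenset({
    "open_web_browser", "wait_5_seconds", "go_back", "go_forward",
    "search", "navigate", "click_at", "hover_at", "type_text_at",
    "key_combination", "scroll_document", "scroll_at", "drag_and_drop",
})


@dataclass
class FunctionCall:
    """Hypothetical stand-in for one action proposed by the model."""
    name: str
    args: dict = field(default_factory=dict)


@dataclass
class Observation:
    """Hypothetical stand-in for what the client sends back after acting."""
    url: str
    screenshot: bytes = b""


def run_agent_loop(
    goal: str,
    propose: Callable[[str, Optional[Observation]], Optional[FunctionCall]],
    execute: Callable[[FunctionCall], Observation],
    max_steps: int = 20,
) -> list[FunctionCall]:
    """Alternate between model proposals and client-side execution."""
    history: list[FunctionCall] = []
    observation: Optional[Observation] = None
    for _ in range(max_steps):
        call = propose(goal, observation)
        if call is None:  # the (stand-in) model signals that it is done
            break
        if call.name not in SUPPORTED_ACTIONS:
            raise ValueError(f"unsupported action: {call.name}")
        observation = execute(call)  # act, then capture the new state
        history.append(call)
    return history


# Scripted stand-ins so the sketch runs without a model or a browser.
def scripted_model(goal, observation):
    if observation is None:
        return FunctionCall("navigate", {"url": "https://example.com"})
    if observation.url == "https://example.com":
        return FunctionCall("click_at", {"x": 100, "y": 200})
    return None


def fake_executor(call):
    if call.name == "navigate":
        return Observation(url=call.args["url"])
    return Observation(url="https://example.com/next")


if __name__ == "__main__":
    steps = run_agent_loop("open the next page", scripted_model, fake_executor)
    print([step.name for step in steps])
```

In a real integration, the propose callback would wrap a model request and the execute callback would drive a browser. Supporting custom actions such as open_app would mean widening the allowed set rather than rejecting unknown names.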

Scope, Limitations, and Safety Features

The model is optimized primarily for web browsers. It is not yet optimized for desktop operating system-level control, but it adapts to mobile contexts when custom actions are added to the same execution loop. A built-in safety monitor guards against unauthorized or potentially harmful operations, such as making financial transactions, sending messages, or accessing confidential information, by either blocking the action outright or requiring explicit user confirmation before it proceeds.
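A client can mirror the block-or-confirm behavior described above with a small gate in front of its executor. The sketch below is an assumption about how such a gate might look. The article does not say how the API reports a safety decision, so SafetyDecision, gated_execute, and the callbacks are hypothetical names.

```python
from enum import Enum
from typing import Callable


class SafetyDecision(Enum):
    """Hypothetical labels for the outcomes the article describes."""
    ALLOW = "allow"
    REQUIRE_CONFIRMATION = "require_confirmation"
    BLOCK = "block"


def gated_execute(
    action_name: str,
    decision: SafetyDecision,
    execute: Callable[[str], str],
    confirm: Callable[[str], bool],
) -> str:
    """Run an action only if the safety decision (and the user) permit it."""
    if decision is SafetyDecision.BLOCK:
        return "blocked"  # never executed, regardless of user input
    if decision is SafetyDecision.REQUIRE_CONFIRMATION and not confirm(action_name):
        return "declined"  # the user refused, so nothing is executed
    return execute(action_name)


if __name__ == "__main__":
    def run(name: str) -> str:
        return f"executed {name}"

    def always_yes(name: str) -> bool:
        return True

    def always_no(name: str) -> bool:
        return False

    print(gated_execute("click_at", SafetyDecision.ALLOW, run, always_no))
    print(gated_execute("type_text_at", SafetyDecision.REQUIRE_CONFIRMATION, run, always_yes))
    print(gated_execute("type_text_at", SafetyDecision.REQUIRE_CONFIRMATION, run, always_no))
    print(gated_execute("click_at", SafetyDecision.BLOCK, run, always_yes))
```

Keeping the confirmation callback separate from the executor means the same gate can be used with a terminal prompt, a dialog box, or an automated policy in tests.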

Performance Benchmarks and Accuracy

- Online-Mind2Web Benchmark: Achieves a 69.0% pass@1 rate based on majority-vote human judgments, as validated by the benchmark's organizers.

- Browserbase Evaluation: Under identical testing conditions, outperforms competing computer-use APIs in both accuracy and latency, with reported scores of 65.7% on Online-Mind2Web and 79.9% on WebVoyager.

- Latency vs. Quality Trade-off: Demonstrates approximately 70% accuracy with a median latency of around 225 seconds on the Browserbase Online-Mind2Web test harness, according to Google’s human-evaluated data.

- Mobile Adaptation (AndroidWorld): Reaches a 69.7% success rate using the same API loop, with custom mobile-specific actions added and browser-specific actions excluded.

Real-World Applications and Early Feedback

- Automated UI Test Recovery: Google’s payments platform team reports that the model repairs over 60% of previously failing automated UI test runs.

- Efficiency Gains: Early external users such as Poke.com report workflows running nearly 50% faster than with their previous best automation solution.

Summary and Outlook

Gemini 2.5 Computer Use, now in public preview through Google AI Studio and Vertex AI, offers a constrained API with 13 documented UI actions and requires a client-side executor to carry them out. Its reported results on web and mobile control benchmarks, together with the latency lead shown in Browserbase’s matched harness, make it a promising option for UI testing and web operations automation. The browser-centric design and built-in safety checks help keep task execution controlled, which should appeal to developers looking to streamline complex UI workflows.