Building an Interactive Machine Learning Pipeline with LangChain and XGBoost

This guide shows how to combine XGBoost's predictive modeling with LangChain, a framework for building conversational AI applications. We build a modular pipeline that generates a synthetic dataset, trains an XGBoost classifier, evaluates its performance, and visualizes the results, with each step wrapped as a LangChain tool. The approach illustrates how a conversational agent can drive the machine learning lifecycle, making the process both interactive and interpretable.

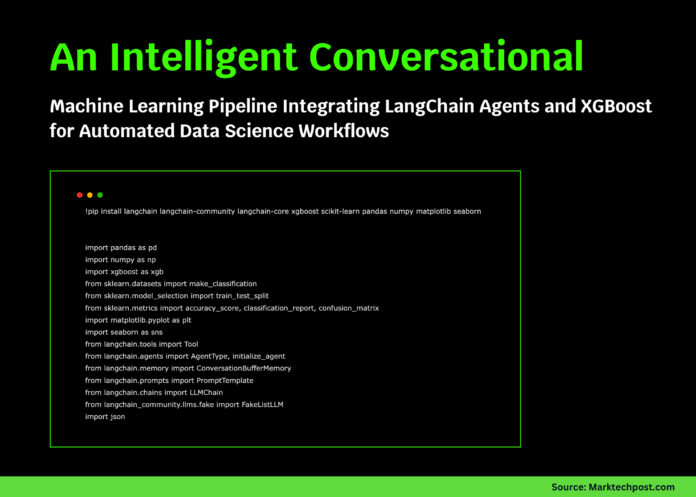

Setting Up the Environment and Dependencies

To begin, install and import the necessary libraries. LangChain provides the `Tool` abstraction used to expose each pipeline step to a conversational agent, while XGBoost and scikit-learn handle the machine learning tasks. pandas, NumPy, Matplotlib, and Seaborn support data manipulation and visualization.

```python
# Notebook/Colab cell; in a terminal, run the same command without the leading "!"
!pip install langchain-core xgboost scikit-learn pandas numpy matplotlib seaborn

import json

import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
import seaborn as sns
import xgboost as xgb
from sklearn.datasets import make_classification
from sklearn.metrics import accuracy_score, classification_report, confusion_matrix
from sklearn.model_selection import train_test_split

# Tool is the only LangChain class the code below uses
from langchain_core.tools import Tool
```
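Because LangChain has reorganized its packages across releases, it can be worth confirming which versions actually ended up installed before running the rest of the code. The following is a minimal, standard-library-only sketch using `importlib.metadata`; the helper name `report_versions` is hypothetical and not part of the tutorial.

```python
from importlib.metadata import PackageNotFoundError, version


def report_versions(packages):
    """Return {distribution name: installed version, or None if missing}."""
    found = {}
    for name in packages:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None  # not installed in this environment
    return found


if __name__ == "__main__":
    pkgs = ["langchain-core", "xgboost", "scikit-learn", "pandas",
            "numpy", "matplotlib", "seaborn"]
    for pkg, ver in report_versions(pkgs).items():
        print(f"{pkg:15s} {ver or 'NOT INSTALLED'}")
```

Missing packages are reported as `None` instead of raising, so the check can run before anything else is installed.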

Data Generation and Preprocessing with DataManager

The `DataManager` class creates a synthetic classification dataset and prepares it for modeling. It uses scikit-learn's `make_classification` to generate binary-labeled data with a configurable number of samples and features, then holds out 20% of the rows as a test set with `train_test_split`. It can also return a JSON summary of the dataset, including sample counts and the class distribution in each split.

```python
class DataManager:
    """Handles synthetic dataset creation and preprocessing."""

    def __init__(self, n_samples=1000, n_features=20, random_state=42):
        self.n_samples = n_samples
        self.n_features = n_features
        self.random_state = random_state
        self.X_train, self.X_test, self.y_train, self.y_test = None, None, None, None
        self.feature_names = [f"feature_{i}" for i in range(n_features)]

    def generate_data(self):
        """Create a synthetic binary classification dataset and split it 80/20."""
        X, y = make_classification(
            n_samples=self.n_samples,
            n_features=self.n_features,
            n_informative=15,  # informative + redundant must not exceed n_features
            n_redundant=5,
            random_state=self.random_state,
        )
        self.X_train, self.X_test, self.y_train, self.y_test = train_test_split(
            X, y, test_size=0.2, random_state=self.random_state
        )
        return (
            f"Generated dataset with {self.X_train.shape[0]} training samples "
            f"and {self.X_test.shape[0]} testing samples."
        )

    def get_data_summary(self):
        """Provide a JSON summary of dataset statistics."""
        if self.X_train is None:
            return "Data has not been generated yet. Please run generate_data() first."
        summary = {
            "training_samples": self.X_train.shape[0],
            "testing_samples": self.X_test.shape[0],
            "feature_count": self.X_train.shape[1],
            "class_distribution": {
                "training": {0: int(np.sum(self.y_train == 0)), 1: int(np.sum(self.y_train == 1))},
                "testing": {0: int(np.sum(self.y_test == 0)), 1: int(np.sum(self.y_test == 1))},
            },
        }
        return json.dumps(summary, indent=2)
```
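The core of `DataManager` can be reproduced without the class wrapper, which makes it easy to inspect the split and the class counts directly. This sketch uses the same generator settings as the class defaults but adds `stratify=y`, an assumption not present in the tutorial, so that the training and test sets keep the same class proportions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split

# Same generator settings as DataManager's defaults
X, y = make_classification(
    n_samples=1000, n_features=20, n_informative=15, n_redundant=5, random_state=42
)

# stratify=y (not used by DataManager) preserves the class ratio in both splits
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

# np.bincount counts how many samples carry label 0 and label 1
train_counts = np.bincount(y_train, minlength=2)
test_counts = np.bincount(y_test, minlength=2)
print("train:", X_train.shape, "class counts:", train_counts.tolist())
print("test: ", X_test.shape, "class counts:", test_counts.tolist())
```

Without stratification, a random 20% split can leave the test set slightly more or less balanced than the training set; with only two classes and 1,000 samples the difference is usually small, but stratifying removes it.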

Comprehensive Model Management with XGBoostManager

The `XGBoostManager` class encapsulates the model's lifecycle, from training to evaluation and interpretation. It fits an XGBoost classifier with customizable hyperparameters, reports accuracy along with precision, recall, and F1-score for the positive class (label 1), and ranks features by XGBoost's built-in importance scores. It also draws a 2×2 figure: a confusion matrix, a bar chart of the most important features, a histogram comparing true and predicted labels, and a learning curve. The learning-curve panel is only illustrative: its accuracy values are hard-coded rather than measured from the trained model.

```python
class XGBoostManager:
    """Encapsulates training, evaluation, and visualization of an XGBoost model."""

    def __init__(self):
        self.model = None
        self.predictions = None
        self.accuracy = None

    def train_model(self, X_train, y_train, params=None):
        """Train the XGBoost classifier with specified parameters."""
        if params is None:
            params = {
                "max_depth": 6,
                "learning_rate": 0.1,
                "n_estimators": 100,
                "objective": "binary:logistic",
                "random_state": 42,
            }
        # ** unpacks the dict into keyword arguments for XGBClassifier
        self.model = xgb.XGBClassifier(**params)
        self.model.fit(X_train, y_train)
        return f"Model trained with {params['n_estimators']} boosting rounds."

    def evaluate_model(self, X_test, y_test):
        """Assess model performance on test data."""
        if self.model is None:
            return "Model has not been trained yet."
        self.predictions = self.model.predict(X_test)
        self.accuracy = accuracy_score(y_test, self.predictions)
        # output_dict=True keys the per-class metrics by label string ("0", "1")
        report = classification_report(y_test, self.predictions, output_dict=True)
        metrics = {
            "accuracy": float(self.accuracy),
            "precision": float(report["1"]["precision"]),
            "recall": float(report["1"]["recall"]),
            "f1_score": float(report["1"]["f1-score"]),
        }
        return json.dumps(metrics, indent=2)

    def get_feature_importance(self, feature_names, top_n=10):
        """Retrieve the top N features ranked by importance."""
        if self.model is None:
            return "Model has not been trained yet."
        importance_df = pd.DataFrame({
            "feature": feature_names,
            "importance": self.model.feature_importances_,
        }).sort_values(by="importance", ascending=False)
        return importance_df.head(top_n).to_string(index=False)

    def visualize_results(self, X_test, y_test, feature_names, top_n=10):
        """Generate visual plots to interpret model outcomes."""
        if self.model is None:
            print("Model training required before visualization.")
            return
        if self.predictions is None:
            # Predict here if evaluate_model() has not been called yet
            self.predictions = self.model.predict(X_test)

        fig, axes = plt.subplots(2, 2, figsize=(16, 12))

        # Confusion matrix
        cm = confusion_matrix(y_test, self.predictions)
        sns.heatmap(cm, annot=True, fmt="d", cmap="Blues", ax=axes[0, 0])
        axes[0, 0].set_title("Confusion Matrix")
        axes[0, 0].set_xlabel("Predicted Label")
        axes[0, 0].set_ylabel("True Label")

        # Feature importance: argsort is ascending, so the last top_n indices are the
        # most important features, and the largest bar ends up at the top
        importance = self.model.feature_importances_
        top_indices = np.argsort(importance)[-top_n:]
        axes[0, 1].barh(range(len(top_indices)), importance[top_indices])
        axes[0, 1].set_yticks(range(len(top_indices)))
        axes[0, 1].set_yticklabels([feature_names[i] for i in top_indices])
        axes[0, 1].set_title(f"Top {len(top_indices)} Feature Importances")
        axes[0, 1].set_xlabel("Importance Score")

        # True vs predicted label distribution
        axes[1, 0].hist([y_test, self.predictions], label=["Actual", "Predicted"],
                        bins=2, color=["skyblue", "salmon"])
        axes[1, 0].set_title("Distribution of True vs Predicted Labels")
        axes[1, 0].set_xticks([0, 1])
        axes[1, 0].legend()

        # Simulated learning curve: these accuracies are hard-coded for
        # illustration and are NOT computed from the trained model
        train_sizes = [0.2, 0.4, 0.6, 0.8, 1.0]
        train_accuracies = [0.68, 0.77, 0.83, 0.87, 0.91]
        axes[1, 1].plot(train_sizes, train_accuracies, marker="o", linestyle="-", color="green")
        axes[1, 1].set_title("Simulated Learning Curve")
        axes[1, 1].set_xlabel("Proportion of Training Data")
        axes[1, 1].set_ylabel("Accuracy")
        axes[1, 1].grid(True)

        plt.tight_layout()
        plt.show()
```

Integrating Machine Learning Operations into LangChain Tools

To make the pipeline controllable through conversation, we wrap each key operation in a LangChain `Tool`. Each tool pairs a function with a name and a plain-language description. An agent's language model reads those descriptions to decide which step to run, whether generating data, summarizing the dataset, training, evaluating, or analyzing feature importance.

```python
def create_ml_agent(data_manager, xgb_manager):
    """Wrap ML functions as LangChain tools for conversational interaction.

    Returns a list of Tool objects; an agent (or plain code) can then call them.
    """
    # A Tool passes one string input to func, so each lambda takes a
    # placeholder argument (_) and ignores it.
    tools = [
        Tool(
            name="GenerateData",
            func=lambda _: data_manager.generate_data(),
            description="Create a synthetic dataset for model training. No input required.",
        ),
        Tool(
            name="DataSummary",
            func=lambda _: data_manager.get_data_summary(),
            description="Retrieve summary statistics of the current dataset.",
        ),
        Tool(
            name="TrainModel",
            func=lambda _: xgb_manager.train_model(data_manager.X_train, data_manager.y_train),
            description="Train the XGBoost model using the generated dataset.",
        ),
        Tool(
            name="EvaluateModel",
            func=lambda _: xgb_manager.evaluate_model(data_manager.X_test, data_manager.y_test),
            description="Evaluate the trained model's performance on test data.",
        ),
        Tool(
            name="FeatureImportance",
            func=lambda _: xgb_manager.get_feature_importance(data_manager.feature_names, top_n=10),
            description="List the top 10 features contributing to the model.",
        ),
    ]
    return tools
```

Executing the End-to-End Pipeline

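The `run_tutorial()` function orchestrates the whole process. It creates the two managers, builds the tools, and then calls each tool's function directly, in a fixed order, to generate the dataset, summarize it, train and evaluate the model, list the top features, and plot the results. No language model chooses the steps in this run; the tools are the point where an agent would plug in. The function finishes by printing a short summary of takeaways.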

```python
def run_tutorial():
    """Run the full LangChain and XGBoost integration tutorial."""
    print("=" * 80)
    print("LANGCHAIN & XGBOOST INTEGRATION WORKFLOW")
    print("=" * 80)

    data_mgr = DataManager(n_samples=1000, n_features=20)
    xgb_mgr = XGBoostManager()
    tools = create_ml_agent(data_mgr, xgb_mgr)

    # Each tool's function is called directly with an empty input string,
    # in a fixed order; no language model chooses the steps here.
    print("\nStep 1: Generating Synthetic Dataset...")
    print(tools[0].func(""))

    print("\nStep 2: Dataset Overview:")
    print(tools[1].func(""))

    print("\nStep 3: Training the XGBoost Model...")
    print(tools[2].func(""))

    print("\nStep 4: Model Evaluation Results:")
    print(tools[3].func(""))

    print("\nStep 5: Identifying Key Features:")
    print(tools[4].func(""))

    print("\nStep 6: Visualizing Model Performance...")
    xgb_mgr.visualize_results(data_mgr.X_test, data_mgr.y_test, data_mgr.feature_names)

    print("\n" + "=" * 80)
    print("WORKFLOW COMPLETED SUCCESSFULLY!")
    print("=" * 80)

    print("\nSummary of Learnings:")
    print("- LangChain tools enable conversational control over ML tasks.")
    print("- XGBoost excels in gradient boosting for classification.")
    print("- Agent-driven pipelines simplify complex ML workflows.")
    print("- Visualization aids in interpreting model behavior.")


if __name__ == "__main__":
    run_tutorial()
```
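The tutorial's driver fixes the order of the steps in code. In an agent setup, a language model instead emits text naming the next tool, and an executor parses that text and dispatches the call. The standard-library-only sketch below imitates that loop with a scripted list of model replies in the ReAct-style `Action:` / `Action Input:` format. It is a hypothetical illustration of the dispatch idea, not LangChain's implementation, and the tool functions are stand-ins that return the messages the real tools would produce with the tutorial's defaults.

```python
import re

# Hypothetical stand-ins for the tutorial's tools: name -> callable taking one string
TOOLS = {
    "GenerateData": lambda _: "Generated dataset with 800 training samples and 200 testing samples.",
    "TrainModel": lambda _: "Model trained with 100 boosting rounds.",
}

# Scripted "model" replies, in the order an LLM might produce them
SCRIPTED_REPLIES = [
    "Thought: I need data first.\nAction: GenerateData\nAction Input: ",
    "Thought: Now train the model.\nAction: TrainModel\nAction Input: ",
    "Final Answer: The model is trained.",
]

# Captures the tool name and everything after "Action Input:"
ACTION_RE = re.compile(r"Action:\s*(\S+)\s*\nAction Input:\s*(.*)", re.DOTALL)


def run_scripted_agent(replies, tools):
    """Dispatch each scripted reply to the named tool until a Final Answer appears."""
    transcript = []
    for reply in replies:
        if reply.startswith("Final Answer:"):
            transcript.append(("final", reply[len("Final Answer:"):].strip()))
            break
        match = ACTION_RE.search(reply)
        if match is None:
            raise ValueError(f"Could not parse reply: {reply!r}")
        name, tool_input = match.group(1), match.group(2).strip()
        observation = tools[name](tool_input)  # KeyError if the model names an unknown tool
        transcript.append((name, observation))
    return transcript


for step, result in run_scripted_agent(SCRIPTED_REPLIES, TOOLS):
    print(f"{step}: {result}")
```

In a real agent, each observation would be appended to the prompt before the model produces its next reply; here the replies are fixed in advance, so only the parsing and dispatch are shown.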

Final Thoughts

This tutorial builds a working machine learning pipeline on XGBoost and exposes each of its steps as a LangChain tool. With those tools in place, LangChain can serve as an interactive interface: once an agent is connected, users can carry out data synthesis, model training, and evaluation through natural language. Combining language model orchestration with conventional machine learning in this way can streamline experimentation and make data science workflows more transparent and accessible.