Salesforce AI Research has introduced CoDA-1.7B, a diffusion-based language model built for code generation. Unlike autoregressive models, which predict tokens one at a time from left to right, CoDA denoises the whole sequence using bidirectional context and can update multiple tokens in parallel. The team has released both Base and Instruct checkpoints, along with an end-to-end framework for training, evaluation, and deployment.

Architecture and Training Methodology

CoDA uses a 1.7-billion-parameter architecture adapted to discrete diffusion over text sequences. The model iteratively refines masked sequences with full-sequence attention, which gives it native infilling and non-autoregressive decoding. The pipeline has three stages: pre-training with bidirectional masking, supervised fine-tuning, and progressive denoising at inference time. The release includes reproducible scripts for TPU-based pre-training, GPU fine-tuning, and evaluation.
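To make the masking idea concrete, here is a toy sketch of how a masked discrete diffusion training example can be built: sample a noise level, mask each token with that probability, and train a denoiser to recover the originals at the masked positions. This is an illustration of the general technique, not CoDA's training code. The `MASK` symbol, the uniform noise schedule, and the function names are all assumptions made for the example.

```python
import random

MASK = "<mask>"  # hypothetical mask symbol; the real tokenizer's token may differ


def corrupt(tokens, mask_ratio, rng):
    """Forward (noising) step: replace each token with MASK independently
    with probability mask_ratio."""
    return [MASK if rng.random() < mask_ratio else tok for tok in tokens]


def training_example(tokens, rng):
    """Build one denoising example: the noisy input, the clean targets, and
    the masked positions a loss would typically be computed on."""
    mask_ratio = rng.uniform(0.0, 1.0)  # assumed uniform noise schedule
    noisy = corrupt(tokens, mask_ratio, rng)
    masked_positions = [i for i, tok in enumerate(noisy) if tok == MASK]
    return noisy, tokens, masked_positions


if __name__ == "__main__":
    rng = random.Random(0)
    code = ["def", "add", "(", "a", ",", "b", ")", ":", "return", "a", "+", "b"]
    noisy, target, positions = training_example(code, rng)
    print(noisy)
    print(positions)
```

Because any position can be masked, the denoiser sees context on both sides of every gap, which is what makes infilling a natural use of this kind of model.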

Distinctive Features of CoDA-1.7B

- Bidirectional Contextual Generation: The diffusion denoising process eliminates the need for a fixed token generation order, allowing the model to consider the entire sequence context simultaneously.

- Confidence-Guided Sampling: Employing an entropy-based decoding technique, CoDA offers a flexible balance between output quality and generation speed.

- Open-Source Training Pipeline: The release includes deployment scripts and a command-line interface (CLI) to facilitate seamless model training and serving.
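The confidence-guided sampling described above can be sketched as follows. At each denoising step, the model yields a probability distribution for every masked position; positions with low entropy are the ones the model is most sure about, so they are committed first. This is a minimal sketch of entropy-ranked unmasking in general. How CoDA actually applies entropy is not specified here, and the function names and data layout are assumptions.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def select_positions(distributions, masked, k):
    """Return the k masked positions with the lowest predictive entropy,
    i.e. the positions the model is most confident about."""
    ranked = sorted(masked, key=lambda i: entropy(distributions[i]))
    return sorted(ranked[:k])


def commit(tokens, distributions, vocab, masked, k):
    """Fill the k most confident masked positions with their argmax token;
    the remaining masked positions are left for later steps."""
    out = list(tokens)
    for i in select_positions(distributions, masked, k):
        best = max(range(len(vocab)), key=lambda j: distributions[i][j])
        out[i] = vocab[best]
    return out
```

Committing more positions per step (a larger `k`) finishes in fewer passes but accepts less certain predictions, which is the quality versus speed trade-off the bullet refers to.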

Benchmark Performance and Comparisons

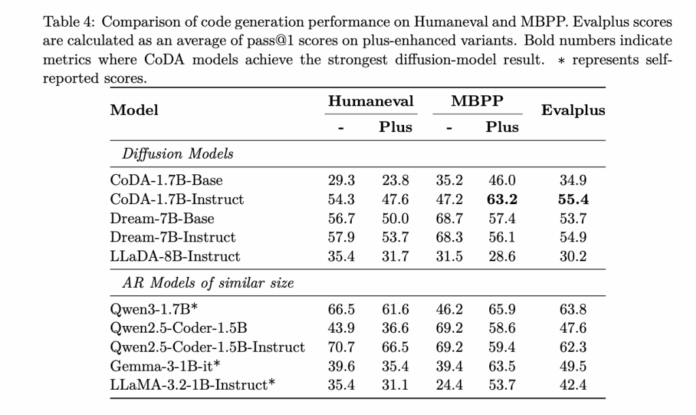

CoDA-1.7B-Instruct posts pass@1 scores of 54.3% on HumanEval, 47.6% on HumanEval+, 47.2% on MBPP, 63.2% on MBPP+, and an aggregate 55.4% on EvalPlus (a figure that matches the mean of the HumanEval+ and MBPP+ scores). Despite its 1.7B parameter count, CoDA comes close to larger diffusion models such as Dream-7B-Instruct, which scores 57.9% on HumanEval. The gap of 3.6 points on HumanEval is small given that Dream-7B-Instruct has roughly four times as many parameters.

Inference Dynamics and Efficiency

Generation cost is driven mainly by the number of diffusion steps. CoDA exposes several adjustable parameters (STEPS, ALG="entropy", ALG_TEMP, and block length) that let users trade latency against output quality. Because tokens are updated in parallel under full attention, CoDA achieves lower wall-clock latency than larger diffusion models at a similar step budget, which suits applications that need faster responses.
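The link between step count and cost can be illustrated with a small sketch. Each diffusion step is one full forward pass, so a fixed step budget determines how many tokens must be committed per pass. The even split below is an assumption made for illustration; the schedule CoDA actually uses, and how it interacts with block length, may differ.

```python
def unmask_schedule(num_masked, steps):
    """Split num_masked tokens as evenly as possible across `steps`
    denoising passes; earlier passes absorb any remainder."""
    if steps <= 0:
        raise ValueError("steps must be positive")
    base, extra = divmod(num_masked, steps)
    return [base + 1 if i < extra else base for i in range(steps)]


if __name__ == "__main__":
    # Halving the step budget doubles the tokens committed per pass.
    print(unmask_schedule(128, 32))
    print(unmask_schedule(128, 16))
```

Under this simplification, lowering STEPS cuts the number of forward passes proportionally but forces the sampler to accept more tokens per pass, including ones it is less confident about.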

Deployment Options and Licensing

The release ships a FastAPI server that exposes OpenAI-compatible APIs, alongside an interactive CLI for local inference. Setup instructions and a start_server.sh script simplify deployment. The model checkpoints are hosted on Hugging Face under the Creative Commons BY-NC 4.0 license, which permits non-commercial use and research.
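Since the server is described as OpenAI-compatible, a client can in principle talk to it with a standard chat-completions request. The sketch below uses only the Python standard library. The base URL, port, and model id are hypothetical placeholders, and the `/v1/chat/completions` path is assumed from the OpenAI convention rather than confirmed for this server; check the repository's setup instructions for the real values.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000"  # hypothetical; use the address start_server.sh reports
MODEL_NAME = "CoDA-1.7B-Instruct"   # hypothetical; use the id the server actually exposes


def build_chat_request(prompt, base_url=BASE_URL, model=MODEL_NAME):
    """Build an OpenAI-style chat-completions POST request (not yet sent)."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt):
    """Send the request and return the first completion's text.
    Requires a running server at BASE_URL."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With a server running, `ask("Write a Python function that reverses a string.")` would return the generated code as a string, assuming the response follows the OpenAI schema.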

Summary and Insights

CoDA-1.7B shows that discrete diffusion can be competitive for code generation at a small scale. Its combination of bidirectional denoising, parallel token updates, and a reproducible training-to-serving pipeline makes it a useful reference model. Its benchmark results come close to those of 7B-parameter diffusion models, and its configurable decoding parameters give practical control over inference latency. The open availability of weights and deployment tools lowers the barrier to experimentation, within the limits of the non-commercial license.