Failures in Retrieval-Augmented Generation (RAG) systems predominantly stem from the retrieval phase rather than the generation step. Traditional text-centric workflows convert PDFs into plain text, which often strips away crucial layout information, table formats, and figure references. This degradation in document structure leads to diminished recall and precision even before large language models (LLMs) process the data. Vision-RAG, which retrieves rendered document pages using vision-language embeddings, directly addresses these challenges and demonstrates significant improvements across visually complex datasets.

Understanding Pipeline Architectures and Their Limitations

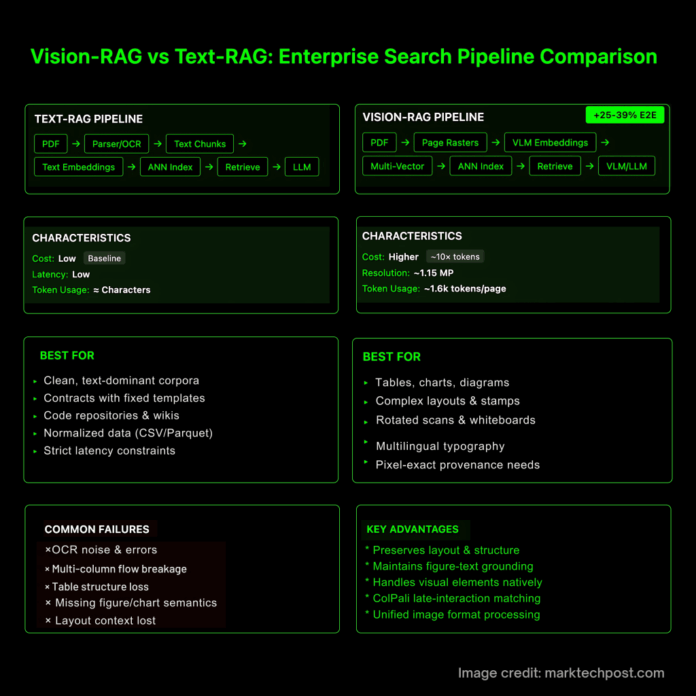

Text-based RAG pipelines: These typically involve converting PDFs through OCR or parsers into text segments, generating text embeddings, indexing with approximate nearest neighbor (ANN) methods, and then retrieving relevant chunks for LLM consumption. Common pitfalls include OCR inaccuracies, disruption of multi-column layouts, loss of table cell hierarchies, and missing semantic links to figures or charts. These issues are well-documented in benchmarks focused on table understanding and document visual question answering (DocVQA).

Vision-based RAG pipelines: Instead of extracting text, these systems rasterize PDF pages into images, embed them with vision-language models (VLMs), often as multi-vector representations scored by late interaction, and index the results for retrieval. The retrieved high-fidelity page crops or full pages are then passed to a VLM or LLM. This approach preserves the original layout and figure-text relationships, as demonstrated by recent frameworks such as ColPali, VisRAG, and VDocRAG.
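
Late-interaction scoring is easier to see in code. The sketch below is a minimal NumPy version of MaxSim, the ColBERT-style scoring that ColPali applies to page images: each query-token embedding is matched to its most similar page-patch embedding, and those per-token maxima are summed. It assumes embeddings are already L2-normalized and uses random toy vectors instead of a real model; the sizes (128-dimensional vectors, about 1,024 patches per page) are illustrative assumptions.

```python
import numpy as np


def l2_normalize(x: np.ndarray) -> np.ndarray:
    """Scale each row to unit length so dot products become cosine similarities."""
    return x / np.linalg.norm(x, axis=-1, keepdims=True)


def maxsim_score(query_vecs: np.ndarray, page_vecs: np.ndarray) -> float:
    """Late-interaction (MaxSim) relevance of one page to one query.

    query_vecs: (n_query_tokens, dim), one embedding per query token.
    page_vecs:  (n_patches, dim), one embedding per image patch.
    """
    sim = query_vecs @ page_vecs.T          # (n_query_tokens, n_patches)
    return float(sim.max(axis=1).sum())     # best patch per token, summed


def rank_pages(query_vecs: np.ndarray,
               pages: list[np.ndarray]) -> tuple[list[int], list[float]]:
    """Return page indices sorted by descending MaxSim score, plus the raw scores."""
    scores = [maxsim_score(query_vecs, p) for p in pages]
    order = sorted(range(len(pages)), key=lambda i: scores[i], reverse=True)
    return order, scores


if __name__ == "__main__":
    # Toy data standing in for real VLM embeddings (hypothetical sizes).
    rng = np.random.default_rng(0)
    query = l2_normalize(rng.normal(size=(12, 128)))
    pages = [l2_normalize(rng.normal(size=(1024, 128))) for _ in range(3)]
    order, scores = rank_pages(query, pages)
    print("ranking:", order, "scores:", [round(s, 3) for s in scores])
```

Because every page keeps many vectors, storage and scoring cost grow with the patch count; this is one reason MaxSim is often run as a reranker over a shortlist rather than over the full corpus.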

Empirical Evidence Supporting Vision-RAG

- Effectiveness and simplicity of document-image retrieval: ColPali’s method of embedding page images combined with late-interaction matching outperforms state-of-the-art text-based pipelines on the ViDoRe benchmark, while maintaining end-to-end trainability.

- Quantifiable end-to-end performance gains: VisRAG reports a 25-39% end-to-end performance gain over traditional text-based RAG pipelines on multimodal documents when both retrieval and generation use VLMs.

- Unified image representation enhances generalization: VDocRAG keeps all documents, including tables, charts, and slide decks, in a single image format, which avoids parser-induced information loss and improves generalization across document types. It also introduces OpenDocVQA, a new benchmark for open-domain document visual question answering.

- Resolution critically impacts reasoning: Advanced VLMs with high-resolution capabilities (e.g., Qwen2-VL and Qwen2.5-VL) achieve state-of-the-art results on DocVQA, MathVista, and MTVQA datasets, highlighting the importance of preserving fine details such as ticks, superscripts, stamps, and small fonts.
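
Resolution decisions start at rasterization time. As a hedged sketch (plain arithmetic, no rendering library assumed), the helpers below convert a page size and DPI into pixel dimensions and megapixels, and find the largest DPI that fits a megapixel budget. The US Letter size (8.5 by 11 inches) and the 1.15-megapixel budget are example inputs, not recommendations, and the function names are hypothetical.

```python
import math


def page_pixels(width_in: float, height_in: float, dpi: int) -> tuple[int, int]:
    """Pixel dimensions of a page rendered at the given DPI."""
    return round(width_in * dpi), round(height_in * dpi)


def megapixels(width_px: int, height_px: int) -> float:
    """Pixel count in millions."""
    return width_px * height_px / 1_000_000


def max_dpi_for_budget(width_in: float, height_in: float, budget_mp: float) -> int:
    """Largest integer DPI whose rendered area stays within budget_mp megapixels.

    Area grows with dpi**2, so dpi = sqrt(budget_pixels / page_area_in_sq_inches).
    Sub-pixel rounding in page_pixels() is ignored here.
    """
    return math.floor(math.sqrt(budget_mp * 1_000_000 / (width_in * height_in)))


if __name__ == "__main__":
    for dpi in (72, 150, 300):
        w, h = page_pixels(8.5, 11, dpi)
        print(f"{dpi:>3} DPI -> {w}x{h} px, {megapixels(w, h):.2f} MP")
    print("max DPI within 1.15 MP:", max_dpi_for_budget(8.5, 11, 1.15))
```

At 150 DPI a Letter page is already about 2.1 MP, and at 300 DPI it is roughly 8.4 MP, so full-page rendering at print resolution quickly outruns the image budgets discussed under cost considerations; for a 1.15 MP budget the helper returns 110 DPI, which is why cropping tends to beat downsampling when small fonts matter.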

Cost Considerations: Vision Inputs and Token Inflation

Vision inputs inflate token counts mainly through tiling, not necessarily through a higher per-token price. For GPT-4o-class models, an image's token count is approximately a fixed base cost plus a per-tile cost multiplied by the number of tiles. As a result, a one- to two-megapixel page can cost roughly ten times as much as a small text chunk. Anthropic recommends keeping images to about 1.15 megapixels (roughly 1,600 tokens) for responsiveness. Google’s Gemini 2.5 Flash-Lite charges the same per-token rate for text, image, and video input, yet a large image still consumes far more tokens than a short passage. The engineering implication is selective fidelity: send targeted crops first, downsampled pages second, and full-resolution pages only when nothing less will do.
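
The tiling arithmetic can be made concrete. The sketch below assumes OpenAI's published high-detail formula for GPT-4o at the time of writing (downscale to fit within 2048 by 2048, then to a shortest side of 768 px, then count 512-px tiles at 170 tokens each plus an 85-token base) and Anthropic's documented rule of thumb of about (width x height) / 750 tokens, with downscaling assumed once the long edge exceeds 1,568 px or the estimate exceeds about 1,600 tokens. Vendors can change these constants, other models use different ones, and the function names are hypothetical, so treat the outputs as estimates.

```python
import math


def gpt4o_image_tokens(width: int, height: int,
                       base: int = 85, per_tile: int = 170, tile: int = 512) -> int:
    """Approximate GPT-4o high-detail image tokens: base + per_tile * n_tiles.

    Constants are assumptions taken from OpenAI's docs at time of writing.
    Images are only ever downscaled here, never upscaled.
    """
    w, h = float(width), float(height)
    if max(w, h) > 2048:                     # step 1: fit within 2048 x 2048
        s = 2048 / max(w, h)
        w, h = round(w * s), round(h * s)
    if min(w, h) > 768:                      # step 2: shortest side -> 768 px
        s = 768 / min(w, h)
        w, h = round(w * s), round(h * s)
    n_tiles = math.ceil(w / tile) * math.ceil(h / tile)   # step 3: count tiles
    return base + per_tile * n_tiles


def claude_image_tokens(width: int, height: int,
                        max_edge: int = 1568, max_tokens: int = 1600) -> int:
    """Approximate Anthropic image tokens as (w * h) / 750 after assumed downscaling."""
    s = min(1.0, max_edge / max(width, height),
            math.sqrt(max_tokens * 750 / (width * height)))
    w, h = round(width * s), round(height * s)
    return round(w * h / 750)


if __name__ == "__main__":
    letter_150dpi = (1275, 1650)             # US Letter rendered at 150 DPI
    print("GPT-4o estimate:", gpt4o_image_tokens(*letter_150dpi))
    print("Claude estimate:", claude_image_tokens(*letter_150dpi))
```

Under these assumptions a Letter page at 150 DPI costs about 765 tokens on GPT-4o and about 1,600 on Claude after downscaling, while a crop covering only a table is considerably cheaper on both, which is the practical case for selective fidelity.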

Best Practices for Deploying Vision-RAG in Production

- Ensure cross-modal embedding alignment: Utilize encoders trained for joint text-image representation (e.g., CLIP-family or specialized VLM retrievers). Implement dual-index systems combining inexpensive text recall for broad coverage with vision-based reranking for precision. ColPali’s late-interaction (MaxSim-style) approach is a robust default for page image retrieval.

- Apply selective high-fidelity input feeding: Adopt a coarse-to-fine retrieval strategy. First, use BM25 or DPR to shortlist the top-k pages; next, rerank them with a vision retriever; finally, pass only the regions of interest (tables, charts, stamps) to the generator. This preserves critical visual details while keeping token inflation under control.

- Design for real-world document complexities:

• Tables: When parsing is unavoidable, use a table-structure model such as TATR (Table Transformer, trained on the PubTables-1M dataset); otherwise, prefer image-native retrieval to avoid structural loss.

• Charts and diagrams: Maintain resolution sufficient to capture tick marks and legends; validate on chart-specific VQA datasets.

• Whiteboards, rotated scans, and multilingual content: Rendering pages as images circumvents many OCR failures, supporting diverse scripts and orientations.

• Provenance tracking: Store page hashes and crop coordinates alongside embeddings to enable exact reproduction of visual evidence used in answers.
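
The coarse-to-fine recipe and the provenance advice fit together in one pipeline. The sketch below is illustrative only: it uses plain Okapi BM25 (with a Lucene-style non-negative idf) for cheap text recall, MaxSim over precomputed patch embeddings for the vision rerank, and then emits only labeled regions of interest, each tagged with a SHA-256 hash of the rendered page and its crop box. The `Page` and `Evidence` types, the region labels, and `coarse_to_fine` are hypothetical; in practice regions would come from a layout detector and embeddings from a retriever such as ColPali.

```python
import hashlib
import math
from collections import Counter
from dataclasses import dataclass

import numpy as np

Box = tuple[int, int, int, int]  # (x0, y0, x1, y1) in page pixels


@dataclass
class Page:                        # hypothetical container for one rendered page
    page_id: str
    text: str                      # cheap OCR/parser text, used only for recall
    image_bytes: bytes             # rendered page image (e.g. PNG bytes)
    patch_vecs: np.ndarray         # (n_patches, dim) vision embeddings
    regions: list[tuple[str, Box]]  # (label, bbox) from a layout detector


@dataclass
class Evidence:                    # what reaches the generator, with provenance
    page_id: str
    page_sha256: str
    label: str
    bbox: Box


def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Okapi BM25 over whitespace tokens, with Lucene-style non-negative idf."""
    toks = [d.lower().split() for d in docs]
    avgdl = sum(len(t) for t in toks) / len(toks)
    df = Counter(term for t in toks for term in set(t))
    n = len(toks)
    scores = []
    for t in toks:
        tf = Counter(t)
        s = 0.0
        for q in query.lower().split():
            if q in tf:
                idf = math.log(1 + (n - df[q] + 0.5) / (df[q] + 0.5))
                s += idf * tf[q] * (k1 + 1) / (tf[q] + k1 * (1 - b + b * len(t) / avgdl))
        scores.append(s)
    return scores


def maxsim(query_vecs: np.ndarray, page_vecs: np.ndarray) -> float:
    """Late-interaction score: best patch per query token, summed."""
    return float((query_vecs @ page_vecs.T).max(axis=1).sum())


def coarse_to_fine(query: str, query_vecs: np.ndarray, pages: list[Page],
                   k_text: int = 20, k_vision: int = 3,
                   wanted: tuple[str, ...] = ("table", "chart", "stamp")) -> list[Evidence]:
    # Stage 1: cheap lexical recall over parsed text.
    text = bm25_scores(query, [p.text for p in pages])
    shortlist = sorted(range(len(pages)), key=lambda i: text[i], reverse=True)[:k_text]
    # Stage 2: vision rerank of the shortlist only (late interaction is costlier).
    reranked = sorted(shortlist, key=lambda i: maxsim(query_vecs, pages[i].patch_vecs),
                      reverse=True)[:k_vision]
    # Stage 3: keep only regions of interest and attach provenance.
    evidence = []
    for i in reranked:
        page = pages[i]
        digest = hashlib.sha256(page.image_bytes).hexdigest()
        evidence.extend(Evidence(page.page_id, digest, label, bbox)
                        for label, bbox in page.regions if label in wanted)
    return evidence
```

Stage 1 keeps the more expensive MaxSim scoring off most of the corpus, and the stored digest plus bounding box let a reviewer re-render exactly the pixels an answer relied on.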

Comparative Overview: Text-RAG vs. Vision-RAG

| Aspect | Text-RAG | Vision-RAG |
|---|---|---|
| Ingestion Process | PDF → OCR/parser → text chunks → text embeddings → ANN index | PDF → page rasterization → VLM embeddings (multi-vector, late interaction) → ANN index |
| Common Failure Points | OCR errors, multi-column disruption, table-structure loss, missing figure semantics | Resolution limits, tiling artifacts, cross-modal misalignment |
| Retriever Representation | Single-vector text embeddings; lexical or cross-encoder reranking | Page-image embeddings with late interaction (MaxSim) over local regions |
| End-to-End Performance Gains | Baseline | +25-39% on multimodal documents (VisRAG) |
| Ideal Use Cases | Clean, text-heavy corpora; low-latency, cost-sensitive tasks | Visually complex documents with tables, charts, stamps, rotated scans, and multilingual text |
| Resolution Sensitivity | Bounded by OCR quality | High; fine details require high-resolution input and VLMs that support it |
| Cost Model | Token count roughly proportional to text length; generally inexpensive | Token count grows with image size and tiling; higher cost but better fidelity |
| Cross-Modal Alignment | Not required | Essential for mixed queries; text queries and page images must share a consistent embedding space |
| Key Benchmarks | DocVQA, PubTables-1M | ViDoRe, OpenDocVQA, plus the VisRAG and VDocRAG evaluation setups |
| Evaluation Strategy | Information retrieval metrics plus text QA; may overlook figure-text grounding | Joint retrieval and generation evaluation on visually rich datasets, covering layout and crop relevance |
| Operational Workflow | Single-stage retrieval; scalable and cost-effective | Coarse-to-fine: text recall → vision rerank → ROI crops; balances cost and fidelity |
| Recommended Scenarios | Standardized contracts, code repositories, normalized tabular data (CSV, Parquet) | Enterprise documents with complex layouts; compliance workflows requiring pixel-level provenance |
| Notable Implementations | DPR, BM25 with cross-encoder reranking | ColPali (ICLR 2025), VisRAG pipeline, VDocRAG unified image framework |

When to Opt for Text-RAG

- Datasets dominated by clean, structured text such as templated contracts, wikis, or source code repositories.

- Applications with stringent latency or budget constraints requiring rapid, low-cost responses.

- Scenarios where data is already normalized into structured formats like CSV or Parquet, eliminating the need for pixel-based retrieval.

Holistic Evaluation: Integrating Retrieval and Generation Metrics

Incorporate multimodal RAG benchmarks into your evaluation framework to capture failure modes that text-only metrics miss. Examples include M²RAG, which covers multimodal question answering, image captioning, fact verification, and image reranking; REAL-MM-RAG, which targets real-world multimodal retrieval; and RAG-Check, which scores both the relevance of retrieved context and the factual correctness of responses in multimodal settings. These benchmarks surface problems such as irrelevant image crops and figure-text mismatches that traditional text-only metrics often overlook.
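
A small harness shows why joint metrics matter. The sketch below is an illustration under stated assumptions, not the scoring protocol of M²RAG, REAL-MM-RAG, or RAG-Check: it reports page recall@k, plain answer accuracy, and a stricter grounded accuracy that also requires the cited crop to sit on the gold page and overlap the gold region with an IoU at or above a hypothetical 0.5 threshold. The `EvalItem` fields are hypothetical and assume answer correctness is judged elsewhere.

```python
from dataclasses import dataclass

Box = tuple[float, float, float, float]  # (x0, y0, x1, y1) in page pixels


def recall_at_k(retrieved: list[str], gold: set[str], k: int) -> float:
    """Fraction of gold pages found in the top-k retrieved pages."""
    return len(set(retrieved[:k]) & gold) / len(gold) if gold else 0.0


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


@dataclass
class EvalItem:
    retrieved_pages: list[str]   # ranked page ids from the retriever
    gold_page: str               # page holding the gold evidence
    gold_box: Box                # gold evidence region on that page
    cited_page: str              # page the generator cited
    cited_box: Box               # crop the generator cited
    answer_correct: bool         # judged elsewhere (exact match, LLM judge, etc.)


def evaluate(items: list[EvalItem], k: int = 5,
             iou_threshold: float = 0.5) -> dict[str, float]:
    n = len(items)
    recall = sum(recall_at_k(it.retrieved_pages, {it.gold_page}, k) for it in items) / n
    answer_acc = sum(it.answer_correct for it in items) / n
    grounded = sum(
        it.answer_correct
        and it.cited_page == it.gold_page
        and iou(it.cited_box, it.gold_box) >= iou_threshold
        for it in items
    ) / n
    return {"recall@k": recall, "answer_acc": answer_acc, "grounded_acc": grounded}


if __name__ == "__main__":
    items = [
        EvalItem(["p1", "p2"], "p1", (0, 0, 100, 50), "p1", (0, 0, 100, 50), True),
        EvalItem(["p3", "p4"], "p2", (0, 0, 100, 50), "p3", (0, 0, 100, 50), True),
    ]
    print(evaluate(items, k=2))
```

In the toy data both answers are judged correct, but the second cites a page the retriever should never have surfaced, so answer accuracy is 1.0 while grounded accuracy is 0.5: the kind of gap a text-only QA score hides.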

Conclusion

While Text-RAG remains a cost-effective and efficient choice for clean, text-centric datasets, Vision-RAG is the stronger approach for enterprise documents rich in visual elements such as tables, charts, stamps, rotated scans, and multilingual typography. Organizations that (1) align text and image embeddings, (2) supply high-fidelity visual input selectively, and (3) evaluate rigorously with multimodal benchmarks can expect better retrieval accuracy and downstream generation quality. Recent work supports these benefits: ColPali’s vision retriever, VisRAG’s reported 25-39% end-to-end performance gain, and VDocRAG’s unified image processing framework.