In real-time communication, such as live customer support, simultaneous dubbing, and instant translation, every millisecond counts. Traditional streaming Text-to-Speech (TTS) systems often introduce a noticeable pause before playback begins, because they wait to accumulate a segment of text before generating sound. VoXtream, developed by KTH’s Speech, Music and Hearing group, addresses this latency by starting to speak as soon as it receives the first word. It produces audio in 80-millisecond frames and reaches a first-packet latency (FPL) of 102 milliseconds on an NVIDIA A100 GPU with PyTorch compilation.

Understanding Full-Stream TTS Versus Output-Streaming

Most conventional output-streaming TTS models require the entire input text before starting audio synthesis, causing a delay as the system buffers the full sentence or phrase. In contrast, full-stream TTS systems like VoXtream process text incrementally, word by word, as it arrives from a language model, generating audio in real time without waiting for the complete input. This approach eliminates input-side buffering and maintains low computational overhead per audio frame, focusing on minimizing the delay from the very first word rather than just optimizing steady-state throughput.
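The difference between the two modes can be illustrated with a toy sketch. Nothing here is VoXtream code: `output_streaming`, `full_stream`, `logged_source`, and `trace` are hypothetical names, and the "audio" is a placeholder string. The sketch only records the order in which text is consumed and audio is emitted.

```python
from typing import Callable, Iterable, Iterator, List


def logged_source(words: List[str], log: List[str]) -> Iterator[str]:
    """Yield words one at a time, recording when each one is consumed."""
    for word in words:
        log.append(f"in:{word}")
        yield word


def output_streaming(words: Iterable[str]) -> Iterator[str]:
    """Toy output-streaming TTS: buffer all text, then stream audio out."""
    buffered = list(words)  # blocks until the text source is exhausted
    for word in buffered:
        yield f"audio({word})"


def full_stream(words: Iterable[str]) -> Iterator[str]:
    """Toy full-stream TTS: emit audio for each word as soon as it arrives."""
    for word in words:
        yield f"audio({word})"


def trace(synth: Callable[[Iterable[str]], Iterator[str]],
          words: List[str]) -> List[str]:
    """Return the interleaved order of text arrivals and audio emissions."""
    log: List[str] = []
    for chunk in synth(logged_source(words, log)):
        log.append(f"out:{chunk}")
    return log


if __name__ == "__main__":
    text = ["hello", "there", "world"]
    print(trace(output_streaming, text))
    print(trace(full_stream, text))
```

In the first trace every `in:` entry precedes the first `out:` entry, which is the input-side buffering described above. In the second trace, audio for the first word is emitted before the second word has been read.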

How VoXtream Achieves Immediate Speech Generation

The key innovation behind VoXtream’s rapid onset is its use of a dynamic phoneme look-ahead mechanism embedded within an incremental Phoneme Transformer (PT). While the PT can peek ahead up to 10 phonemes to ensure natural prosody and intonation, it does not delay speech generation waiting for this context. Instead, it begins producing audio as soon as the first word is available, avoiding the fixed look-ahead windows that typically introduce latency in other systems.
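As a rough illustration of what "dynamic" means here, the sketch below computes which phonemes a model could condition on at a given position: everything already seen, plus up to 10 future phonemes, limited to those that have actually arrived. The function name `visible_phonemes` and the list-based representation are assumptions for illustration; the actual Phoneme Transformer applies this idea inside the model rather than through list slicing.

```python
from typing import List

MAX_LOOKAHEAD = 10  # upper bound stated in the article


def visible_phonemes(received: List[str], position: int,
                     max_lookahead: int = MAX_LOOKAHEAD) -> List[str]:
    """Phonemes available as context when generating at `position`.

    Includes the past, the current phoneme, and up to `max_lookahead`
    future phonemes, limited to those that have already arrived.
    """
    if not 0 <= position < len(received):
        raise IndexError("position must index a received phoneme")
    end = min(len(received), position + 1 + max_lookahead)
    return received[:end]


if __name__ == "__main__":
    # Only one short word has arrived: use what is there, do not wait.
    first_word = ["HH", "AH", "L", "OW"]
    print(visible_phonemes(first_word, 0))

    # Plenty of text has arrived: the look-ahead is capped at 10.
    long_input = [f"p{i}" for i in range(20)]
    print(len(visible_phonemes(long_input, 0)))
```

A fixed look-ahead would instead block until `position + max_lookahead` phonemes were present, which is the waiting that the dynamic variant avoids.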

Architectural Overview: The Three-Transformer Pipeline

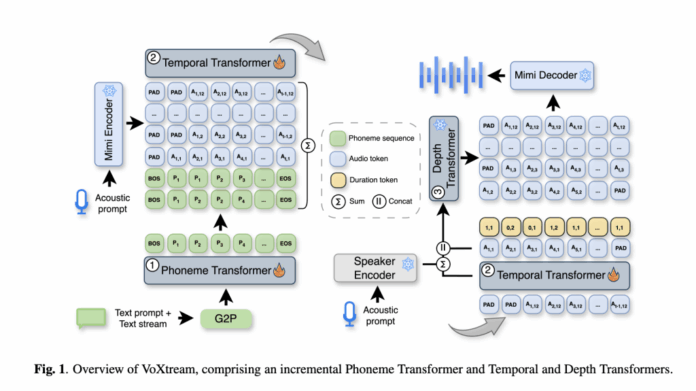

VoXtream’s architecture is a fully autoregressive (AR) pipeline composed of three specialized transformers working in sequence:

- Phoneme Transformer (PT): An incremental, decoder-only model that converts incoming words into phonemes with a flexible look-ahead of up to 10 phonemes, using g2pE for word-level phonemization.

- Temporal Transformer (TT): An AR predictor that generates Mimi codec semantic tokens along with duration tokens, which encode phoneme-to-audio alignment in a monotonic fashion. Mimi operates at 12.5 Hz, corresponding to 80 ms audio frames.

- Depth Transformer (DT): This AR generator produces the remaining acoustic codebooks of the Mimi codec, conditioned on TT outputs and a ReDimNet speaker embedding, enabling zero-shot voice adaptation. The Mimi decoder then reconstructs the waveform frame-by-frame, allowing continuous audio output.

The Mimi codec’s dual-stream tokenization and streaming design are well-established, with VoXtream leveraging the first codebook for semantic context and the others for high-fidelity audio reconstruction.
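The relationship between the 12.5 Hz frame rate and the 80 ms frame size is simple arithmetic, worked through below. The 24 kHz sample rate is taken from the training-data description in this article and is assumed here to be the output rate as well; the helper names are illustrative only.

```python
import math

FRAME_RATE_HZ = 12.5     # Mimi frame rate, from the article
SAMPLE_RATE_HZ = 24_000  # assumption: output uses the 24 kHz rate of the training audio


def frame_duration_ms(frame_rate_hz: float) -> float:
    """Duration of one codec frame in milliseconds."""
    return 1000.0 / frame_rate_hz


def samples_per_frame(sample_rate_hz: int, frame_rate_hz: float) -> int:
    """Number of waveform samples reconstructed per codec frame."""
    return int(round(sample_rate_hz / frame_rate_hz))


def frames_needed(duration_s: float, frame_rate_hz: float) -> int:
    """Whole frames required to cover `duration_s` seconds of audio."""
    return math.ceil(duration_s * frame_rate_hz)


if __name__ == "__main__":
    print(frame_duration_ms(FRAME_RATE_HZ))                  # 80.0
    print(samples_per_frame(SAMPLE_RATE_HZ, FRAME_RATE_HZ))  # 1920
    print(frames_needed(1.0, FRAME_RATE_HZ))                 # 13
```

Under these assumptions, each autoregressive step yields one 80 ms packet of 1,920 samples.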

Performance in Real-World Conditions

VoXtream’s efficiency is not just theoretical. Benchmarking on an NVIDIA A100 GPU shows a first-packet latency of 171 ms without compilation and 102 ms with PyTorch compilation, with real-time factors (RTF) of 1.00 and 0.17 respectively. On the more accessible RTX 3090, it records 205 ms FPL uncompiled and 123 ms compiled, with RTFs of 1.19 and 0.19. An RTF below 1 means audio is generated faster than it plays back, so the compiled model runs well ahead of real time on both GPUs, while the uncompiled model only just keeps pace on the A100 and falls slightly behind on the RTX 3090. For live applications, the compiled configuration is therefore the relevant one.
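To make the RTF figures concrete, the sketch below converts them into speed-up factors, using the common definition of RTF as synthesis time divided by audio duration. The numbers are the ones quoted in this section; the dictionary layout and function names are illustrative.

```python
# (GPU, mode) -> figures quoted in the article
BENCHMARKS = {
    ("A100", "uncompiled"): {"fpl_ms": 171, "rtf": 1.00},
    ("A100", "compiled"): {"fpl_ms": 102, "rtf": 0.17},
    ("RTX 3090", "uncompiled"): {"fpl_ms": 205, "rtf": 1.19},
    ("RTX 3090", "compiled"): {"fpl_ms": 123, "rtf": 0.19},
}


def speedup(rtf: float) -> float:
    """How many seconds of audio are produced per second of compute."""
    return 1.0 / rtf


def synthesis_time_s(audio_s: float, rtf: float) -> float:
    """Compute time needed to synthesize `audio_s` seconds of audio."""
    return audio_s * rtf


if __name__ == "__main__":
    for (gpu, mode), row in BENCHMARKS.items():
        status = "faster than" if row["rtf"] < 1.0 else "not faster than"
        print(f"{gpu:9s} {mode:10s} FPL={row['fpl_ms']} ms  "
              f"speed-up={speedup(row['rtf']):.2f}x  ({status} real time)")
```

With an RTF of 0.17, ten seconds of speech take about 1.7 seconds of compute, which is where the "over five times faster than real time" figure comes from.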

Comparative Advantages Over Existing Streaming TTS Models

When evaluated on the LibriSpeech-long dataset under full-stream conditions, where text input arrives incrementally, VoXtream outperforms popular models like CosyVoice2, achieving a lower word error rate (WER) of 3.24% compared to 6.11%. Listener studies show a strong preference for VoXtream’s naturalness (p ≤ 5e-10), although CosyVoice2 retains an edge in speaker similarity, which is attributed to its flow-matching decoder. VoXtream also reports the lowest first-packet latency among publicly available streaming TTS systems and, with compilation, runs over five times faster than real time.

Why Autoregressive Design Excels in Reducing Latency

Diffusion or flow-based vocoders generate audio in larger chunks, which introduces an inherent delay. VoXtream’s fully autoregressive, frame-synchronous pipeline (PT → TT → DT → Mimi decoder) instead produces the initial 80 ms audio packet after a single pass through the model stack, avoiding the multi-step sampling that typically increases latency. Prior approaches using non-autoregressive (NAR) flow-matching decoders, such as those in IST-LM and CosyVoice2, struggle to achieve similarly low first-packet latencies despite their high offline quality.

Training Data and Quality Assurance

VoXtream was trained on a carefully curated mid-scale dataset totaling approximately 9,000 hours, combining 4,500 hours from the Emilia corpus and 4,500 hours from the HiFiTTS-2 22 kHz subset. The dataset underwent rigorous preprocessing, including speaker diarization to exclude multi-speaker segments, transcript filtering via automatic speech recognition (ASR), and quality assessment using the NISQA metric to remove low-quality audio. All audio was resampled to 24 kHz. Detailed documentation covers the preprocessing pipeline, including Mimi tokenization, Montreal Forced Aligner (MFA) alignments, duration labeling, and speaker embedding templates.

Robustness of Quality Metrics Beyond Select Samples

Extensive evaluations on zero-shot TTS benchmarks such as SEED-TTS test-en and LibriSpeech test-clean confirm VoXtream’s competitive performance in WER, UTMOS (a MOS predictor), and speaker similarity. Ablation studies highlight that incorporating the CSM Depth Transformer and speaker encoder significantly enhances speaker similarity without compromising WER. Subjective listening tests employed MUSHRA-like protocols and specialized preference tests tailored for full-stream generation, reinforcing the model’s real-world applicability.

Positioning VoXtream in the Modern TTS Ecosystem

VoXtream advances streaming TTS by combining autoregressive modeling with a duration-token alignment strategy that supports true input-side streaming. Rather than relying on a massive model or a new codec, its main contribution is lower latency at competitive quality. For developers building live interactive agents, VoXtream offers a compelling trade-off: a slight reduction in speaker similarity in exchange for an order-of-magnitude decrease in first-packet latency compared to chunked non-autoregressive vocoders under full-stream conditions.