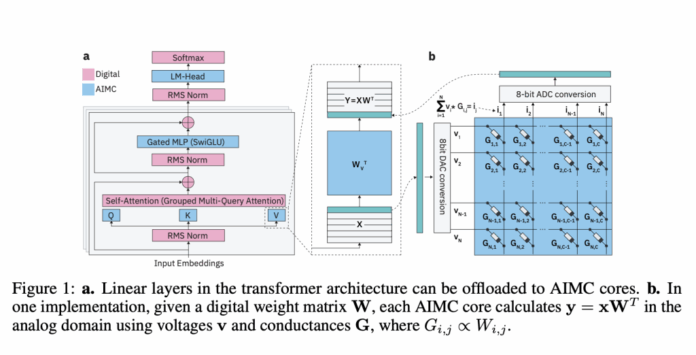

Researchers at IBM, in collaboration with ETH Zürich, have introduced Analog Foundation Models (AFMs), a class of large language models (LLMs) trained to run on Analog In-Memory Computing (AIMC) hardware. AIMC promises a large gain in computational efficiency: dense non-volatile memory (NVM) that merges storage and computation could let billion-parameter models run within a footprint small enough for embedded and edge devices. The main obstacle has been the noise inherent in analog computation. Executing matrix-vector multiplications directly within NVM arrays introduces non-deterministic errors that conventional models tolerate poorly.

The Significance of Analog Computing for Large Language Models

Traditional accelerators like GPUs and TPUs shuttle data between separate memory and processing units, a constraint known as the von Neumann bottleneck. AIMC avoids this data movement by performing matrix-vector multiplications directly inside the memory arrays, which improves throughput and energy efficiency. Recent work suggests that combining AIMC with 3D NVM technology and Mixture-of-Experts (MoE) architectures could, in principle, allow trillion-parameter models to run on compact, low-power accelerators. That would extend foundation-scale AI beyond centralized data centers to edge devices such as smartphones and IoT sensors.

Challenges in Deploying Analog In-Memory Computing

The primary obstacle to practical AIMC deployment is noise. Device-to-device variability, quantization in the digital-to-analog (DAC) and analog-to-digital (ADC) converters, and runtime fluctuations all introduce stochastic errors that degrade model accuracy. Digital quantization errors are deterministic, so they can be predicted and managed; analog noise is random, differs from one run to the next, and is difficult to compensate for. Earlier efforts successfully adapted smaller neural networks such as CNNs and RNNs (with fewer than 100 million parameters) to tolerate such noise, but scaling these techniques to billion-parameter LLMs has proven elusive.
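
To make the contrast with deterministic errors concrete, here is a minimal sketch of a matrix-vector product whose weights are perturbed by fresh Gaussian noise on every call. The Gaussian model, the `noise_std` scaling relative to the largest weight, and all function names are illustrative assumptions, not the noise model used by the researchers.

```python
import random

def matvec(weights, x):
    """Exact matrix-vector product on plain Python lists."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

def noisy_matvec(weights, x, noise_std, rng):
    """Matrix-vector product with Gaussian noise added to every weight.

    noise_std is expressed relative to the largest absolute weight.
    This is a simplified, assumed model of analog weight noise.
    """
    w_max = max(abs(w) for row in weights for w in row)
    noisy = [[w + rng.gauss(0.0, noise_std * w_max) for w in row]
             for row in weights]
    return matvec(noisy, x)

rng = random.Random(0)
W = [[rng.uniform(-1, 1) for _ in range(16)] for _ in range(4)]
x = [rng.uniform(-1, 1) for _ in range(16)]

exact = matvec(W, x)
run_a = noisy_matvec(W, x, noise_std=0.05, rng=rng)
run_b = noisy_matvec(W, x, noise_std=0.05, rng=rng)

# The same weights and input give a different result on every noisy run.
print("exact:", [round(v, 3) for v in exact])
print("run a:", [round(v, 3) for v in run_a])
print("run b:", [round(v, 3) for v in run_b])
```

Because each call samples new noise, the error cannot be calibrated away once and reused, which is why the model itself has to become robust to it.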

Innovative Solutions Offered by Analog Foundation Models

The IBM and ETH Zürich team developed Analog Foundation Models that incorporate hardware-aware training strategies to prepare LLMs for the noisy environment of analog execution. Their approach includes:

- Simulated noise injection during training to mimic AIMC hardware randomness.

- Iterative weight clipping to maintain weight distributions within the operational limits of analog devices.

- Learned static quantization ranges for inputs and outputs, tailored to real hardware constraints.

- Knowledge distillation from pre-trained LLMs using a synthetic dataset of 20 billion tokens.
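
The four ingredients above can be sketched for a single linear layer in plain Python. This is a toy illustration under stated assumptions, not the team's implementation: the function names, the 3-sigma clipping bound, the Gaussian noise model, and the fixed range values are all hypothetical, and in real training the quantization ranges would be learned parameters rather than constants.

```python
import math
import random
import statistics

def clip_weights(row, n_std=3.0):
    """Clip one weight row to +/- n_std standard deviations.
    Intended to be re-applied after every weight update (assumed bound)."""
    bound = n_std * statistics.pstdev(row)
    if bound == 0.0:
        return list(row)
    return [max(-bound, min(bound, w)) for w in row]

def quantize_static(value, max_abs, bits=8):
    """Symmetric uniform quantization onto a fixed range [-max_abs, max_abs]."""
    levels = 2 ** (bits - 1) - 1
    clipped = max(-max_abs, min(max_abs, value))
    return round(clipped / max_abs * levels) * max_abs / levels

def noisy_linear(weights, x, input_range, output_range, noise_std, rng):
    """Linear layer with quantized inputs and outputs and fresh weight noise."""
    x_q = [quantize_static(v, input_range) for v in x]
    out = []
    for row in weights:
        w_max = max(abs(w) for w in row)
        noisy = [w + rng.gauss(0.0, noise_std * w_max) for w in row]
        acc = sum(w * v for w, v in zip(noisy, x_q))
        out.append(quantize_static(acc, output_range))
    return out

def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits):
    """KL(teacher || student) between the two output distributions."""
    p, q = softmax(teacher_logits), softmax(student_logits)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)

rng = random.Random(0)
W = [clip_weights([rng.gauss(0.0, 1.0) for _ in range(8)]) for _ in range(3)]
x = [rng.uniform(-1, 1) for _ in range(8)]

# Teacher: noise-free outputs. Student: same weights under simulated noise.
teacher = [sum(w * v for w, v in zip(row, x)) for row in W]
student = noisy_linear(W, x, input_range=1.0, output_range=8.0,
                       noise_std=0.05, rng=rng)
loss = distillation_loss(teacher, student)
print("distillation loss under noise:", loss)
```

In an actual training loop this loss would be backpropagated through the noisy forward pass, so the weights settle into values that stay accurate when perturbed.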

Implemented via the AIHWKIT-Lightning framework, these techniques enable models such as Phi-3-mini-4k-instruct and Llama-3.2-1B-Instruct to achieve accuracy on par with baselines quantized to 4-bit weights and 8-bit activations, even under analog noise. Benchmark evaluations across reasoning and factual tasks show that AFMs outperform both quantization-aware training (QAT) and post-training quantization methods such as SpinQuant.

Versatility Beyond Analog Hardware

AFMs also perform well on low-precision digital hardware. Because they are trained to withstand noise and clipping, they tolerate simple post-training round-to-nearest (RTN) quantization better than existing approaches. This cross-compatibility broadens their applicability, making AFMs useful not only for AIMC accelerators but also for conventional digital inference platforms.
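
For readers unfamiliar with RTN, the following sketch shows the basic operation on one row of weights: scale by the largest magnitude, round each value to the nearest integer code, and scale back. The per-row symmetric scheme and the name `rtn_quantize` are assumptions chosen for illustration; the exact configuration used in the evaluation is not specified here.

```python
import random

def rtn_quantize(row, bits=4):
    """Round-to-nearest symmetric quantization of one weight row.

    Returns the dequantized weights and the scale that was used.
    """
    q_max = 2 ** (bits - 1) - 1          # 7 for 4-bit signed codes
    scale = max(abs(w) for w in row) / q_max
    if scale == 0.0:                      # all-zero row: nothing to quantize
        return list(row), scale
    codes = [max(-q_max, min(q_max, round(w / scale))) for w in row]
    return [c * scale for c in codes], scale

rng = random.Random(0)
row = [rng.gauss(0.0, 1.0) for _ in range(32)]
deq, scale = rtn_quantize(row, bits=4)

# Each weight moves by at most half a quantization step.
max_err = max(abs(a - b) for a, b in zip(row, deq))
print("step size:", scale, "max error:", max_err)
```

The rounding error behaves like a small bounded perturbation of each weight, which is one plausible reason a model trained to tolerate injected noise also copes with RTN.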

Enhancing Inference Through Compute Scaling

The team explored test-time compute scaling on the MATH-500 benchmark by generating multiple candidate answers per query and using a reward model to select the highest-scoring one. AFMs scaled better than QAT models, with accuracy differences narrowing as more inference compute was allocated. This fits AIMC’s strength, which is low-power, high-throughput inference rather than training.
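
The selection scheme described above is often called best-of-n sampling, and its core fits in a few lines. In this sketch the generator and reward function are toy stand-ins: `make_generator`, `toy_reward`, and `TRUE_ANSWER` are hypothetical names, and the toy reward peeks at the correct answer, which a real reward model cannot do.

```python
import random

def best_of_n(prompt, generate, reward, n):
    """Sample n candidate answers and return the one the reward scores highest."""
    candidates = [generate(prompt) for _ in range(n)]
    return max(candidates, key=lambda answer: reward(prompt, answer))

# Toy stand-ins (assumptions for illustration only).
TRUE_ANSWER = 42.0

def make_generator(seed, spread=10.0):
    """Return a 'model' that answers with a noisy guess around TRUE_ANSWER."""
    rng = random.Random(seed)
    return lambda prompt: TRUE_ANSWER + rng.gauss(0.0, spread)

def toy_reward(prompt, answer):
    """Score an answer by closeness to the truth (a real reward model is learned)."""
    return -abs(answer - TRUE_ANSWER)

prompt = "6 * 7 = ?"
single = best_of_n(prompt, make_generator(0), toy_reward, n=1)
many = best_of_n(prompt, make_generator(0), toy_reward, n=16)

print("n=1 error: ", abs(single - TRUE_ANSWER))
print("n=16 error:", abs(many - TRUE_ANSWER))
```

Each extra candidate costs one more inference pass, so the approach trades compute for accuracy, which suits hardware where inference is cheap.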

Implications for the Future of Analog In-Memory Computing

This research marks the first comprehensive demonstration that large-scale LLMs can be adapted to AIMC hardware without catastrophic accuracy loss. Training AFMs demands significant computational resources, and some reasoning benchmarks such as GSM8K still show performance gaps, but the findings are a substantial step forward. The combination of energy efficiency, noise resilience, and compatibility with digital hardware positions AFMs as a promising route for scaling foundation models beyond the constraints of traditional GPU-based systems.

Conclusion: Paving the Way for Energy-Efficient Edge AI

Analog Foundation Models are a significant step toward overcoming the limits that digital accelerators place on scaling LLMs. By training models to endure the unpredictable noise inherent in analog in-memory computing, this work moves AIMC from a theoretical concept toward a viable platform. Training cost and reasoning accuracy remain open challenges, but AFMs point to a clear path for deploying large-scale, energy-efficient AI models on compact hardware, bringing foundation models closer to real-world edge applications.