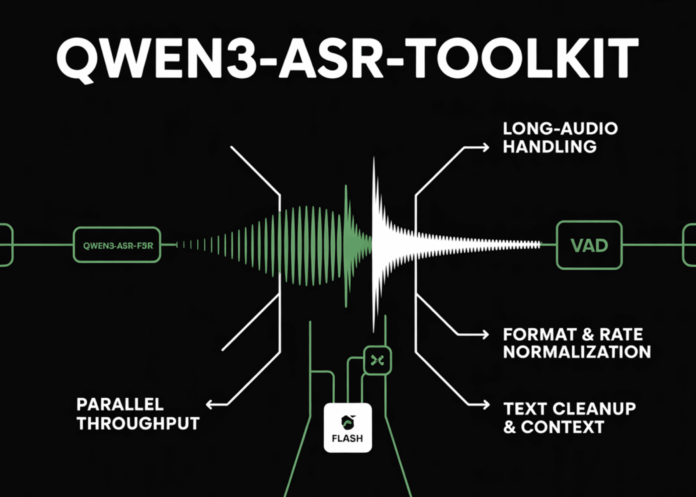

The Qwen3-ASR-Toolkit is an open-source Python command-line interface (CLI) released under the MIT license, designed to overcome the inherent limitations of the Qwen3-ASR-Flash API. This API restricts each transcription request to a maximum of 3 minutes or 10 MB of audio data. The toolkit intelligently segments audio using voice activity detection (VAD), executes multiple API calls in parallel, and automatically handles audio resampling and format normalization through FFmpeg. This combination enables robust, scalable transcription workflows capable of processing audio files lasting several hours, with customizable concurrency, context-aware transcription, and refined text output. The toolkit requires Python 3.8 or higher and can be installed via:

pip install qwen3-asr-toolkit

Enhancements Beyond the Base API

- Extended Audio Support: By leveraging VAD, the toolkit divides lengthy audio inputs at natural speech pauses, ensuring each segment complies with the API’s strict duration and size limits. The transcriptions of these segments are then seamlessly concatenated to maintain the original sequence.

- Concurrent Processing: Utilizing a thread pool, multiple audio chunks are transcribed simultaneously via DashScope endpoints, significantly reducing total processing time for long recordings. Users can adjust the number of parallel threads using the -j/--num-threads option.

- Audio Format Standardization: The toolkit supports a wide range of audio and video formats (including MP4, MOV, MKV, MP3, WAV, and M4A) and converts them to the mono, 16 kHz audio the API requires using FFmpeg, which must be installed and accessible in the system PATH.

- Advanced Text Refinement and Contextual Biasing: Post-processing routines minimize transcription errors such as repetitions and hallucinations. Additionally, users can inject domain-specific context to improve recognition accuracy for specialized vocabulary. The API also offers toggles for language detection and inverse text normalization (ITN).
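To make the segmentation idea concrete, here is a minimal sketch of how speech regions reported by a VAD could be grouped into chunks that stay under the 3-minute limit. It is not the toolkit's actual implementation: the function name plan_chunks, the (start, end) tuple format, and the hard-split fallback are assumptions made for illustration, and the 10 MB size limit is not checked here.

```python
from typing import List, Optional, Tuple

Segment = Tuple[float, float]  # (start_sec, end_sec) of one detected speech region


def plan_chunks(speech: List[Segment], max_len: float = 180.0) -> List[Segment]:
    """Group consecutive speech regions into chunks no longer than max_len seconds.

    Chunk boundaries fall only in the pauses between regions. A single region
    longer than max_len is cut at fixed intervals as a fallback (an assumption,
    not documented toolkit behavior).
    """
    chunks: List[Segment] = []
    cur_start: Optional[float] = None
    cur_end: Optional[float] = None

    for start, end in speech:
        # Fallback: hard-split a region that cannot fit in one request.
        while end - start > max_len:
            if cur_start is not None:
                chunks.append((cur_start, cur_end))
                cur_start = cur_end = None
            chunks.append((start, start + max_len))
            start += max_len

        if cur_start is None:
            cur_start, cur_end = start, end      # open a new chunk
        elif end - cur_start <= max_len:
            cur_end = end                        # region still fits, extend chunk
        else:
            chunks.append((cur_start, cur_end))  # close chunk at the pause
            cur_start, cur_end = start, end

    if cur_start is not None:
        chunks.append((cur_start, cur_end))
    return chunks


if __name__ == "__main__":
    regions = [(0, 100), (105, 170), (175, 300), (310, 400)]
    print(plan_chunks(regions))
```

If the chunks are stored as 16-bit PCM at mono 16 kHz, a 180-second chunk is about 5.76 MB (180 × 16,000 × 2 bytes), so the duration limit is the binding one under that assumption.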

The Qwen3-ASR-Flash API is optimized for short, interactive audio clips but is less suited to lengthy media files because of its strict per-request limits. This toolkit automates best practices, such as VAD-based segmentation and parallel API calls, so teams can transcribe large audio archives or continuous live streams without building complex orchestration systems from scratch.
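The parallel-call pattern described above can be sketched with Python's standard thread pool. This is an illustrative outline only: transcribe_all and fake_transcribe are hypothetical names, and the stand-in function replaces what would be a real DashScope request in practice.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def transcribe_all(
    chunks: List[str],
    transcribe: Callable[[str], str],
    num_threads: int = 4,
) -> str:
    """Transcribe chunks in parallel and join the texts in their original order."""
    with ThreadPoolExecutor(max_workers=num_threads) as pool:
        # Executor.map yields results in input order, even when later
        # chunks finish before earlier ones.
        texts = list(pool.map(transcribe, chunks))
    return " ".join(t.strip() for t in texts if t.strip())


def fake_transcribe(chunk_path: str) -> str:
    """Hypothetical stand-in for a real API request; returns a placeholder."""
    return f"[{chunk_path}]"


if __name__ == "__main__":
    print(transcribe_all(["a.wav", "b.wav", "c.wav"], fake_transcribe, num_threads=2))
```

Threads suit this workload because each call spends most of its time waiting on the network, so the work is I/O-bound rather than CPU-bound.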

Getting Started Quickly

- Install Required Dependencies

# Ensure FFmpeg is installed and accessible

# On macOS:

brew install ffmpeg

# On Ubuntu/Debian:

sudo apt update && sudo apt install -y ffmpeg

- Install the Toolkit

pip install qwen3-asr-toolkit

- Set Up API Credentials

# Export your DashScope API key as an environment variable

export DASHSCOPE_API_KEY="sk-..."

- Run the Transcription

# Basic usage with a local video file and default 4 threads

qwen3-asr -i "/path/to/lecture.mp4"

# Increase concurrency and specify API key explicitly (optional if env var is set)

qwen3-asr -i "/path/to/podcast.wav" -j 8 -key "sk-..."

# Enhance transcription accuracy with domain-specific context

qwen3-asr -i "/path/to/earnings_call.m4a" -c "company tickers, CFO name, product lines, Q3 revenue forecast"

Key command-line options include:

-i/--input-file (local file path or HTTP/HTTPS URL), -j/--num-threads (concurrency level), -c/--context (contextual keywords), -key/--dashscope-api-key (API key), -t/--tmp-dir (temporary directory), and -s/--silence (silence threshold). Transcription output is displayed on the console and saved as <input_basename>.txt.
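For batch jobs, the CLI can be driven from a script. The sketch below assembles the argument list from the options listed above and runs it with subprocess. The helper names build_command and run_transcription are hypothetical, and the API key is deliberately left out of the arguments on the assumption that DASHSCOPE_API_KEY is already exported.

```python
import subprocess
from typing import List, Optional


def build_command(
    input_file: str,
    num_threads: Optional[int] = None,
    context: Optional[str] = None,
    tmp_dir: Optional[str] = None,
) -> List[str]:
    """Build the qwen3-asr argument list from the options documented above."""
    cmd = ["qwen3-asr", "-i", input_file]
    if num_threads is not None:
        cmd += ["-j", str(num_threads)]
    if context:
        cmd += ["-c", context]
    if tmp_dir:
        cmd += ["-t", tmp_dir]
    return cmd


def run_transcription(input_file: str, **options) -> int:
    """Run the CLI and return its exit code (requires the toolkit to be installed)."""
    return subprocess.run(build_command(input_file, **options), check=False).returncode


if __name__ == "__main__":
    print(build_command("/path/to/podcast.wav", num_threads=8))
```

Passing the arguments as a list avoids shell quoting problems with paths or context strings that contain spaces.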

Core Pipeline Workflow

- Load audio from a local file or URL.

- Apply voice activity detection to identify silent segments.

- Split audio into chunks that respect API duration and size limits.

- Resample audio to mono 16 kHz format using FFmpeg.

- Submit chunks concurrently to DashScope transcription endpoints.

- Reassemble transcribed segments in the correct order.

- Perform text post-processing to remove duplicates and reduce errors.

- Output the final transcript as a text file.
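The resampling step in the list above corresponds to a standard FFmpeg invocation. The following sketch shows one way to produce mono 16 kHz audio from Python. The function names are hypothetical and the toolkit's own FFmpeg arguments may differ; the flags used here (-vn to drop video, -ac 1 for mono, -ar 16000 for the sample rate, -y to overwrite) are ordinary FFmpeg options.

```python
import shutil
import subprocess
from typing import List


def ffmpeg_normalize_cmd(src: str, dst: str) -> List[str]:
    """Return an FFmpeg command that converts src to mono 16 kHz audio at dst."""
    return ["ffmpeg", "-y", "-i", src, "-vn", "-ac", "1", "-ar", "16000", dst]


def normalize(src: str, dst: str) -> None:
    """Run the conversion, failing early if FFmpeg is not on the PATH."""
    if shutil.which("ffmpeg") is None:
        raise RuntimeError("ffmpeg not found on PATH")
    subprocess.run(ffmpeg_normalize_cmd(src, dst), check=True)


if __name__ == "__main__":
    print(" ".join(ffmpeg_normalize_cmd("lecture.mp4", "lecture_16k.wav")))
```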

Conclusion

The Qwen3-ASR-Toolkit transforms the Qwen3-ASR-Flash API into a scalable, production-ready transcription solution for long-form audio. By integrating VAD-based segmentation, FFmpeg-driven audio normalization, and parallel API requests, it works around the API’s strict per-request limits while offering configurable throughput and enhanced transcription accuracy through context and language controls. For deployment, it is recommended to pin the package version, verify API endpoint regions and keys, and tune the thread count based on network conditions and queries-per-second (QPS) requirements. To get started, install with pip install qwen3-asr-toolkit and integrate it into your transcription workflows.