In production environments, the success of intelligent agents hinges more on robust data infrastructure, governance, and observability than on the choice of the underlying model. The following overview breaks down the essential components of a document-to-chat system and explains their significance.

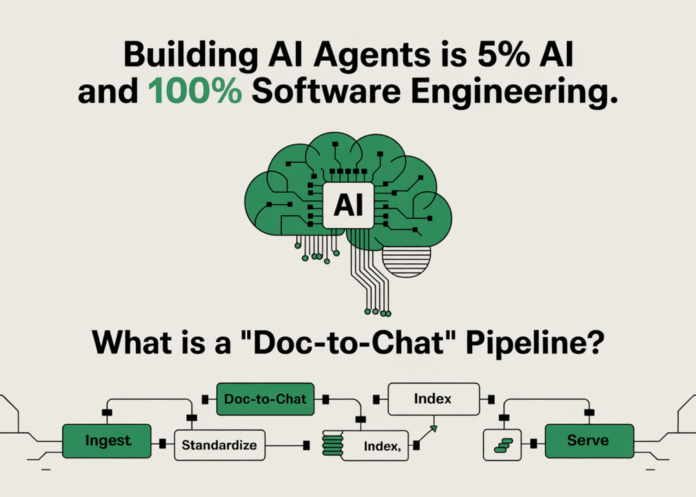

Understanding the Document-to-Chat Pipeline

A document-to-chat pipeline ingests, normalizes, and governs enterprise documents; indexes their embeddings alongside relational metadata; and delivers retrieval and generation services through secure APIs, often with human-in-the-loop (HITL) checkpoints. This architecture underpins question-answering agents, digital assistants, and automated workflows that must adhere strictly to access controls and remain auditable. Most real-world implementations are extended forms of retrieval-augmented generation (RAG), hardened with large language model (LLM) safety measures, governance protocols, and observability built on standards such as OpenTelemetry.

Seamless Integration with Existing Enterprise Systems

To ensure smooth interoperability, adopt standard communication protocols such as REST/JSON or gRPC on top of the trusted storage systems your organization already uses. For tabular data, Apache Iceberg offers ACID transactions, schema and partition evolution, and snapshot isolation, which are indispensable for consistent retrieval and backfill operations. For vector embeddings, prefer stores that can combine similarity search with SQL filters. For example, pgvector stores vectors in PostgreSQL alongside business keys and access-control tags, enabling precise, policy-aware queries. Specialized vector databases such as Milvus, by contrast, excel at high-throughput approximate nearest neighbor (ANN) search with disaggregated, independently scalable storage and compute. Many enterprises adopt a hybrid approach, using SQL+pgvector for transactional operations and Milvus for intensive retrieval workloads.

- Apache Iceberg: Provides ACID transactions, hidden partitioning, and snapshot isolation with broad vendor support across data warehouses.

- pgvector: Combines SQL querying with vector similarity search in a single query plan, facilitating accurate joins and enforcing access policies.

- Milvus: Features a layered, horizontally scalable architecture optimized for large-scale similarity searches.
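Snapshot isolation is the property that makes consistent retrieval and backfills possible. The sketch below is conceptual only: it models the idea in memory and does not use Iceberg's API or metadata format. In it, each commit produces a new immutable snapshot. A reader pinned to a snapshot ID, such as an embedding job, keeps seeing the same rows even after later commits. `SnapshotTable`, `commit`, and `scan` are hypothetical names.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class SnapshotTable:
    """Toy append-only table where every commit creates a new immutable snapshot.

    Hypothetical illustration of snapshot isolation; not Apache Iceberg's API.
    """
    snapshots: list[tuple[dict, ...]] = field(default_factory=lambda: [()])

    @property
    def current_snapshot_id(self) -> int:
        return len(self.snapshots) - 1

    def commit(self, new_rows: list[dict]) -> int:
        # Copy-on-write: earlier snapshots are never modified.
        latest = self.snapshots[-1]
        self.snapshots.append(latest + tuple(dict(r) for r in new_rows))
        return self.current_snapshot_id

    def scan(self, snapshot_id: int | None = None) -> list[dict]:
        # A reader pinned to an older snapshot never sees later commits.
        sid = self.current_snapshot_id if snapshot_id is None else snapshot_id
        return [dict(r) for r in self.snapshots[sid]]


table = SnapshotTable()
pinned = table.commit([{"doc_id": "a", "text": "refund policy v1"}])
# e.g. an embedding job records `pinned` as the snapshot it indexed
table.commit([{"doc_id": "b", "text": "shipping policy"}])

print(len(table.scan(pinned)))  # 1
print(len(table.scan()))        # 2
```

Recording the snapshot ID next to each index build is what lets a later re-index reproduce exactly the same input.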

Coordinating Agents, Humans, and Workflows on a Unified Knowledge Platform

In production, intelligent agents must include explicit collaboration points where human reviewers can validate, correct, or escalate outputs. Managed HITL services such as AWS A2I (Amazon Augmented AI) offer private-workforce integration and customizable review workflows, serving as a blueprint for gating uncertain model responses. Frameworks such as LangGraph build these human approval steps directly into agent workflows. They treat them as first-class nodes in the agent graph, where execution pauses for human input, rather than as external callbacks. This approach is essential for controlling consequential actions such as publishing summaries, creating support tickets, or committing code changes.

Typical workflow pattern: LLM output → confidence and guardrail validation → HITL approval → downstream side effects. Every artifact, including prompts, retrieved documents, and decisions, should be logged for auditability and reproducibility.
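The pattern above can be sketched as a small gate, under stated assumptions. Each draft carries a confidence score. The guardrail verdict is computed elsewhere. Low-confidence drafts are parked until a reviewer decides. Every decision is appended to an audit log as JSON lines. `Draft`, `ReviewGate`, `CONFIDENCE_THRESHOLD`, and the decision labels are hypothetical. In a real deployment the queue would be a managed service such as AWS A2I or a LangGraph interrupt, and the log a durable store.

```python
from __future__ import annotations

import json
import time
from dataclasses import dataclass, field

# Hypothetical cutoff; in practice it would be calibrated on labeled traffic.
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class Draft:
    """One LLM output plus the artifacts needed to audit it."""
    request_id: str
    prompt: str
    retrieved_ids: list[str]
    output: str
    confidence: float


@dataclass
class ReviewGate:
    review_queue: list[Draft] = field(default_factory=list)
    audit_log: list[str] = field(default_factory=list)

    def _audit(self, draft: Draft, decision: str) -> None:
        # Append-only JSON lines: prompt, context IDs, output, and decision.
        self.audit_log.append(json.dumps({
            "ts": time.time(),
            "request_id": draft.request_id,
            "prompt": draft.prompt,
            "retrieved_ids": draft.retrieved_ids,
            "output": draft.output,
            "confidence": draft.confidence,
            "decision": decision,
        }))

    def route(self, draft: Draft, guardrails_passed: bool) -> str:
        """Guardrails first, then confidence; only approved drafts may cause side effects."""
        if not guardrails_passed:
            decision = "blocked"
        elif draft.confidence < CONFIDENCE_THRESHOLD:
            self.review_queue.append(draft)  # parked until a human decides
            decision = "pending_review"
        else:
            decision = "auto_approved"
        self._audit(draft, decision)
        return decision

    def human_decision(self, request_id: str, approved: bool) -> Draft | None:
        """Record a reviewer's verdict; return the draft only if it was approved."""
        for i, draft in enumerate(self.review_queue):
            if draft.request_id == request_id:
                self.review_queue.pop(i)
                self._audit(draft, "human_approved" if approved else "human_rejected")
                return draft if approved else None
        raise KeyError(f"no pending draft {request_id!r}")


gate = ReviewGate()
draft = Draft("r1", "Summarize Q3 incidents", ["doc-7"], "Three outages...", 0.55)
print(gate.route(draft, guardrails_passed=True))          # pending_review
print(gate.human_decision("r1", approved=True) is draft)  # True
print(len(gate.audit_log))                                # 2
```

Checking guardrails before confidence means a confidently unsafe answer is never offered to a reviewer as a candidate for approval.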

Ensuring Reliability Before Model Interaction

Reliability must be enforced through multiple defensive layers:

- Content and language guardrails: Inputs are validated before they reach the model, and outputs before they reach users, for compliance with safety and policy requirements. Options range from managed services such as Amazon Bedrock Guardrails to open-source tools such as NeMo Guardrails and Llama Guard, each with distinct trade-offs documented in independent analyses.

- PII detection and redaction: Sensitive information is identified and masked both in source documents and in model inputs and outputs. Tools such as Microsoft Presidio provide robust recognizers and masking capabilities. Because pattern- and model-based detection can miss entities, they should be combined with additional safeguards.

- Access control and data lineage: Enforce fine-grained row- and column-level access controls and maintain audit trails across data catalogs (e.g., Unity Catalog) to ensure retrieval respects permissions. Unifying lineage and access policies across environments is critical.

- Retrieval quality assurance: Monitor RAG performance with evaluation metrics such as faithfulness and context precision/recall (for example, via Ragas), and block or deprioritize low-quality contexts. Faithfulness can be scored without reference answers, whereas context recall requires them.
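As a minimal sketch of these layers applied to retrieved chunks before they reach the model, assume each chunk carries a set of ACL tags and a relevance score. Under an assumed policy, the user must hold every tag on a chunk. The regex patterns are deliberately simplistic stand-ins for a real detector; Presidio's API is not used here. `Chunk`, `prepare_context`, and `MIN_SCORE` are hypothetical.

```python
from __future__ import annotations

import re
from dataclasses import dataclass

# Simplistic, hypothetical patterns; a production system would use a dedicated
# detector (e.g. Presidio) plus additional safeguards.
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}
MIN_SCORE = 0.5  # hypothetical relevance cutoff


@dataclass
class Chunk:
    text: str
    acl_tags: frozenset[str]
    score: float


def redact(text: str) -> str:
    # Replace each detected entity with a placeholder naming its type.
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"<{label}>", text)
    return text


def prepare_context(chunks: list[Chunk], user_groups: set[str]) -> list[str]:
    safe = []
    for chunk in chunks:
        # Access control (assumed policy): the user must hold every tag on the chunk.
        if not chunk.acl_tags <= user_groups:
            continue
        # Retrieval quality: drop weakly relevant context.
        if chunk.score < MIN_SCORE:
            continue
        # PII: mask before the text is sent to the model.
        safe.append(redact(chunk.text))
    return safe


chunks = [
    Chunk("Contact jane.doe@example.com for refunds.", frozenset({"support"}), 0.9),
    Chunk("Payroll SSN 123-45-6789", frozenset({"hr"}), 0.95),
    Chunk("Unrelated cafeteria menu", frozenset({"support"}), 0.2),
]
print(prepare_context(chunks, user_groups={"support"}))
# ['Contact <EMAIL> for refunds.']
```

Running the cheap permission check first avoids spending redaction work on text the user may never see.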

Scaling Indexing and Retrieval for High-Demand Environments

Two primary factors influence scalability: data ingestion throughput and query concurrency.

- Data ingestion: Normalize data at the lakehouse boundary and write it to Iceberg tables to obtain versioned snapshots. Generate embeddings asynchronously from a pinned snapshot, so that re-indexing is deterministic and the index reflects a consistent point in time.

- Vector search serving: Milvus’s architecture separates storage and compute, supporting horizontal scaling and fault isolation. Choosing among index types such as HNSW, IVF, and FLAT, combined with replicas, helps balance recall and latency.

- SQL and vector integration: Perform business-logic joins and filters server-side with pgvector, for example `WHERE tenant_id = ? AND acl_tag @> ... ORDER BY embedding <-> :query LIMIT k`. This reduces network overhead and enforces access policies inside the database.

- Chunking and embedding optimization: Segment documents with tuned chunk sizes, overlaps, and semantic boundaries. Poor chunking is a common cause of retrieval failures.
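To make chunk size, overlap, and semantic boundaries concrete, here is a minimal chunker that packs whole sentences up to a word budget. It carries the last sentence or sentences into the next chunk as overlap. The regex sentence splitter is naive. `max_words` and `overlap_sentences` are hypothetical knobs to tune, and many production systems count model tokens rather than words.

```python
from __future__ import annotations

import re


def split_sentences(text: str) -> list[str]:
    # Naive splitter: break after ., ! or ? followed by whitespace.
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def chunk_by_sentences(text: str, max_words: int = 120, overlap_sentences: int = 1) -> list[str]:
    """Pack whole sentences into chunks of at most max_words words (a single
    over-long sentence still becomes its own chunk), repeating the trailing
    overlap_sentences of each chunk at the start of the next."""
    chunks: list[str] = []
    current: list[str] = []
    for sentence in split_sentences(text):
        n_words = len(sentence.split())
        current_words = sum(len(s.split()) for s in current)
        if current and current_words + n_words > max_words:
            chunks.append(" ".join(current))
            tail = current[-overlap_sentences:] if overlap_sentences > 0 else []
            # Keep the overlap only if it still leaves room for the new sentence.
            if sum(len(s.split()) for s in tail) + n_words > max_words:
                tail = []
            current = tail
        current.append(sentence)
    if current:
        chunks.append(" ".join(current))
    return chunks


text = ("Refunds take 5 days. Contact support first. "
        "Keep your receipt. Shipping is free over $50.")
for chunk in chunk_by_sentences(text, max_words=8):
    print(chunk)
```

Packing whole sentences keeps each chunk semantically complete. The overlap means a fact that straddles a chunk boundary is still retrievable from at least one chunk.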

For mixed structured and unstructured data, hybrid retrieval techniques combining BM25, ANN, and reranking are recommended. Storing structured metadata alongside vectors enables efficient filtering and ranking during queries.
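The following pure-Python sketch shows one common way to combine these signals. It assumes each document stores a `tenant_id` alongside its embedding. The metadata filter runs first, then a lexical ranking with the Okapi BM25 formula (common defaults k1=1.5, b=0.75) and a cosine-similarity ranking. The two rankings are merged with reciprocal rank fusion (RRF, k=60), which is one of several possible fusion choices. The toy two-dimensional embeddings and all names are hypothetical. A reranking stage, for example a cross-encoder that re-scores the fused top candidates, is omitted.

```python
from __future__ import annotations

import math
from collections import Counter
from dataclasses import dataclass


@dataclass
class Doc:
    doc_id: str
    text: str
    embedding: list[float]
    tenant_id: str  # structured metadata stored next to the vector


def bm25_scores(query: str, docs: list[Doc], k1: float = 1.5, b: float = 0.75) -> dict[str, float]:
    tokenized = {d.doc_id: d.text.lower().split() for d in docs}
    n_docs = len(docs)
    avgdl = sum(len(t) for t in tokenized.values()) / n_docs
    df = Counter(term for toks in tokenized.values() for term in set(toks))
    scores = {}
    for doc_id, toks in tokenized.items():
        tf = Counter(toks)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1)
            denom = tf[term] + k1 * (1 - b + b * len(toks) / avgdl)
            score += idf * tf[term] * (k1 + 1) / denom
        scores[doc_id] = score
    return scores


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rrf(rankings: list[list[str]], k: int = 60) -> list[str]:
    # Each list contributes 1 / (k + rank) for every document it ranks.
    fused: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] = fused.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(fused, key=fused.get, reverse=True)


def hybrid_search(query: str, query_vec: list[float], docs: list[Doc],
                  tenant_id: str, top_k: int = 3) -> list[str]:
    # Filter on metadata first, so other tenants' documents are never ranked.
    allowed = [d for d in docs if d.tenant_id == tenant_id]
    if not allowed:
        return []
    lexical = bm25_scores(query, allowed)
    by_bm25 = [i for i in sorted(lexical, key=lexical.get, reverse=True) if lexical[i] > 0]
    sims = {d.doc_id: cosine(query_vec, d.embedding) for d in allowed}
    by_vector = sorted(sims, key=sims.get, reverse=True)
    return rrf([by_bm25, by_vector])[:top_k]


docs = [
    Doc("d1", "refund policy for annual plans", [0.9, 0.1], "acme"),
    Doc("d2", "shipping times and carriers", [0.1, 0.9], "acme"),
    Doc("d3", "refund policy for enterprise", [0.8, 0.2], "globex"),
    Doc("d4", "how to request money back", [0.85, 0.15], "acme"),
]
print(hybrid_search("refund policy", [1.0, 0.0], docs, tenant_id="acme"))
# ['d1', 'd4', 'd2']
```

Here `d4` has no keyword overlap with the query but still outranks `d2` through the vector signal. `d3` never appears because it belongs to another tenant.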

Advanced Monitoring Beyond Traditional Logging

Effective observability requires integrating distributed tracing, metrics, and continuous evaluation:

- Distributed tracing: Generate OpenTelemetry spans throughout ingestion, retrieval, model inference, and auxiliary tools. Platforms like LangSmith natively consume OTEL traces and integrate with external APMs such as Jaeger, Datadog, and Elastic, providing comprehensive insights into latency, prompt content, context, and cost per request.

- LLM observability platforms: Evaluate solutions such as LangSmith, Arize Phoenix, Langfuse, and Datadog on their tracing capabilities, evaluation tools, cost monitoring, and enterprise readiness. Independent reviews and comparison matrices are available to guide selection.

- Continuous evaluation: Automate RAG performance assessments using frameworks like Ragas, DeepEval, or MLflow on canary datasets and live traffic replays to detect drift in faithfulness and grounding over time.
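For a feel of what such an evaluation computes, here is a simplified, label-based take on context precision. It follows the average-precision-style formulation that Ragas documents: precision@k is averaged over the positions holding relevant chunks. Ragas obtains the relevance judgments from an LLM, whereas here they are given as booleans. `detect_drift` and its `tolerance` are hypothetical. A real job would use a larger canary set and a statistically sound comparison.

```python
from __future__ import annotations

from statistics import mean


def context_precision(relevance: list[bool]) -> float:
    """Average of precision@k over the ranks k that hold a relevant chunk.

    relevance[i] is True if the (i+1)-th retrieved chunk was judged relevant.
    Returns 0.0 when nothing relevant was retrieved.
    """
    hits = 0
    total = 0.0
    for k, relevant in enumerate(relevance, start=1):
        if relevant:
            hits += 1
            total += hits / k  # precision@k, counted only at relevant positions
    return total / hits if hits else 0.0


def detect_drift(baseline: list[float], current: list[float], tolerance: float = 0.05) -> bool:
    """Flag drift when the mean score drops by more than `tolerance`."""
    return mean(baseline) - mean(current) > tolerance


# Relevance labels for the same canary questions, before and after a change.
baseline = [context_precision(r) for r in [[True, True, False], [True, False, False]]]
current = [context_precision(r) for r in [[False, True, False], [False, False, True]]]
print(baseline, detect_drift(baseline, current))  # [1.0, 1.0] True
```

The metric rewards rankings that place relevant chunks early, so it drops even when the same relevant chunk is still retrieved but slides down the list.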

Ingest-time schema profiling and mapping are essential for tracking changes in data structure (such as new document templates or table schema evolution) and for diagnosing retrieval regressions caused by upstream data shifts.
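As a minimal sketch of ingest-time profiling, the code below infers a field-to-type mapping from an incoming batch and diffs it against a previously registered schema. It assumes records arrive as Python dicts and the registered schema is stored as field names mapped to type names. `infer_schema` and `diff_schema` are hypothetical helpers. A real system would compare against the catalog's table schema and track richer profiles such as null rates and value distributions.

```python
from __future__ import annotations


def infer_schema(records: list[dict]) -> dict[str, str]:
    """Map each field to the Python type names observed for it, joined with '|'."""
    seen: dict[str, set[str]] = {}
    for rec in records:
        for key, value in rec.items():
            seen.setdefault(key, set()).add(type(value).__name__)
    return {k: "|".join(sorted(v)) for k, v in seen.items()}


def diff_schema(registered: dict[str, str], observed: dict[str, str]) -> dict[str, list[str]]:
    """Report fields that appeared, disappeared, or changed type."""
    return {
        "added": sorted(observed.keys() - registered.keys()),
        "removed": sorted(registered.keys() - observed.keys()),
        "type_changed": sorted(
            k for k in registered.keys() & observed.keys() if registered[k] != observed[k]
        ),
    }


registered = {"doc_id": "str", "title": "str", "page_count": "int"}
batch = [{"doc_id": "a1", "title": "Q3 report", "page_count": "12", "template": "v2"}]
print(diff_schema(registered, infer_schema(batch)))
# {'added': ['template'], 'removed': [], 'type_changed': ['page_count']}
```

A `page_count` that silently turns into a string, or a new `template` field, is exactly the kind of upstream shift that later shows up as a retrieval regression. Flagging it at ingest time makes that regression easier to trace.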

Illustrative Example: Document-to-Chat Workflow with Signals and Controls

- Data ingestion: Connectors extract text → normalize content → write to Iceberg tables with ACID guarantees and snapshot support.

- Governance: Scan for PII using tools like Presidio → redact or mask sensitive data → register documents in catalogs with access control policies.

- Indexing: Generate embeddings → store in pgvector for policy-aware joins and Milvus for high-throughput ANN search.

- Serving: Expose APIs via REST/gRPC → perform hybrid retrieval → apply guardrails → invoke LLMs and auxiliary tools.

- Human-in-the-loop: Route low-confidence outputs for human approval, through a managed service such as AWS A2I or an approval step built into a LangGraph workflow.

- Observability: Collect OpenTelemetry traces → ingest into LangSmith or APMs → run scheduled RAG evaluations.
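The workflow above can be compressed into a toy, single-process sketch. Every stage is a trivial stand-in:

- Ingestion normalizes whitespace.
- Governance masks phone-number-like strings with a regex.
- Indexing builds term sets instead of embeddings.
- Serving picks the document with the most term overlap and uses that overlap as a "confidence" score.

Each stage is wrapped in a span-like record that shares one trace ID, so per-stage latency and attributes can be inspected afterwards. `Tracer`, `SpanRecord`, `run_pipeline`, and `REVIEW_THRESHOLD` are hypothetical. This is not the OpenTelemetry SDK; in production these records would be OpenTelemetry spans exported to LangSmith or an APM.

```python
from __future__ import annotations

import re
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass, field


@dataclass
class SpanRecord:
    trace_id: str
    name: str
    duration_ms: float
    attributes: dict


@dataclass
class Tracer:
    """Toy span recorder; not the OpenTelemetry SDK."""
    spans: list[SpanRecord] = field(default_factory=list)

    @contextmanager
    def span(self, trace_id: str, name: str):
        attributes: dict = {}
        start = time.perf_counter()
        try:
            yield attributes  # the stage can attach attributes while it runs
        finally:
            elapsed_ms = (time.perf_counter() - start) * 1000
            self.spans.append(SpanRecord(trace_id, name, elapsed_ms, attributes))


REVIEW_THRESHOLD = 0.5  # hypothetical HITL gate


def run_pipeline(raw_docs: list[str], question: str, tracer: Tracer) -> str:
    trace_id = uuid.uuid4().hex  # one trace ID shared by every stage
    with tracer.span(trace_id, "ingest") as attrs:
        docs = [" ".join(d.split()) for d in raw_docs]  # normalize whitespace
        attrs["doc_count"] = len(docs)
    with tracer.span(trace_id, "govern"):
        docs = [re.sub(r"\b\d{3}-\d{4}\b", "<PHONE>", d) for d in docs]  # stand-in PII mask
    with tracer.span(trace_id, "index"):
        index = [(d, set(d.lower().split())) for d in docs]  # term sets stand in for embeddings
    with tracer.span(trace_id, "serve") as attrs:
        q_terms = set(question.lower().split())
        best_doc, best_terms = max(index, key=lambda item: len(q_terms & item[1]))
        confidence = len(q_terms & best_terms) / len(q_terms)
        attrs["confidence"] = round(confidence, 2)
    with tracer.span(trace_id, "hitl") as attrs:
        needs_review = confidence < REVIEW_THRESHOLD
        attrs["needs_review"] = needs_review
    return "PENDING_REVIEW" if needs_review else best_doc


tracer = Tracer()
raw = ["Refunds   are processed in 5 days.  Call 555-0100.", "Shipping is free over 50 dollars."]
print(run_pipeline(raw, "how are refunds processed", tracer))
# Refunds are processed in 5 days. Call <PHONE>.
print([s.name for s in tracer.spans])
# ['ingest', 'govern', 'index', 'serve', 'hitl']
```

Because the span is recorded in a `finally` block, a stage that raises still leaves a timing record. That is usually what you want when diagnosing a failed request.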

Why “5% AI, 100% Software Engineering” Reflects Reality

Failures and trust issues in agent systems rarely stem from the underlying model’s performance. Instead, they are predominantly caused by problems with data quality, permission enforcement, retrieval degradation, or insufficient telemetry. The foundational elements (ACID-compliant tables, access-control catalogs, PII guardrails, hybrid retrieval strategies, comprehensive tracing, and human oversight) are what ensure that a given base model operates safely, efficiently, and reliably for end users. Prioritize these engineering controls before considering model swaps.