Voice-based artificial intelligence is rapidly emerging as a key area of multimodal AI, changing how machines interpret and respond to human audio. From smart assistants to conversational agents, the ability to understand and reason about sound is reshaping human-computer interaction. Model capabilities have advanced quickly, but evaluation methods have lagged behind. They are often fragmented, inefficient, and narrowly scoped, which makes it hard to compare models fairly or to test them realistically in dynamic, multi-turn conversations.

Introducing AU-Harness: A Comprehensive Audio Model Evaluation Toolkit

To close this evaluation gap, researchers from UT Austin and ServiceNow have developed AU-Harness, an open-source framework for rigorously assessing Large Audio Language Models (LALMs) at scale. AU-Harness offers a unified, fast, and extensible platform that supports a broad spectrum of audio tasks, from basic speech transcription to complex audio-based reasoning, so researchers can benchmark models efficiently within a single environment.

Limitations of Existing Audio Evaluation Frameworks

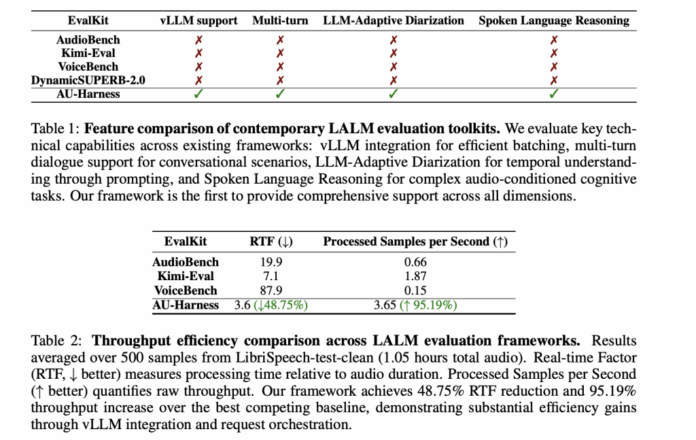

Traditional audio benchmarks have mostly focused on isolated tasks such as speech-to-text conversion or emotion detection. Although initiatives like AudioBench, VoiceBench, and DynamicSUPERB-2.0 have broadened the scope, they still fall short in several key areas:

- Performance Bottlenecks: Many current toolkits lack support for batching and parallel processing, resulting in slow, resource-intensive evaluations that hinder large-scale experimentation.

- Inconsistent Prompting: Variability in how prompts are structured across models leads to unreliable comparisons and ambiguous benchmarking outcomes.

- Limited Task Diversity: Essential capabilities such as speaker diarization (identifying who spoke when) and spoken language reasoning (executing instructions conveyed via audio) are often overlooked.

These shortcomings restrict the advancement of LALMs, especially as they evolve into sophisticated multimodal agents capable of managing extended, context-rich dialogues.

Enhancing Evaluation Speed and Scalability with AU-Harness

AU-Harness is built for efficiency. It runs on the vLLM inference engine and uses a token-based request scheduler to coordinate concurrent evaluation requests across distributed compute nodes. It also shards datasets so that the workload is spread evenly across the available hardware.
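To make the two ideas concrete, here is a minimal sketch of token-budget batching and capacity-proportional sharding. It is not AU-Harness's scheduler: the `Request` type, the `shard_dataset` and `schedule_batches` helpers, and the idea of measuring node capacity in tokens are all hypothetical assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Request:
    """One evaluation request (hypothetical structure, for illustration only)."""
    request_id: int
    prompt_tokens: int
    max_new_tokens: int

    @property
    def token_cost(self) -> int:
        # Worst-case tokens this request can occupy: prompt plus generation limit.
        return self.prompt_tokens + self.max_new_tokens


def shard_dataset(requests: List[Request], capacities: List[int]) -> List[List[Request]]:
    """Assign requests to nodes so each node's load stays proportional to its capacity."""
    shards: List[List[Request]] = [[] for _ in capacities]
    loads = [0] * len(capacities)
    # Largest-first greedy: place each request where the resulting relative load is smallest.
    for req in sorted(requests, key=lambda r: r.token_cost, reverse=True):
        idx = min(
            range(len(capacities)),
            key=lambda i: (loads[i] + req.token_cost) / capacities[i],
        )
        shards[idx].append(req)
        loads[idx] += req.token_cost
    return shards


def schedule_batches(requests: List[Request], token_budget: int) -> List[List[Request]]:
    """Group requests into concurrent batches whose summed token cost fits the budget.

    A single request larger than the budget is placed in a batch of its own.
    """
    batches: List[List[Request]] = []
    current: List[Request] = []
    used = 0
    for req in requests:
        if current and used + req.token_cost > token_budget:
            batches.append(current)
            current, used = [], 0
        current.append(req)
        used += req.token_cost
    if current:
        batches.append(current)
    return batches
```

For example, with two nodes whose capacities are in a 2:1 ratio and six equal-cost requests, `shard_dataset` places four requests on the first node and two on the second. Budgeting by tokens rather than by request count keeps a few long audio prompts from overloading one node while short ones leave another idle.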

This architecture enables near-linear scalability, maximizing resource utilization. Benchmark tests demonstrate that AU-Harness achieves a 127% increase in throughput and cuts the real-time factor (RTF) by nearly 60% compared to existing evaluation suites. Consequently, tasks that previously required days can now be completed within hours, accelerating research cycles.
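The two reported figures measure different things. The real-time factor is processing time divided by audio duration (below 1 means faster than real time), while throughput counts samples completed per unit of wall-clock time. The sketch below shows the arithmetic, treating "nearly 60%" as 60% and using a hypothetical 10-hour baseline run; the helper names are illustrative only.

```python
def real_time_factor(processing_seconds: float, audio_seconds: float) -> float:
    """RTF = processing time / audio duration; RTF < 1 is faster than real time."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return processing_seconds / audio_seconds


def throughput(num_samples: int, wall_seconds: float) -> float:
    """Samples evaluated per second of wall-clock time."""
    return num_samples / wall_seconds


def apply_percent_increase(value: float, pct: float) -> float:
    return value * (1 + pct / 100)


def apply_percent_reduction(value: float, pct: float) -> float:
    return value * (1 - pct / 100)


# Hypothetical baseline: an evaluation run that takes 10 hours.
baseline_hours = 10.0
# A 60% lower RTF means the same audio is processed in 40% of the time.
hours_from_rtf = apply_percent_reduction(baseline_hours, 60)
# A 127% throughput increase means 2.27x as many samples per hour.
hours_from_throughput = baseline_hours / apply_percent_increase(1.0, 127)
print(f"{hours_from_rtf:.2f} h vs {hours_from_throughput:.2f} h")
```

Both figures imply roughly a 2.3x to 2.5x speedup on the same workload under these assumptions.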

Customizable and Flexible Evaluation Capabilities

AU-Harness prioritizes adaptability without sacrificing standardization. Each model can be evaluated with its own hyperparameters, such as temperature or maximum token limits, while the benchmarking protocol stays consistent. Researchers can also filter datasets by criteria such as accent, audio duration, or background noise for targeted performance analysis.
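A minimal sketch of the pattern: per-model decoding settings combined with one shared sample filter, so every model is scored on the same subset. The `ModelConfig`, `Sample`, and `SampleFilter` types, and the use of signal-to-noise ratio (`snr_db`) as the noise criterion, are hypothetical assumptions and not AU-Harness's configuration schema.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Set


@dataclass
class ModelConfig:
    """Per-model decoding settings (hypothetical fields)."""
    name: str
    temperature: float = 0.0
    max_tokens: int = 256


@dataclass
class Sample:
    sample_id: str
    accent: str
    duration_s: float
    snr_db: float  # assumed noise measure: higher means cleaner audio


@dataclass
class SampleFilter:
    accents: Optional[Set[str]] = None
    min_duration_s: float = 0.0
    max_duration_s: float = float("inf")
    min_snr_db: Optional[float] = None

    def accepts(self, s: Sample) -> bool:
        if self.accents is not None and s.accent not in self.accents:
            return False
        if not (self.min_duration_s <= s.duration_s <= self.max_duration_s):
            return False
        if self.min_snr_db is not None and s.snr_db < self.min_snr_db:
            return False
        return True


def build_eval_plan(models: List[ModelConfig], samples: List[Sample],
                    flt: SampleFilter) -> Dict[str, dict]:
    """Same filtered samples for every model; only the decoding settings differ."""
    selected = [s.sample_id for s in samples if flt.accepts(s)]
    return {
        m.name: {"temperature": m.temperature, "max_tokens": m.max_tokens,
                 "sample_ids": list(selected)}
        for m in models
    }
```

Keeping the filter separate from the model settings is what preserves comparability: hyperparameters vary per model, but the evaluated data never does.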

Crucially, AU-Harness supports multi-turn dialogue assessments, a feature absent in many prior frameworks. This enables evaluation of conversational continuity, contextual understanding, and adaptive reasoning over extended interactions, reflecting the operational realities of modern voice assistants.
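The essential difference from single-turn evaluation is that the model receives the whole conversation at every turn. The sketch below assumes a model exposed as a plain callable over a chat-style message list and uses text placeholders where a real benchmark would pass audio; `run_multi_turn` and `exact_match` are hypothetical helpers, not AU-Harness functions.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def run_multi_turn(
    model: Callable[[List[Message]], str],
    user_turns: List[str],
    references: List[str],
    score: Callable[[str, str], float],
) -> List[float]:
    """Score each assistant turn while the model sees the full conversation so far."""
    if len(user_turns) != len(references):
        raise ValueError("need exactly one reference per user turn")
    history: List[Message] = []
    scores: List[float] = []
    for user_content, reference in zip(user_turns, references):
        history.append({"role": "user", "content": user_content})
        # Pass a copy so the model cannot mutate the stored history.
        reply = model(list(history))
        history.append({"role": "assistant", "content": reply})
        scores.append(score(reply, reference))
    return scores


def exact_match(reply: str, reference: str) -> float:
    """Case-insensitive exact match, returning 1.0 or 0.0."""
    return float(reply.strip().lower() == reference.strip().lower())
```

A question in a later turn such as "What is my name?" can only be answered correctly if the model retains an earlier turn, which is exactly the contextual continuity this kind of evaluation probes.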

Extensive Task Coverage Across Diverse Audio Domains

AU-Harness covers a broad range of evaluation tasks. It integrates over 50 datasets with more than 380 subsets, spanning 21 distinct tasks grouped into six major categories:

- Speech Recognition: Including standard ASR, long-form transcription, and code-switching scenarios.

- Paralinguistics: Tasks such as emotion detection, accent identification, gender classification, and speaker verification.

- Audio Scene Understanding: Comprehension of environmental sounds and musical elements.

- Spoken Language Understanding: Encompassing question answering, translation, and dialogue summarization.

- Spoken Language Reasoning: Evaluating capabilities like speech-to-code generation, function invocation, and multi-step instruction execution.

- Safety and Security: Assessing robustness against adversarial inputs and spoofing attacks.
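Speech recognition tasks are conventionally scored with word error rate (WER): the minimum number of word substitutions, deletions, and insertions needed to turn the hypothesis into the reference, divided by the reference length. The exact metric and text normalization AU-Harness applies are not described here, so the following is a generic sketch of standard WER that skips normalization steps such as lowercasing or punctuation stripping.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between ref[:i-1] and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        cur = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            substitution = prev[j - 1] + (ref[i - 1] != hyp[j - 1])
            cur[j] = min(prev[j] + 1,      # deletion
                         cur[j - 1] + 1,   # insertion
                         substitution)
        prev = cur
    return prev[-1] / len(ref)
```

For instance, "the cat sit on mat" against the reference "the cat sat on the mat" has one substitution and one deletion, giving a WER of 2/6. Because insertions count, WER can exceed 1.0.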

Two additions stand out:

- LLM-Adaptive Diarization: A novel approach that leverages prompting techniques to perform speaker diarization without relying on specialized neural networks.

- Spoken Language Reasoning: A challenging benchmark that tests a model’s ability to interpret and act upon spoken instructions, moving beyond mere transcription.
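The article does not specify AU-Harness's diarization prompt, output format, or metric, so the sketch below only illustrates the general prompting approach under stated assumptions. It uses a hypothetical `SPEAKER_k: text` line format, parses the model's answer into (speaker, word) pairs, and scores a simplified word-level speaker error under the best mapping between hypothesis and reference labels. It assumes the word sequences are already aligned one-to-one, which a real metric would not.

```python
import itertools
import re
from typing import List, Optional, Tuple

# Hypothetical output format: one utterance per line, "SPEAKER_<id>: <text>".
LINE_RE = re.compile(r"^\s*(SPEAKER_\w+)\s*:\s*(.+)$")


def build_diarization_prompt(num_speakers: int) -> str:
    """Illustrative prompt asking the model to transcribe and label speakers."""
    return (
        "Transcribe the audio and attribute every utterance to a speaker. "
        f"Use at most {num_speakers} labels (SPEAKER_1, SPEAKER_2, ...) and write "
        "one utterance per line in the form 'SPEAKER_k: text'."
    )


def parse_speaker_words(output: str) -> List[Tuple[str, str]]:
    """Convert labeled lines into (speaker, word) pairs; unlabeled lines are skipped."""
    pairs: List[Tuple[str, str]] = []
    for line in output.splitlines():
        match = LINE_RE.match(line)
        if match:
            speaker, text = match.groups()
            pairs.extend((speaker, word) for word in text.split())
    return pairs


def word_speaker_error(ref: List[Tuple[str, str]], hyp: List[Tuple[str, str]]) -> float:
    """Share of words attributed to the wrong speaker under the best label mapping.

    Simplification: ref and hyp words are assumed aligned one-to-one, so
    transcription errors are ignored and only speaker labels are compared.
    """
    if not ref or len(ref) != len(hyp):
        raise ValueError("expected non-empty, equally long word sequences")
    ref_speakers = sorted({spk for spk, _ in ref})
    hyp_speakers = sorted({spk for spk, _ in hyp})
    # Surplus hypothesis speakers map to None, so their words count as errors.
    candidates: List[Optional[str]] = list(ref_speakers)
    candidates += [None] * max(0, len(hyp_speakers) - len(ref_speakers))
    best = len(ref)
    # Brute force over label mappings; fine for the handful of speakers typical here.
    for perm in itertools.permutations(candidates, len(hyp_speakers)):
        mapping = dict(zip(hyp_speakers, perm))
        errors = sum(mapping[h] != r for (r, _), (h, _) in zip(ref, hyp))
        best = min(best, errors)
    return best / len(ref)
```

Searching over label mappings matters because a model has no way to know which speaker the reference calls "SPEAKER_1". Only a consistent grouping of words by speaker can be judged. For many speakers, an assignment algorithm would replace the brute-force permutation search.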

Insights from Benchmarking Leading Audio Language Models

When evaluated with AU-Harness, prominent models such as GPT-4o, Qwen2.5-Omni, and Voxtral-Mini-3B show uneven performance across tasks. They perform well on automatic speech recognition and spoken question answering, reaching high accuracy.

However, they exhibit notable weaknesses in temporal reasoning tasks like diarization and in following complex, multi-step spoken instructions. A significant observation is the instruction modality gap: performance declines by up to 9.5 percentage points when tasks are presented as audio instructions rather than text, underscoring the ongoing challenge of adapting text-based reasoning skills to the audio domain.
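The modality gap is reported in percentage points, which is an absolute difference and not a relative one. The sketch below computes both for tasks scored under text and audio instructions. The task names and scores in the usage note are hypothetical placeholders, not results from the paper.

```python
from typing import Dict, Tuple


def modality_gaps(text_acc: Dict[str, float], audio_acc: Dict[str, float]) -> Dict[str, float]:
    """Absolute gap in percentage points (text minus audio) for tasks scored both ways."""
    shared = sorted(text_acc.keys() & audio_acc.keys())
    return {task: round(text_acc[task] - audio_acc[task], 4) for task in shared}


def relative_drop_percent(text_score: float, audio_score: float) -> float:
    """The same drop expressed relative to the text-instruction score."""
    return (text_score - audio_score) / text_score * 100


def largest_gap(gaps: Dict[str, float]) -> Tuple[str, float]:
    """Task with the biggest text-over-audio advantage."""
    return max(gaps.items(), key=lambda item: item[1])
```

For example, if a hypothetical task scored 80.0 with text instructions and 70.5 with audio instructions, the gap would be 9.5 percentage points but a relative drop of about 11.9%. Reporting points keeps gaps comparable across tasks with different baseline accuracy.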

Conclusion: Advancing Voice AI Evaluation with AU-Harness

AU-Harness is a significant step toward standardized, scalable assessment of audio language models. By combining fast evaluation, reproducible methodology, and comprehensive task coverage, including previously neglected areas such as diarization and spoken reasoning, it fills critical gaps in current benchmarking practice. Its open-source release and public leaderboard encourage collaboration, helping the AI community refine, compare, and push the limits of voice-first intelligent systems.