Google Research, in collaboration with Google DeepMind, has released VaultGemma 1B, the largest open-weight large language model (LLM) trained from scratch with differential privacy (DP). The release is a significant step toward AI systems that combine useful performance with formal privacy guarantees.

The Importance of Differential Privacy in Large Language Models

LLMs trained on extensive datasets sourced from the internet are vulnerable to memorization attacks, where sensitive or personally identifiable information (PII) embedded in the training data can be inadvertently exposed. Research has demonstrated that models without privacy constraints may reproduce exact fragments of their training data, posing serious privacy risks, especially when models are openly accessible.

Differential Privacy provides a provable mathematical framework that limits the influence of any individual data point on the model’s outputs. Unlike methods that apply DP only during fine-tuning phases, VaultGemma integrates DP throughout the entire pretraining process, ensuring privacy protection is embedded from the ground up.
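For reference, the guarantee can be written in its standard textbook form. This is the general (ε, δ) definition rather than anything specific to VaultGemma. The symbols are notation introduced here: M is the randomized training algorithm, D and D′ are any two datasets that differ in a single record, and S is any set of possible outputs.

```latex
\Pr[\mathcal{M}(D) \in S] \;\le\; e^{\varepsilon} \, \Pr[\mathcal{M}(D') \in S] + \delta
```

Smaller values of ε and δ mean that the output distribution changes less when one record is added or removed, which is what limits the influence of any individual data point.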

VaultGemma’s Model Design and Innovations

VaultGemma’s architecture builds upon the foundation of previous Gemma models but is specifically tailored for private training environments.

- Parameter count: 1 billion parameters spread across 26 layers.

- Architecture: Decoder-only transformer.

- Activation function: GeGLU with a feedforward dimension of 13,824.

- Attention mechanism: Multi-Query Attention (MQA) with global attention over a 1024-token context window.

- Normalization: RMSNorm applied in a pre-normalization setup.

- Tokenizer: SentencePiece with a vocabulary size of 256,000 tokens.

One key modification is shortening the sequence length to 1024 tokens, which reduces computational overhead and allows for larger batch sizes under the constraints imposed by differential privacy.
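The trade-off behind the shorter sequence length can be illustrated with some simple arithmetic. The sketch below is not from the VaultGemma release: the class and function names, the per-step token budget, and the 8192-token comparison length are all hypothetical, chosen only to show that a fixed token budget holds more sequences when each sequence is shorter. Only the four config values come from the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VaultGemmaConfig:
    """Hyperparameters quoted in the text; the field names are hypothetical."""
    num_layers: int = 26
    ffn_dim: int = 13_824        # GeGLU feedforward dimension
    context_length: int = 1024   # tokens per training sequence
    vocab_size: int = 256_000    # SentencePiece vocabulary size


def sequences_per_step(token_budget: int, seq_len: int) -> int:
    """Number of whole sequences that fit into a fixed per-step token budget."""
    return token_budget // seq_len


def relative_attention_cost(seq_len: int, baseline_len: int) -> float:
    """Per-sequence self-attention cost relative to a baseline length.

    Assumes the usual quadratic dependence of attention on sequence length.
    """
    return (seq_len / baseline_len) ** 2


cfg = VaultGemmaConfig()
budget = 2 ** 23  # hypothetical token budget per step, for illustration only
for seq_len in (8192, cfg.context_length):  # 8192 is a hypothetical comparison
    print(
        seq_len,
        sequences_per_step(budget, seq_len),
        relative_attention_cost(seq_len, cfg.context_length),
    )
```

Because DP-SGD adds noise once per batch, averaging over more sequences per step lowers the noise relative to the gradient signal, which is why larger batches are attractive under DP.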

Training Dataset and Data Hygiene

VaultGemma was trained on a massive corpus of approximately 13 trillion tokens, the same dataset used for Gemma 2. This dataset primarily consists of English-language web documents, programming code, and scientific literature.

To ensure ethical standards and data quality, the dataset underwent rigorous filtering to:

- Eliminate unsafe or harmful content.

- Minimize the presence of personal or sensitive information.

- Prevent overlap with evaluation benchmarks to maintain fair testing conditions.

These measures help maintain both the safety and integrity of the model’s outputs.

Implementing Differential Privacy in VaultGemma

The training process employed DP-SGD (Differentially Private Stochastic Gradient Descent), which clips each example’s gradient to a fixed norm and adds Gaussian noise to the aggregated gradient before every update. The implementation was built on the JAX Privacy library and introduced several optimizations to improve scalability:

- Vectorized per-example gradient clipping to improve parallel processing efficiency.

- Gradient accumulation techniques to simulate very large batch sizes.

- Truncated Poisson Subsampling integrated directly into the data pipeline for efficient, on-the-fly data sampling.
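The core DP-SGD update, including per-example clipping and gradient accumulation, can be sketched in a few lines. This is a minimal plain-Python illustration under stated assumptions, not the vectorized JAX Privacy implementation used for VaultGemma. The function names are hypothetical, gradients are flat lists of floats, and the result is normalized by the actual number of examples for simplicity.

```python
import math
import random


def clip_to_norm(grad, clip_norm):
    """Scale one example's gradient so its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, clip_norm / norm) if norm > 0.0 else 1.0
    return [g * scale for g in grad]


def dp_sgd_gradient(microbatches, clip_norm, noise_multiplier, rng):
    """Return a noisy average gradient from per-example gradients.

    microbatches is a list of microbatches, each a list of per-example
    gradients. Clipped gradients are summed across all microbatches
    (gradient accumulation), and Gaussian noise is added once per step.
    """
    dim = len(microbatches[0][0])
    total = [0.0] * dim
    count = 0
    for batch in microbatches:
        for grad in batch:
            clipped = clip_to_norm(grad, clip_norm)
            total = [t + c for t, c in zip(total, clipped)]
            count += 1
    # Noise standard deviation on the summed gradient.
    sigma = noise_multiplier * clip_norm
    noisy = [t + rng.gauss(0.0, sigma) for t in total]
    # Simplification: divide by the actual example count.
    return [n / count for n in noisy]


rng = random.Random(0)
# Hypothetical per-example gradients: two microbatches, three examples total.
grads = [[[3.0, 4.0], [0.3, 0.4]], [[-6.0, 8.0]]]
print(dp_sgd_gradient(grads, clip_norm=1.0, noise_multiplier=0.614, rng=rng))
```

Clipping bounds how much any single example can move the sum, and the noise scale is tied to that bound, which is what makes a formal privacy accounting possible.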

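These optimizations enabled VaultGemma to achieve a formal differential privacy guarantee of (ε ≤ 2.0, δ ≤ 1.1×10⁻¹⁰) at the sequence level. In other words, the unit of privacy is a single 1024-token training sequence, so the guarantee bounds how much any one such sequence can influence the trained model.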
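A guarantee of this kind depends on how batches are drawn, because the privacy analysis of DP-SGD typically relies on random subsampling. The sketch below shows one plausible reading of truncated Poisson subsampling and is not the VaultGemma data pipeline. The function name, the random truncation rule, and the use of index 0 as a padding placeholder are all assumptions made for illustration.

```python
import random


def truncated_poisson_batch(num_examples, sampling_prob, max_batch_size, rng):
    """Sample a batch by independent coin flips, then force a fixed size.

    Returns (indices, mask). indices always has max_batch_size entries;
    mask[i] is 1 for a real example and 0 for padding, so padded slots
    can be given zero weight in the gradient sum.
    """
    # Poisson subsampling: each example is included independently.
    sampled = [i for i in range(num_examples) if rng.random() < sampling_prob]
    # Truncation: if too many were drawn, keep a uniformly random subset.
    rng.shuffle(sampled)
    kept = sampled[:max_batch_size]
    # Padding: fill the remaining slots with a masked-out placeholder index.
    padding = max_batch_size - len(kept)
    indices = kept + [0] * padding
    mask = [1] * len(kept) + [0] * padding
    return indices, mask


rng = random.Random(0)
indices, mask = truncated_poisson_batch(
    num_examples=1000, sampling_prob=0.01, max_batch_size=16, rng=rng
)
print(len(indices), sum(mask))
```

Fixing the batch shape this way keeps the sampling random while letting the accelerator work with static array sizes.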

Scaling Laws Tailored for Private Model Training

Training large-scale models under DP constraints requires novel scaling approaches. The VaultGemma team developed DP-specific scaling laws incorporating three key advancements:

- Quadratic modeling of optimal learning rates based on extensive training runs.

- Parametric extrapolation of loss trajectories to reduce dependency on frequent intermediate checkpoints.

- Semi-parametric fitting techniques to generalize predictions across varying model sizes, training durations, and noise-to-batch ratios.

This framework allowed for accurate forecasting of training loss and efficient utilization of the TPUv6e cluster resources.
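The first of these ideas, quadratic modeling of the optimal learning rate, can be illustrated as follows. This is a sketch under assumptions, not the team's fitting code: it assumes the loss is modeled as a quadratic in log10 of the learning rate, it fits exactly three points, and the function names and loss values are hypothetical.

```python
import math


def fit_quadratic(xs, ys):
    """Exact parabola y = a*x^2 + b*x + c through three points (Lagrange form)."""
    (x0, x1, x2), (y0, y1, y2) = xs, ys
    d0 = (x0 - x1) * (x0 - x2)
    d1 = (x1 - x0) * (x1 - x2)
    d2 = (x2 - x0) * (x2 - x1)
    a = y0 / d0 + y1 / d1 + y2 / d2
    b = -(y0 * (x1 + x2) / d0 + y1 * (x0 + x2) / d1 + y2 * (x0 + x1) / d2)
    c = y0 * x1 * x2 / d0 + y1 * x0 * x2 / d1 + y2 * x0 * x1 / d2
    return a, b, c


def optimal_learning_rate(learning_rates, losses):
    """Vertex of a quadratic fitted to loss as a function of log10(lr)."""
    xs = [math.log10(lr) for lr in learning_rates]
    a, b, _ = fit_quadratic(xs, losses)
    if a <= 0:
        raise ValueError("fitted parabola has no minimum")
    return 10 ** (-b / (2 * a))


# Hypothetical final losses from three runs at different learning rates.
lrs = [1e-4, 1e-3, 1e-2]
losses = [3.0, 2.0, 3.0]
print(optimal_learning_rate(lrs, losses))
```

Estimating the optimum from a fitted curve means only a handful of learning rates need to be tried per configuration, rather than a dense sweep.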

Training Setup and Performance Metrics

VaultGemma was trained on 2048 TPUv6e accelerators using GSPMD partitioning and MegaScale XLA compilation.

- Effective batch size: Approximately 518,000 tokens per iteration.

- Total training steps: 100,000 iterations.

- Noise multiplier: 0.614, balancing privacy and utility.

The final training loss closely matched predictions from the DP scaling laws, deviating by less than 1%, validating the robustness of the training methodology.

Comparative Evaluation: VaultGemma vs. Non-Private Models

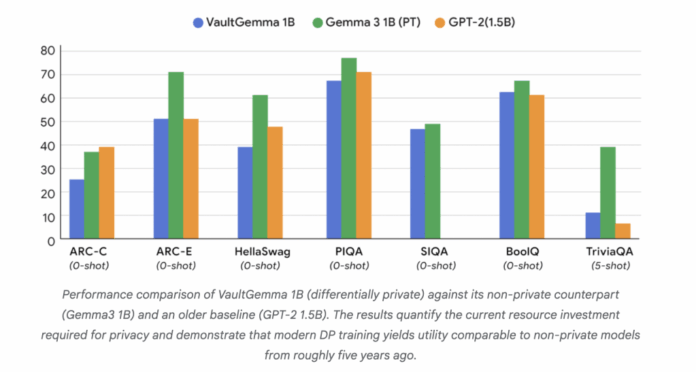

While VaultGemma’s performance on standard academic benchmarks lags behind non-private models, it demonstrates substantial practical utility:

- ARC-Challenge: VaultGemma scored 26.45 compared to 38.31 by Gemma-3 1B.

- PIQA: VaultGemma scored 68.0 compared to 70.51 by GPT-2 1.5B.

- TriviaQA (5-shot): VaultGemma reached 11.24, while Gemma-3 1B scored 39.75.

These results suggest that DP-trained models currently perform on par with non-private models from roughly five years ago. Crucially, memorization tests found no detectable memorization of training data in VaultGemma, in contrast to earlier, non-private Gemma models.
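To make the size of the utility gap concrete, the scores quoted above can be compared directly. The snippet below only restates those numbers and computes the differences. The function name is hypothetical, and the baseline model differs between rows, so the gaps are not directly comparable with one another.

```python
# (benchmark, VaultGemma score, baseline model, baseline score), as quoted above.
results = [
    ("ARC-Challenge", 26.45, "Gemma-3 1B", 38.31),
    ("PIQA", 68.0, "GPT-2 1.5B", 70.51),
    ("TriviaQA (5-shot)", 11.24, "Gemma-3 1B", 39.75),
]


def score_gaps(rows):
    """Absolute gap (baseline minus VaultGemma), rounded to two decimals."""
    return {name: round(base - ours, 2) for name, ours, _, base in rows}


for name, gap in score_gaps(results).items():
    print(f"{name}: {gap:.2f} points behind the baseline")
```

The gap is smallest on PIQA, where the baseline is the older GPT-2 1.5B, and largest on TriviaQA, a benchmark that rewards recall of specific facts.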

Conclusion: Pioneering Privacy-First Large Language Models

VaultGemma 1B demonstrates that it is feasible to train large-scale language models with strong differential privacy guarantees without rendering them impractical for real-world use. Although there remains a gap in utility compared to non-private counterparts, the open release of VaultGemma and its training framework lays a solid foundation for future research in privacy-preserving AI. This initiative marks a pivotal shift toward creating AI systems that are not only capable and efficient but also inherently secure, transparent, and respectful of user privacy.