Building a Robust Neural Agent: Integrating Classical Techniques with Modern Stability Enhancements

This guide builds a neural agent that pairs foundational neural network techniques with modern safeguards for stable training and reliable performance. Xavier initialization keeps gradient magnitudes balanced across layers, and the activation functions (leaky ReLU, sigmoid, and tanh) clip their inputs to prevent numerical overflow. Gradient clipping, momentum-based parameter updates, and weight decay regularization further improve training robustness.

Training Strategy for Stability and Efficiency

Training uses mini-batch gradient descent with early stopping, an adaptive learning rate, and automatic parameter resets when instability is detected, which together help the model cope with noisier, higher-dimensional datasets. Targets are normalized to improve convergence, and performance is measured with Mean Squared Error (MSE), Mean Absolute Error (MAE), and the coefficient of determination (R²).

Extending the Agent with Experience Replay and Exploration

To emulate reinforcement learning behavior, the agent adds an experience replay buffer and exploratory decision-making. This lets the same agent handle regression, classification problems recast as regression, and reinforcement-learning-style tasks.

Core Libraries and Environment Setup

```python
import numpy as np
import matplotlib.pyplot as plt
from sklearn.datasets import make_classification, make_regression
from sklearn.preprocessing import StandardScaler
from sklearn.model_selection import train_test_split
import warnings

warnings.filterwarnings('ignore')
```

We begin by importing NumPy for numerical operations, Matplotlib for visualization, and scikit-learn for dataset generation, preprocessing, and train/validation splitting. Warnings are suppressed to keep the output clean during execution.

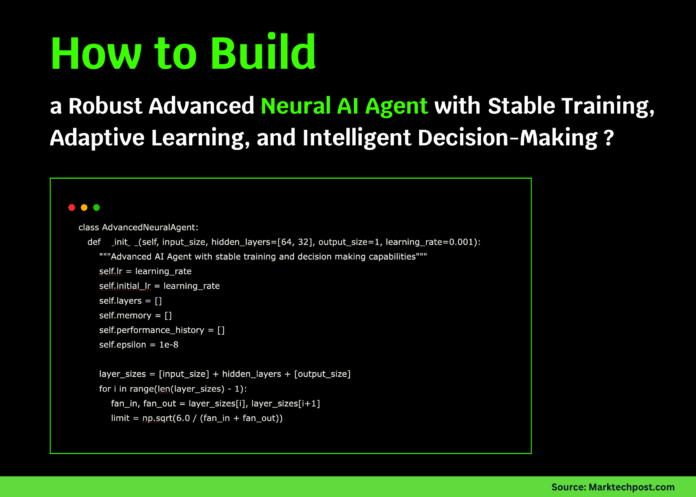

AdvancedNeuralAgent Class: Architecture and Functionalities

The AdvancedNeuralAgent class encapsulates the neural network model with features aimed at stable training and intelligent decision-making.

Initialization with Xavier Initialization and Momentum Buffers

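The network layers are initialized with the Xavier (Glorot) uniform distribution, which scales each layer's weight range by its fan-in and fan-out so that activation and gradient variances stay roughly balanced from layer to layer. Momentum buffers for weights and biases start at zero and accumulate past gradients to accelerate convergence and smooth updates.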
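A minimal sketch of this initialization, assuming weights are stored as `(fan_in, fan_out)` matrices and biases as row vectors. The function name `xavier_init` and its `layer_sizes` argument are hypothetical, not taken from the class itself. Each weight is drawn from U(-limit, limit) with limit = sqrt(6 / (fan_in + fan_out)), and every momentum buffer starts at zero:

```python
import numpy as np

def xavier_init(layer_sizes, seed=0):
    """Xavier/Glorot uniform weights, zero biases, and zeroed momentum buffers.

    layer_sizes is a hypothetical argument, e.g. [5, 32, 16, 1] for
    5 inputs, two hidden layers, and one output.
    """
    rng = np.random.default_rng(seed)
    weights, biases = [], []
    for fan_in, fan_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        # Glorot bound keeps the weight variance at 2 / (fan_in + fan_out)
        limit = np.sqrt(6.0 / (fan_in + fan_out))
        weights.append(rng.uniform(-limit, limit, size=(fan_in, fan_out)))
        biases.append(np.zeros((1, fan_out)))
    # Momentum buffers mirror the parameter shapes and start at zero
    w_velocity = [np.zeros_like(w) for w in weights]
    b_velocity = [np.zeros_like(b) for b in biases]
    return weights, biases, w_velocity, b_velocity

weights, biases, w_vel, b_vel = xavier_init([5, 32, 16, 1])
print([w.shape for w in weights])  # [(5, 32), (32, 16), (16, 1)]
```

Because the bound shrinks as layers get wider, wide layers start with smaller individual weights, which is what keeps the signal scale roughly constant through the network.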

Activation Functions with Clipping for Numerical Stability

Multiple activation functions are supported, including leaky ReLU, sigmoid, tanh, and linear, all with input clipping to avoid overflow issues during forward and backward passes.
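The sketch below shows one way to implement clipped activations and their derivatives in NumPy. The clipping bound of 500, the leaky-ReLU slope of 0.01, and the helper names are assumptions. Clipping at 500 keeps `np.exp` well below its float64 overflow point (about 709):

```python
import numpy as np

CLIP = 500.0   # assumed clipping bound; the article does not state the value
ALPHA = 0.01   # assumed leaky-ReLU negative slope

def activate(z, kind):
    """Apply an activation after clipping its input to [-CLIP, CLIP]."""
    z = np.clip(z, -CLIP, CLIP)
    if kind == "leaky_relu":
        return np.where(z > 0, z, ALPHA * z)
    if kind == "sigmoid":
        return 1.0 / (1.0 + np.exp(-z))
    if kind == "tanh":
        return np.tanh(z)
    if kind == "linear":
        return z
    raise ValueError(f"unknown activation: {kind}")

def activate_grad(z, kind):
    """Derivative of the activation with respect to its (clipped) input, used in backprop."""
    z = np.clip(z, -CLIP, CLIP)
    if kind == "leaky_relu":
        return np.where(z > 0, 1.0, ALPHA)
    if kind == "sigmoid":
        s = 1.0 / (1.0 + np.exp(-z))
        return s * (1.0 - s)
    if kind == "tanh":
        return 1.0 - np.tanh(z) ** 2
    if kind == "linear":
        return np.ones_like(z)
    raise ValueError(f"unknown activation: {kind}")

# Extreme pre-activations no longer trigger overflow in np.exp
print(activate(np.array([-1e6, 0.0, 1e6]), "sigmoid"))
```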

Forward and Backward Propagation with Gradient Clipping

The forward pass computes activations layer-by-layer, applying clipping to intermediate values. The backward pass implements gradient clipping to prevent exploding gradients, combined with momentum and weight decay to stabilize updates.
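A hedged sketch of a single parameter update that combines the three stabilizers. It assumes norm-based gradient clipping (the text does not say whether clipping is by norm or by value), L2 weight decay added to the gradient after clipping, and classical momentum. The function names and default hyperparameters are illustrative:

```python
import numpy as np

def clip_by_norm(grad, max_norm=1.0):
    """Rescale grad so its L2 norm is at most max_norm (direction is preserved)."""
    norm = np.linalg.norm(grad)
    if norm > max_norm:
        grad = grad * (max_norm / norm)
    return grad

def momentum_step(param, grad, velocity, lr=0.01, momentum=0.9,
                  weight_decay=1e-4, max_norm=1.0):
    """One update for a single weight or bias array; returns (new_param, new_velocity)."""
    grad = clip_by_norm(grad, max_norm)      # guard against exploding gradients
    grad = grad + weight_decay * param       # L2 penalty pulls weights toward zero
    velocity = momentum * velocity - lr * grad
    return param + velocity, velocity

# Two steps with a constant gradient: the velocity builds up across steps
p, v = np.array([1.0]), np.zeros(1)
for _ in range(2):
    p, v = momentum_step(p, np.array([0.5]), v, lr=0.1, weight_decay=0.0)
print(p, v)
```

In a full network this update would run once per weight matrix and bias vector, each with its own velocity buffer from initialization.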

Adaptive Learning Rate and Early Stopping

The learning rate dynamically adjusts based on recent validation performance, decreasing when progress stalls and increasing when improvements are significant. Early stopping halts training if no improvement is observed over a patience window, preventing overfitting.
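One possible scheduler, sketched under assumptions: the learning rate grows by 5% when the validation loss beats the best value so far by more than 5%, halves every `stall` epochs without improvement, and training stops after `patience` such epochs. The class name `AdaptiveLR` and all thresholds are hypothetical:

```python
import math

class AdaptiveLR:
    """Adaptive learning rate plus early stopping driven by validation loss (illustrative)."""

    def __init__(self, lr=0.01, patience=20, stall=5, decay=0.5, growth=1.05,
                 min_lr=1e-6, max_lr=0.1, min_delta=1e-4):
        self.lr, self.patience, self.stall = lr, patience, stall
        self.decay, self.growth = decay, growth
        self.min_lr, self.max_lr, self.min_delta = min_lr, max_lr, min_delta
        self.best = math.inf
        self.wait = 0  # epochs since the last meaningful improvement

    def update(self, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            # A gain of more than 5% over the previous best counts as "significant"
            big_gain = self.best != math.inf and (self.best - val_loss) > 0.05 * self.best
            self.best = val_loss
            self.wait = 0
            if big_gain:
                self.lr = min(self.lr * self.growth, self.max_lr)
        else:
            self.wait += 1
            if self.wait % self.stall == 0:  # progress has stalled: shrink the step size
                self.lr = max(self.lr * self.decay, self.min_lr)
        return self.wait >= self.patience
```

Inside a training loop, `update` would be called once per epoch with the validation loss, the current `lr` read back before the next epoch, and the loop exited as soon as it returns `True`.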

Experience Replay and Decision Making

The agent stores past experiences to enable replay, enhancing learning stability. Decision-making incorporates an exploration rate, adding controlled noise to predictions to balance exploitation and exploration.
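A minimal sketch of both ideas. The buffer is a bounded `collections.deque` that discards the oldest transition when full, and `decide` uses an epsilon-style rule: with probability `exploration_rate` it adds Gaussian noise to the prediction, otherwise it returns the prediction unchanged. The names, the transition tuple layout, and the noise scale are assumptions rather than the article's exact implementation:

```python
import random
from collections import deque

import numpy as np

class ReplayBuffer:
    """Fixed-capacity store of past transitions for later replay (illustrative)."""

    def __init__(self, capacity=1000, seed=0):
        self.memory = deque(maxlen=capacity)  # oldest entries drop out automatically
        self.rng = random.Random(seed)

    def store(self, state, action, reward, next_state, done):
        self.memory.append((state, action, reward, next_state, done))

    def sample(self, batch_size):
        """Uniformly sample up to batch_size transitions without replacement."""
        return self.rng.sample(list(self.memory), min(batch_size, len(self.memory)))

    def __len__(self):
        return len(self.memory)

def decide(prediction, exploration_rate, rng, noise_scale=0.1):
    """Explore (noisy output) with probability exploration_rate, else exploit."""
    if rng.random() < exploration_rate:
        return prediction + rng.normal(0.0, noise_scale, size=np.shape(prediction))
    return prediction

rng = np.random.default_rng(0)
print(decide(np.array([1.0, 2.0]), exploration_rate=0.0, rng=rng))  # pure exploitation
```

Setting `exploration_rate` to 0 gives the purely greedy prediction, which is a convenient baseline when comparing exploration against exploitation.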

Automatic Reset on Instability

If training becomes unstable (for example, the loss turns NaN or explodes), the network parameters are reinitialized so that training can recover from divergence without manual intervention.
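The check below sketches one plausible instability test, assuming that a non-finite loss, a loss above a fixed limit, or any non-finite weight counts as divergence. `reinit` is a hypothetical callable that returns freshly initialized parameters, for example a wrapper around Xavier initialization:

```python
import numpy as np

def is_unstable(loss, weights, loss_limit=1e6):
    """Assumed criteria: non-finite or exploding loss, or any non-finite weight."""
    if not np.isfinite(loss) or loss > loss_limit:
        return True
    return any(not np.all(np.isfinite(w)) for w in weights)

def maybe_reset(loss, weights, reinit):
    """Return (weights, was_reset); reinit() must produce a fresh parameter list."""
    if is_unstable(loss, weights):
        return reinit(), True
    return weights, False

weights, was_reset = maybe_reset(float("nan"), [np.ones((2, 2))], lambda: [np.zeros((2, 2))])
print(was_reset)  # True
```

A reset would usually also clear the momentum buffers, since stale velocities from a diverged run would immediately push the fresh weights off course.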

Training and Evaluation Methods

The training method normalizes targets, splits data into training and validation sets, and iteratively updates the model while tracking losses. Evaluation computes MSE, MAE, and R² to provide a comprehensive performance overview. Visualization utilities plot training and validation losses, performance history, and learning rate schedules for insightful monitoring.
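The normalization and metric computations fit in a few lines of NumPy. This sketch assumes z-score normalization of the targets (the text says only that targets are normalized) and that predictions are mapped back to the original scale before metrics are computed. The helper names are hypothetical; the metrics follow the standard definitions, with R² = 1 - SS_res / SS_tot:

```python
import numpy as np

def normalize_targets(y):
    """Z-score the targets; return the scaled values plus the statistics needed to undo it."""
    mean, std = y.mean(), y.std()
    std = std if std > 0 else 1.0  # avoid dividing by zero for constant targets
    return (y - mean) / std, mean, std

def denormalize(y_norm, mean, std):
    return y_norm * std + mean

def regression_metrics(y_true, y_pred):
    """MSE, MAE, and R² on the original target scale."""
    err = y_true - y_pred
    mse = np.mean(err ** 2)
    mae = np.mean(np.abs(err))
    ss_res = np.sum(err ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else 0.0
    return {"mse": mse, "mae": mae, "r2": r2}

print(regression_metrics(np.array([1.0, 2.0, 3.0]), np.array([1.0, 2.0, 4.0])))
```

For the example above, one error of 1 over three samples gives MSE = MAE = 1/3, and since SS_tot = 2, R² = 1 - 1/2 = 0.5.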

Demonstration Suite: Testing the Agent Across Diverse Scenarios

The AIAgentDemo class orchestrates a series of experiments to validate the agent’s capabilities on various datasets and configurations.

Dataset Generation

- Simple Regression: 600 samples, 5 features, low noise.

- Complex Regression: 800 samples, 10 features, higher noise.

- Classification-to-Regression: 700 samples, 8 features, binary classes converted to continuous targets with noise.
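The three datasets can be generated with scikit-learn's `make_regression` and `make_classification`, both of which the article imports. The sample and feature counts come from the list above. The noise levels (0.1 and 20.0 for the two regression sets, Gaussian noise with standard deviation 0.1 added to the class labels) and the dictionary keys are assumptions, since the text only says "low" and "higher" noise:

```python
import numpy as np
from sklearn.datasets import make_classification, make_regression

def make_datasets(seed=42):
    """Build the three demo datasets; noise levels are illustrative guesses."""
    X1, y1 = make_regression(n_samples=600, n_features=5, noise=0.1, random_state=seed)
    X2, y2 = make_regression(n_samples=800, n_features=10, noise=20.0, random_state=seed)
    X3, labels = make_classification(n_samples=700, n_features=8, n_classes=2,
                                     random_state=seed)
    # Turn the 0/1 class labels into a continuous target by adding small Gaussian noise
    rng = np.random.default_rng(seed)
    y3 = labels.astype(float) + rng.normal(0.0, 0.1, size=labels.shape)
    return {
        "simple_regression": (X1, y1),
        "complex_regression": (X2, y2),
        "classification_to_regression": (X3, y3),
    }

for name, (X, y) in make_datasets().items():
    print(name, X.shape, y.shape)
```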

Configuration Testing

Multiple network architectures are evaluated, including lightweight, standard, deep, and wide models, each with tailored hidden layer sizes and learning rates. Each configuration is trained and assessed, with results logged for comparison.
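The article does not list the exact layer sizes or learning rates, so the values below are placeholders that only illustrate the shape of such a configuration table. The helper `count_parameters` (hypothetical) shows how network complexity, measured as weights plus biases, grows with each architecture:

```python
# Placeholder hyperparameters: the article names these four architectures
# but does not give their exact sizes or learning rates.
CONFIGS = {
    "lightweight": {"hidden_layers": [16], "learning_rate": 0.01},
    "standard": {"hidden_layers": [32, 16], "learning_rate": 0.005},
    "deep": {"hidden_layers": [64, 32, 16, 8], "learning_rate": 0.002},
    "wide": {"hidden_layers": [128, 64], "learning_rate": 0.003},
}

def count_parameters(n_inputs, hidden_layers, n_outputs=1):
    """Number of trainable weights plus biases in a fully connected network."""
    sizes = [n_inputs, *hidden_layers, n_outputs]
    return sum(fan_in * fan_out + fan_out for fan_in, fan_out in zip(sizes[:-1], sizes[1:]))

for name, cfg in CONFIGS.items():
    print(f"{name:12s} layers={cfg['hidden_layers']} lr={cfg['learning_rate']} "
          f"params={count_parameters(10, cfg['hidden_layers'])}")
```

Deeper and wider configurations are typically paired with smaller learning rates, which is the convention the placeholder values follow.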

Comprehensive Demo Execution

The demo runs every dataset-configuration combination, reports the success rate, and highlights the top performers by R² score. It also probes the agent's behavior: memory capacity, decision-making under exploration versus exploitation, learning progress, and network complexity.
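Computing the success rate and ranking the runs could look like the following sketch. It assumes each run is recorded as a dict with `dataset`, `config`, and `r2` keys, plus an `error` key for failed runs; this result schema and the function name `summarize` are hypothetical:

```python
def summarize(results, top_k=3):
    """Return (success_rate, top_runs), ranking successful runs by R² (highest first)."""
    succeeded = [r for r in results if r.get("error") is None]
    success_rate = len(succeeded) / len(results) if results else 0.0
    top = sorted(succeeded, key=lambda r: r["r2"], reverse=True)[:top_k]
    return success_rate, top
```

Failed runs count against the success rate but are excluded from the ranking, so a configuration that diverges on one dataset can still appear among the top performers on another.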

Quick Demo: Fast Validation on a Simple Regression Task

A streamlined demo trains the agent on a regression dataset with six features using a compact two-layer network. After 100 epochs, it evaluates performance and visualizes training dynamics, providing immediate feedback on model behavior.
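For readers who want to see the moving parts without the full class, here is a self-contained NumPy sketch of a comparable quick run. Several details are assumptions: the synthetic data (600 samples, 6 standard-normal features, a linear target with small noise), an 80/20 train/test split, two hidden layers of 32 and 16 leaky-ReLU units, momentum SGD with mini-batches of 32, and a learning rate of 0.005. Gradient clipping, weight decay, and early stopping are left out for brevity:

```python
import numpy as np

def leaky(z, alpha=0.01):
    return np.where(z > 0, z, alpha * z)

def leaky_grad(z, alpha=0.01):
    return np.where(z > 0, 1.0, alpha)

def forward(X, W, B):
    """Return pre-activations and activations; hidden layers use leaky ReLU, output is linear."""
    acts, zs = [X], []
    for i, (w, b) in enumerate(zip(W, B)):
        z = acts[-1] @ w + b
        zs.append(z)
        acts.append(z if i == len(W) - 1 else leaky(z))
    return zs, acts

def quick_demo(epochs=100, batch_size=32, lr=0.005, momentum=0.9, seed=0):
    rng = np.random.default_rng(seed)
    # Assumed stand-in data: linear target on 6 standard-normal features plus small noise
    X = rng.normal(size=(600, 6))
    y = X @ rng.normal(size=(6, 1)) + 0.1 * rng.normal(size=(600, 1))
    X_tr, X_te, y_tr, y_te = X[:480], X[480:], y[:480], y[480:]
    mu, sd = y_tr.mean(), y_tr.std()  # normalize targets with training statistics only
    y_tr, y_te = (y_tr - mu) / sd, (y_te - mu) / sd

    sizes = [6, 32, 16, 1]  # two hidden layers (sizes assumed)
    W = [rng.uniform(-np.sqrt(6 / (a + b)), np.sqrt(6 / (a + b)), size=(a, b))
         for a, b in zip(sizes[:-1], sizes[1:])]
    B = [np.zeros((1, b)) for b in sizes[1:]]
    vW = [np.zeros_like(w) for w in W]
    vB = [np.zeros_like(b) for b in B]

    losses = []
    for _ in range(epochs):
        order = rng.permutation(len(X_tr))
        for start in range(0, len(X_tr), batch_size):
            idx = order[start:start + batch_size]
            zs, acts = forward(X_tr[idx], W, B)
            delta = 2.0 * (acts[-1] - y_tr[idx]) / len(idx)  # dMSE / d(output)
            for i in reversed(range(len(W))):
                gW = acts[i].T @ delta
                gB = delta.sum(axis=0, keepdims=True)
                if i > 0:  # propagate through W[i] before it is updated
                    delta = (delta @ W[i].T) * leaky_grad(zs[i - 1])
                vW[i] = momentum * vW[i] - lr * gW
                vB[i] = momentum * vB[i] - lr * gB
                W[i] += vW[i]
                B[i] += vB[i]
        # Full-pass training MSE after each epoch, for plotting the loss curve
        losses.append(float(np.mean((forward(X_tr, W, B)[1][-1] - y_tr) ** 2)))

    pred = forward(X_te, W, B)[1][-1]
    r2 = 1.0 - np.sum((y_te - pred) ** 2) / np.sum((y_te - y_te.mean()) ** 2)
    return losses, float(r2)

losses, r2 = quick_demo()
print(f"final train MSE {losses[-1]:.4f}, test R^2 {r2:.3f}")
```

The returned `losses` list can be passed straight to `plt.plot` to reproduce the kind of training-curve visualization the quick demo produces.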

Main Execution Flow

The script’s entry point offers options to run either the quick or comprehensive demo. By default, the comprehensive demo is executed, training multiple agents across datasets and configurations, then summarizing the best results.
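A minimal sketch of such an entry point, assuming a `--quick` command-line flag selects the fast demo. The article may hard-code the choice instead, and `run_quick_demo` / `run_comprehensive_demo` are hypothetical stand-ins for the real demo routines:

```python
import argparse

def run_quick_demo():
    # Hypothetical placeholder for the single-task quick demo
    return "quick"

def run_comprehensive_demo():
    # Hypothetical placeholder for the full dataset x configuration suite
    return "comprehensive"

def main(argv=None):
    parser = argparse.ArgumentParser(description="Neural agent demos")
    parser.add_argument("--quick", action="store_true",
                        help="run the fast single-task demo instead of the full suite")
    args = parser.parse_args(argv)
    return run_quick_demo() if args.quick else run_comprehensive_demo()

if __name__ == "__main__":
    main()
```

Without the flag the comprehensive suite runs, matching the default behavior described above.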

Summary and Insights

This implementation highlights how stability-focused design choices such as weight decay, gradient clipping, and adaptive learning rates contribute to consistent, reliable neural network training. Experience replay and exploratory actions make the agent usable across several kinds of machine learning tasks. Testing across diverse datasets and architectures, with R², MSE, and MAE reported for every run, makes it straightforward to compare configurations, and the visualization tools help in understanding training progression and convergence patterns. Together, these pieces make the agent a solid starting point for more advanced AI experiments.