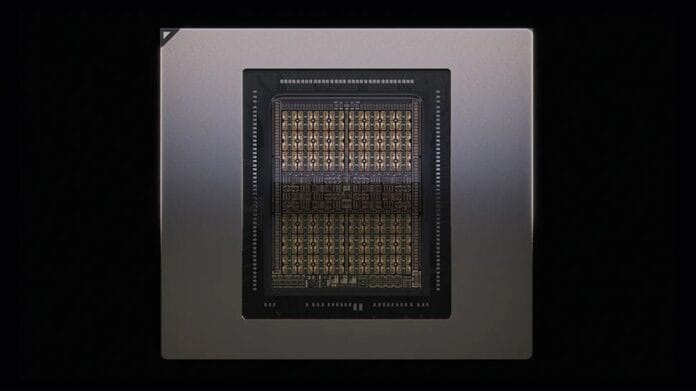

Introducing Nvidia’s Rubin CPX GPU: A Leap Forward in Enterprise AI Processing

Nvidia has unveiled the Rubin CPX, a GPU engineered specifically for AI workloads that require long-context processing. The new part carries 128GB of GDDR7 memory, a notably large capacity for a GDDR-based GPU aimed at enterprise applications.

Rubin CPX: A Specialized Compute Powerhouse

Contrary to circulating rumors about a 128GB RTX gaming card, the Rubin CPX is not a gaming product. It is a compute engine optimized for AI inference. Its primary use cases include software development, scientific research, and high-definition video generation, all of which depend on handling very large contexts efficiently.

Vera Rubin NVL144 CPX Rack: Massive Scale AI Computing

The Rubin CPX GPU powers the Vera Rubin NVL144 CPX rack, an AI compute system that integrates 144 Rubin CPX GPUs alongside 144 Rubin GPUs and 36 Vera CPUs. This configuration delivers 8 exaFLOPs of NVFP4 compute, backed by 100TB of fast memory and 1.7 petabytes per second of memory bandwidth.
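
To put the rack-level figures in proportion, the short Python sketch below derives two ratios from the numbers quoted above. It assumes decimal SI units and, purely for illustration, treats the 1.7 PB/s figure as if it applied to the whole 100TB pool, which the announcement does not state. The function and constant names are illustrative only.

```python
# Rack-level figures as quoted in the article (decimal SI units assumed).
NVFP4_FLOPS = 8e18              # 8 exaFLOPs of NVFP4 compute
MEMORY_BYTES = 100e12           # 100 TB of memory
BANDWIDTH_BYTES_PER_S = 1.7e15  # 1.7 PB/s of memory bandwidth


def sweep_time_seconds(memory_bytes: float, bandwidth: float) -> float:
    """Time to read the whole memory pool once at the quoted bandwidth."""
    return memory_bytes / bandwidth


def flops_per_byte(flops: float, bandwidth: float) -> float:
    """Peak compute available per byte moved (a rough machine-balance figure)."""
    return flops / bandwidth


sweep_ms = sweep_time_seconds(MEMORY_BYTES, BANDWIDTH_BYTES_PER_S) * 1e3
balance = flops_per_byte(NVFP4_FLOPS, BANDWIDTH_BYTES_PER_S)

print(f"One full memory sweep: {sweep_ms:.1f} ms")        # 58.8 ms
print(f"Peak compute per byte: {balance:.0f} FLOPs/byte")  # 4706 FLOPs/byte
```

Under these assumptions, the rack could read its entire memory pool in roughly 59 milliseconds, and it has several thousand FLOPs of peak compute available for every byte moved. These are peak, back-of-the-envelope ratios rather than measured results.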

Equipped with four NVENC and four NVDEC units, the Rubin CPX also accelerates video encoding and decoding, streamlining multimedia workflows. Additionally, Nvidia says its hardware attention acceleration makes attention processing three times faster than on the previous GB300 NVL72 system, improving the efficiency of attention-based AI models.

Disaggregated Inference Architecture for Optimized AI Workloads

Nvidia’s approach with Rubin CPX centers on disaggregated inference: the Rubin CPX handles the compute-intensive context (prefill) phase of AI processing, while the other Rubin GPUs and Vera CPUs manage the generation (decode) phase. Matching each phase to suitable hardware raises throughput and reduces the operational cost of deploying high-value inference models.

Behind the scenes, Nvidia’s Dynamo software orchestrates low-latency KV cache transfers and routes requests between components, keeping the context and generation hardware well utilized.
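
The split between the context and generation phases can be illustrated with a toy Python sketch. This is not Dynamo’s API: every class and method name here (`ContextWorker`, `GenerationWorker`, `Router`, `serve`) is hypothetical, the "cache" is a plain list of placeholder strings, and round-robin routing is an assumption made only to keep the example short.

```python
from dataclasses import dataclass
from itertools import cycle


@dataclass
class Request:
    request_id: str
    prompt_tokens: list[str]
    max_new_tokens: int


@dataclass
class KVCache:
    request_id: str
    entries: list[str]  # one placeholder entry per token seen so far


class ContextWorker:
    """Hypothetical stand-in for a context-phase (prefill) device."""

    def prefill(self, request: Request) -> KVCache:
        # Processes the whole prompt in one pass; this is the compute-heavy step.
        return KVCache(request.request_id, [f"kv:{t}" for t in request.prompt_tokens])


class GenerationWorker:
    """Hypothetical stand-in for a generation-phase (decode) device."""

    def decode(self, cache: KVCache, max_new_tokens: int) -> list[str]:
        output = []
        for step in range(max_new_tokens):
            token = f"tok{step}"  # placeholder for a sampled token
            cache.entries.append(f"kv:{token}")  # cache grows by one entry per step
            output.append(token)
        return output


class Router:
    """Round-robin router that hands each request's cache from one pool to the other."""

    def __init__(self, context_workers, generation_workers):
        self._context = cycle(context_workers)
        self._generation = cycle(generation_workers)

    def serve(self, request: Request) -> tuple[list[str], KVCache]:
        cache = next(self._context).prefill(request)
        # In a real deployment this hand-off is a cache transfer between devices.
        tokens = next(self._generation).decode(cache, request.max_new_tokens)
        return tokens, cache


router = Router([ContextWorker()], [GenerationWorker(), GenerationWorker()])
tokens, cache = router.serve(Request("r1", ["long", "prompt", "here"], max_new_tokens=2))
print(tokens)              # ['tok0', 'tok1']
print(len(cache.entries))  # 5
```

The point of the sketch is the hand-off: the context worker builds the cache once for the full prompt, and the generation worker then extends it one token at a time. The two pools can therefore be sized and provisioned independently.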

Connectivity and Future Roadmap

For scale-out networking, the NVL144 CPX rack pairs with either Quantum-X800 InfiniBand or Spectrum-X Ethernet, together with ConnectX-9 SuperNICs, providing the high-speed data transfer that large-scale AI computation requires.

Following a recent tape-out at TSMC, Nvidia plans to begin shipping Rubin CPX GPUs and NVL144 CPX racks by late 2026. Looking ahead, the company’s roadmap includes the Rubin Ultra, expected in 2027, and the Feynman architecture slated for 2028. These future iterations promise higher-density modules, integration of HBM4E memory, and accelerated networking capabilities, further pushing the boundaries of AI hardware performance.

Expanding the AI Hardware Landscape

As AI workloads grow increasingly complex, Nvidia’s Rubin CPX and Vera Rubin rack represent a strategic leap in addressing the demands of enterprise AI. Comparable advancements are also underway in the industry, such as AMD’s development of the 432GB MI400 GPU, highlighting a competitive push toward larger memory capacities and enhanced compute power in AI accelerators.
