Comprehensive NLP Pipeline Using Gensim and Python Libraries in Google Colab

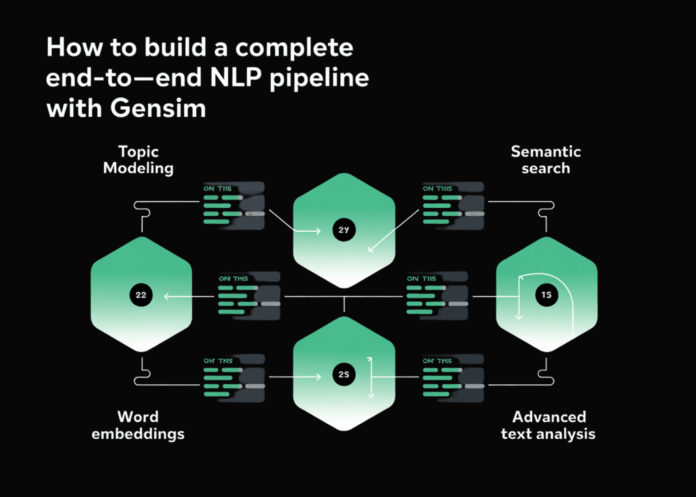

This guide walks through an end-to-end Natural Language Processing (NLP) workflow built with Gensim and supporting Python libraries, designed to run in Google Colab. The pipeline covers text preprocessing, topic extraction with Latent Dirichlet Allocation (LDA), word embeddings with Word2Vec, document similarity with TF-IDF, and a similarity-based search over the corpus. Beyond model training and evaluation, it includes visualizations, topic analysis, and a document classification example. Combining statistical techniques with machine learning models, the framework gives a starting point for exploring and experimenting with text data.

Setting Up the Environment and Dependencies

To ensure smooth operation, we begin by installing and updating critical libraries including SciPy, Gensim, NLTK, and visualization tools like Matplotlib and Seaborn. These packages provide the backbone for data manipulation, model building, and graphical representation. Additionally, we download necessary NLTK datasets such as tokenizers and stopword lists to facilitate efficient text preprocessing.

# SciPy is pinned, presumably because Gensim 4.3.2 imports scipy.linalg.triu,
# which SciPy 1.13 removed.
!pip install --upgrade scipy==1.11.4
!pip install gensim==4.3.2 nltk wordcloud matplotlib seaborn pandas numpy scikit-learn
!pip install --upgrade setuptools

# Data handling and plotting
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
import seaborn as sns
from wordcloud import WordCloud

# Silence library warnings to keep notebook output readable
import warnings
warnings.filterwarnings('ignore')

# Gensim models and preprocessing filters
from gensim import corpora, models, similarities
from gensim.models import Word2Vec, LdaModel, TfidfModel, CoherenceModel
from gensim.parsing.preprocessing import preprocess_string, strip_tags, strip_punctuation, strip_multiple_whitespaces, strip_numeric, remove_stopwords, strip_short

# NLTK tokenizer models and stopword lists
import nltk
nltk.download('punkt', quiet=True)
nltk.download('punkt_tab', quiet=True)  # required by word_tokenize in NLTK 3.9+
nltk.download('stopwords', quiet=True)
from nltk.corpus import stopwords
from nltk.tokenize import word_tokenize

Modular NLP Pipeline Class for End-to-End Text Analysis

We encapsulate the entire NLP process within a class named AdvancedGensimPipeline, which streamlines each phase from raw text ingestion to model training and evaluation. This modular design promotes reusability and clarity.

Corpus Creation and Text Preprocessing

The pipeline starts by generating a diverse sample corpus covering topics like data science, cloud computing, cybersecurity, and machine learning. Text preprocessing then applies a series of filters that convert all text to lowercase and remove HTML tags, punctuation, numbers, stopwords, and short tokens. This ensures the input is normalized for downstream modeling.

Building Dictionary and Corpus Representations

Next, a Gensim dictionary is constructed from the processed documents, filtering out extremely rare and overly common terms to improve model quality. The corpus is then represented as a bag-of-words (BoW) format, which serves as input for topic modeling and similarity computations.

Training Word Embeddings with Word2Vec

Word2Vec is trained on the cleaned corpus to generate dense vector representations of words that capture semantic relationships. The model uses a vector size of 100 and a context window of 5, and trains for 50 epochs.

Exploring Word Similarities and Analogies

Using the trained Word2Vec model, the pipeline demonstrates similarity queries for terms like “machine,” “data,” and “learning,” revealing closely related words. It also attempts analogy reasoning, such as “computer” + “data” – “machine,” to showcase the model’s semantic understanding.

Latent Dirichlet Allocation (LDA) for Topic Modeling

The LDA model is trained to uncover latent topics within the corpus. We specify five topics by default, with parameters tuned for stability and interpretability. The pipeline evaluates topic coherence using the widely accepted c_v metric, providing a quantitative measure of topic quality.

Visualizing Topics and Document Distributions

To make the results more interpretable, the pipeline generates heatmaps illustrating document-topic distributions and word clouds highlighting the most significant terms per topic. These visualizations help users intuitively grasp the thematic structure of the dataset.

TF-IDF Model and Document Similarity

A TF-IDF model is created to quantify the importance of words in documents relative to the corpus. Using this, a similarity index is built to identify documents closely related to a given query document, facilitating efficient information retrieval.

Advanced Topic Analysis and Document Classification

The pipeline performs an in-depth analysis of dominant topics across documents, visualizing their prevalence. It also includes a classification demo where a new document is processed and assigned topic probabilities, alongside identifying the most similar existing document based on TF-IDF similarity.

Complete Pipeline Execution

The run_complete_pipeline method orchestrates all steps sequentially, from corpus creation to model training, evaluation, visualization, and classification. This method returns key outputs such as coherence scores, topic distributions, and trained models for further use.

Evaluating Topic Models Across Different Topic Counts

To choose the number of topics, the compare_topic_models function trains multiple LDA models with varying topic counts (e.g., 3, 5, 7, 10). It computes coherence and perplexity scores for each and plots them. Higher coherence and lower perplexity are generally better, so the plots help pick a configuration that balances interpretability and fit.

Implementing Semantic Search for Document Retrieval

The semantic_search_engine function lets users query the corpus with free text. It preprocesses the input query, converts it into TF-IDF space, and ranks documents by similarity score. Because ranking happens in TF-IDF space, matches depend on vocabulary shared between the query and the documents. The top matching documents are displayed with brief content previews, demonstrating practical use in information retrieval scenarios.

Bringing It All Together: Main Execution Block

The main script initializes the pipeline, runs the full workflow, compares topic models, and performs a semantic search with a query related to artificial intelligence and deep learning. It concludes by printing summary statistics such as the coherence score, vocabulary size, and embedding dimensions, which serve as a quick check that every stage ran before further experimentation.

Summary

This NLP framework offers an extensible solution for text analysis tasks. By integrating preprocessing, topic modeling, word embeddings, similarity analysis, and search, it gives researchers, data scientists, and developers a single toolkit to build on. The visualizations and evaluation metrics help keep the results interpretable. Because each stage can be adapted to other datasets and domains, the pipeline serves as a starting point for more advanced NLP projects and text analytics work.