Optimizing Large Language Model Deployment for Diverse Customer Queries

Large language models (LLMs) have reshaped natural language processing, enabling sophisticated interactions across many applications. Deploying them effectively is still difficult, because models differ widely in capability and cost. For instance, a customer support chatbot must handle a wide spectrum of inquiries. Straightforward questions like “What are your store hours?” can be answered accurately by smaller, budget-friendly models. Intricate requests, such as in-depth product comparisons that require advanced reasoning and planning, call for more powerful but costlier LLMs.

Balancing Efficiency and Expense in Query Handling

This example highlights a core dilemma: how to strike an optimal balance between performance quality and cost efficiency when dealing with queries of varying complexity. The goal is to allocate resources intelligently, ensuring that simpler tasks do not consume excessive computational power, while more demanding questions receive the necessary attention from high-capacity models.
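One way to make this trade-off concrete is to score each candidate model by its expected answer quality minus a weighted cost, then pick the highest score. The sketch below is only an illustration under assumed numbers: `ModelOption`, `pick_model`, the `cost_weight` parameter, and all cost and quality values are hypothetical and do not come from any measured system.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_query: float    # hypothetical cost, arbitrary units
    expected_quality: float  # hypothetical quality estimate in [0, 1] for one query


def pick_model(options: List[ModelOption], cost_weight: float) -> ModelOption:
    """Return the option that maximizes expected_quality - cost_weight * cost_per_query."""
    return max(
        options,
        key=lambda m: m.expected_quality - cost_weight * m.cost_per_query,
    )


# Assumed estimates for a simple query: both models answer well, so the cheap one wins.
simple_query = [
    ModelOption("small", cost_per_query=1.0, expected_quality=0.95),
    ModelOption("large", cost_per_query=10.0, expected_quality=0.97),
]

# Assumed estimates for a complex query: the small model is much less reliable.
complex_query = [
    ModelOption("small", cost_per_query=1.0, expected_quality=0.40),
    ModelOption("large", cost_per_query=10.0, expected_quality=0.90),
]

print(pick_model(simple_query, cost_weight=0.02).name)
print(pick_model(complex_query, cost_weight=0.02).name)
```

Raising `cost_weight` pushes more traffic toward the cheaper model, and lowering it favors quality. The hard part in practice is obtaining the per-query quality estimates, which is exactly the routing problem.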

Limitations of Current LLM Routing Strategies

Most existing solutions approach the problem of routing queries to appropriate LLMs through supervised learning frameworks. These methods rely on having comprehensive datasets that map each query to its ideal model, assuming full knowledge of the best query-model pairings. However, this approach encounters two significant obstacles:

- Data Collection Costs: Generating labeled datasets requires running every query through multiple models to identify the optimal match, which is both time-consuming and expensive.

- Adaptability Issues: User queries evolve over time, and static supervised models struggle to adjust to these shifting patterns, leading to decreased effectiveness in real-world applications.
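The data collection cost described above can be sketched as a labeling loop that sends every query to every candidate model, so the number of model calls grows as queries times models. This is a minimal illustration, not a description of any specific published pipeline: `build_routing_labels`, the stub models, and the `is_acceptable` check are all hypothetical stand-ins.

```python
from typing import Callable, Dict, List, Tuple

# (name, cost per call, answer function); all hypothetical
Model = Tuple[str, float, Callable[[str], str]]


def build_routing_labels(
    queries: List[str],
    models: List[Model],
    is_acceptable: Callable[[str, str], bool],
) -> Tuple[Dict[str, str], int, float]:
    """Label each query with the cheapest model whose answer is acceptable.

    Every query is run through every model, which is what makes labeling costly.
    Returns (labels, total model calls, total spend).
    """
    labels: Dict[str, str] = {}
    calls = 0
    spend = 0.0
    for query in queries:
        passing = []
        for name, cost, answer in models:
            calls += 1
            spend += cost
            if is_acceptable(query, answer(query)):
                passing.append((cost, name))
        if passing:
            labels[query] = min(passing)[1]  # cheapest acceptable model
        else:
            # No model passed: fall back to the most expensive one.
            labels[query] = max(models, key=lambda m: m[1])[0]
    return labels, calls, spend


# Stub models: the small one only "handles" short queries (an assumption for the demo).
def small_model(query: str) -> str:
    return "ok" if len(query.split()) <= 5 else ""


def large_model(query: str) -> str:
    return "ok"


demo_models: List[Model] = [("small", 1.0, small_model), ("large", 10.0, large_model)]
demo_queries = [
    "What are your store hours?",
    "Compare plan A and plan B for a family of four on a budget",
]
labels, calls, spend = build_routing_labels(
    demo_queries, demo_models, lambda q, a: a != ""
)
```

Even in this toy setup, two queries and two models already require four model calls, and the labels become stale as soon as the query distribution shifts.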

Challenges in Real-World Implementation

Traditional supervised learning techniques, dependent on exhaustive mappings between queries and models, prove impractical for dynamic environments where query types and distributions continuously change. Without the ability to adapt, these systems risk inefficiency and increased operational costs, undermining the benefits of deploying multiple LLMs.

Emerging Directions and Considerations

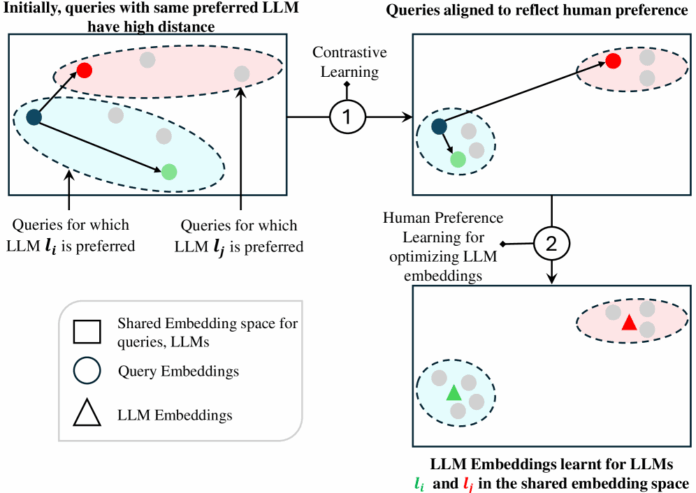

To address these challenges, research increasingly focuses on adaptive routing mechanisms that use reinforcement learning or unsupervised methods to assign queries dynamically to the most suitable LLM. For example, recent studies report that hybrid approaches combining lightweight classifiers with feedback loops can reduce costs by up to 30% while maintaining high accuracy in customer service scenarios.
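As a rough illustration of what a lightweight classifier combined with a feedback loop could look like, the sketch below uses an epsilon-greedy bandit that keeps a running mean reward per query bucket and model. It is not the method of any particular study: `EpsilonGreedyRouter`, `bucket_of`, the word-count rule standing in for a classifier, and the placeholder reward value are all assumptions made for the example.

```python
import random
from collections import defaultdict
from typing import Iterable


class EpsilonGreedyRouter:
    """Epsilon-greedy routing over models, with separate statistics per query bucket."""

    def __init__(self, model_names: Iterable[str], epsilon: float = 0.1, seed: int = 0):
        self.model_names = list(model_names)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.mean = defaultdict(float)  # running mean reward per (bucket, model)
        self.count = defaultdict(int)   # number of updates per (bucket, model)

    def choose(self, bucket: str) -> str:
        # Explore with probability epsilon, otherwise exploit the best-known model.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.model_names)
        return max(self.model_names, key=lambda m: self.mean[(bucket, m)])

    def update(self, bucket: str, model: str, reward: float) -> None:
        # Incremental running-mean update from observed feedback.
        key = (bucket, model)
        self.count[key] += 1
        self.mean[key] += (reward - self.mean[key]) / self.count[key]


def bucket_of(query: str) -> str:
    """Stand-in for a lightweight classifier: a simple word-count threshold (assumption)."""
    return "simple" if len(query.split()) <= 6 else "complex"


router = EpsilonGreedyRouter(["small", "large"], epsilon=0.1)
query = "What are your store hours?"
bucket = bucket_of(query)
model = router.choose(bucket)
# In a real system the reward would come from user feedback or an evaluator,
# for example answer quality minus a cost penalty; 0.9 is a placeholder.
router.update(bucket, model, reward=0.9)
```

Because the statistics are updated on every interaction, a router of this kind needs no exhaustive labeled dataset and can follow shifts in the query mix, at the price of occasionally sending a query to a suboptimal model while exploring.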

As LLM technology continues to advance, integrating scalable, cost-aware routing strategies will be essential for maximizing both user satisfaction and operational efficiency in applications ranging from virtual assistants to automated help desks.