Building a Hierarchical Reasoning Agent with a Lightweight Hugging Face Model

This guide shows how to emulate the core ideas of the Hierarchical Reasoning Model (HRM) with a freely available Hugging Face model that runs locally. We build a small, methodical reasoning agent that decomposes a complex problem into subgoals, solves each subgoal with generated Python code, critiques the results, and synthesizes a final response. The aim is to illustrate how hierarchical planning and execution can improve the reasoning of a compact model, in a brain-inspired workflow that needs neither massive models nor costly APIs.

Setting Up the Environment and Loading the Model

First, we install essential libraries and load the Qwen2.5-1.5B-Instruct model from Hugging Face. To optimize performance, the data type is selected based on GPU availability, enabling efficient execution in environments like Google Colab.

!pip install -q -U transformers accelerate bitsandbytes rich

import torch

from transformers import AutoTokenizer, AutoModelForCausalLM, pipeline

MODEL_NAME = "Qwen/Qwen2.5-1.5B-Instruct"

DTYPE = torch.bfloat16 if torch.cuda.is_available() else torch.float32

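Next, we initialize the tokenizer and model, loading the model in 4-bit precision to reduce memory usage when a CUDA GPU is available. bitsandbytes quantization generally expects a GPU, so on CPU the model is loaded without quantization. Wrapping these components in a text-generation pipeline allows for straightforward interaction within Colab or similar environments.

from transformers import BitsAndBytesConfig

tokenizer = AutoTokenizer.from_pretrained(MODEL_NAME, use_fast=True)

# Pass 4-bit settings through quantization_config; the bare load_in_4bit
# keyword on from_pretrained is deprecated in recent transformers releases.
quant_config = (
    BitsAndBytesConfig(load_in_4bit=True, bnb_4bit_compute_dtype=DTYPE)
    if torch.cuda.is_available()
    else None
)

model = AutoModelForCausalLM.from_pretrained(
    MODEL_NAME,
    device_map="auto",
    torch_dtype=DTYPE,
    quantization_config=quant_config,
)

generator = pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer,
    return_full_text=False,
)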
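As a rough illustration of why 4-bit loading helps, the sketch below estimates the memory taken by the weights alone at different precisions. The figure of 1.5 billion parameters is an approximation read off the model name, and the helper name `weight_memory_gb` is hypothetical. Activations, the KV cache, and quantization overhead are ignored, so real usage will be higher.

```python
# Back-of-the-envelope estimate of weight memory at different precisions.
# Assumption: ~1.5e9 parameters (rounded from the model name); overheads are ignored.

def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Return the approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

NUM_PARAMS = 1.5e9

for label, bits in [("float32", 32), ("bfloat16", 16), ("4-bit", 4)]:
    print(f"{label:>8}: ~{weight_memory_gb(NUM_PARAMS, bits):.2f} GB")
```

Under these assumptions the weights shrink from roughly 6 GB in float32 to under 1 GB in 4-bit.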

Core Utility Functions for Interaction and Execution

We define helper functions to facilitate communication with the model and to handle its outputs effectively. The chat function sends prompts with optional system instructions and controls sampling parameters, while extract_json reliably parses JSON data from the model’s responses, even when wrapped in code blocks or additional text.

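import re, json

def chat(prompt: str, system: str = "", max_new_tokens: int = 512, temperature: float = 0.3) -> str:
    """Send one user prompt (plus an optional system message) and return the reply text."""
    messages = []
    if system:
        messages.append({"role": "system", "content": system})
    messages.append({"role": "user", "content": prompt})
    inputs = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)
    # Sample only when temperature > 0; otherwise decode greedily and omit sampling arguments.
    if temperature > 0:
        sampling = {"do_sample": True, "temperature": temperature, "top_p": 0.9}
    else:
        sampling = {"do_sample": False}
    output = generator(inputs, max_new_tokens=max_new_tokens, **sampling)
    return output[0]["generated_text"].strip()

def extract_json(text: str) -> dict:
    """Best-effort extraction of a JSON object from a model reply; returns {} on failure."""
    # Prefer an object that runs to the end of the reply, then fall back to the shortest {...} span.
    match = re.search(r"\{[\s\S]*\}$", text.strip())
    if not match:
        match = re.search(r"\{[\s\S]*?\}", text)
    try:
        return json.loads(match.group(0)) if match else {}
    except Exception:
        # Fallback: strip Markdown code fences and parse what remains.
        cleaned = re.sub(r"^```.*?\n|\n```$", "", text, flags=re.S)
        try:
            return json.loads(cleaned)
        except Exception:
            return {}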
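Regex-based extraction can misfire when a reply contains nested braces or trailing commentary. As a hedged alternative, not the approach used in this guide, the sketch below scans for the first position where the standard library's `json.JSONDecoder.raw_decode` succeeds. The function name `first_json_object` and the sample reply are illustrative assumptions.

```python
import json

def first_json_object(text: str) -> dict:
    """Return the first decodable JSON object found in text, or {} if none exists."""
    decoder = json.JSONDecoder()
    for start, char in enumerate(text):
        if char != "{":
            continue
        try:
            # raw_decode parses one JSON value starting at `start` and ignores what follows.
            value, _ = decoder.raw_decode(text, start)
        except json.JSONDecodeError:
            continue  # not valid JSON from this brace; try the next one
        if isinstance(value, dict):
            return value
    return {}

# Illustrative reply with prose before and after the JSON payload.
reply = (
    'Sure, here is the plan: {"subgoals": ["parse grid", "invert cells"], '
    '"final_format": "Answer: <grid>"} Hope this helps {not json}.'
)
print(first_json_object(reply))
print(first_json_object("no json here"))
```

Because the decoder stops at the end of the first complete object, trailing text after the JSON does not need to be stripped beforehand.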

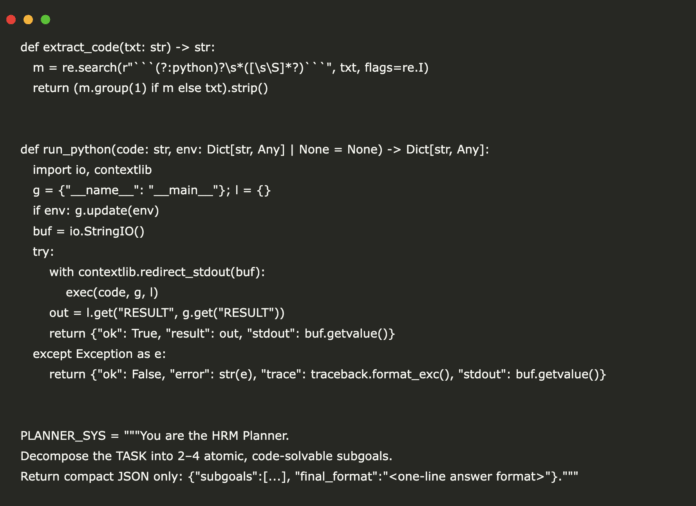

Extracting and Running Python Code Safely

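To let the agent solve subgoals programmatically, we extract Python code snippets from the model’s output and execute them with exec, capturing the result, any printed output, and any errors. Note that exec runs the generated code inside the current process with no sandboxing, so this setup is appropriate only in a disposable environment such as a Colab session.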

import traceback, io, contextlib

def extract_code(text: str) -> str:
    """Return the body of the first fenced code block, or the whole reply if none is found."""
    match = re.search(r"```(?:python)?\s*([\s\S]*?)```", text, flags=re.I)
    return match.group(1).strip() if match else text.strip()

def run_python(code: str, env: dict = None) -> dict:
    """Execute code with exec and report RESULT, captured stdout, and any error."""
    # A single namespace serves as both globals and locals, so functions and
    # comprehensions in the generated code can see names defined at its top level.
    namespace = {"__name__": "__main__"}
    if env:
        namespace.update(env)
    buffer = io.StringIO()
    try:
        with contextlib.redirect_stdout(buffer):
            exec(code, namespace)
        return {"ok": True, "result": namespace.get("RESULT"), "stdout": buffer.getvalue()}
    except Exception as e:
        return {"ok": False, "error": str(e), "trace": traceback.format_exc(), "stdout": buffer.getvalue()}
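Since in-process exec offers no isolation, one option is to run each snippet in a separate interpreter with a timeout. The sketch below is an assumption-laden alternative rather than part of the original agent: `run_in_subprocess`, the `__RESULT__` marker, and the 5-second default are all hypothetical choices. It also requires RESULT to be JSON-serializable (other values are stringified), and it isolates crashes and infinite loops but is not a security sandbox, because the child process can still reach the filesystem and network.

```python
import json
import subprocess
import sys

_MARKER = "__RESULT__"  # hypothetical sentinel separating user output from RESULT
# Code appended to every snippet: prints RESULT as JSON after the marker.
_EPILOGUE = (
    "\nimport json as _json\n"
    f"print('\\n{_MARKER}' + _json.dumps(globals().get('RESULT'), default=str))\n"
)

def run_in_subprocess(code: str, timeout_s: float = 5.0) -> dict:
    """Run code in a fresh Python process and return RESULT, stdout, and status."""
    try:
        proc = subprocess.run(
            [sys.executable, "-c", code + _EPILOGUE],
            capture_output=True, text=True, timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        return {"ok": False, "error": f"timed out after {timeout_s}s", "stdout": ""}
    if proc.returncode != 0:
        return {"ok": False, "error": proc.stderr.strip(), "stdout": proc.stdout}
    # Everything before the last marker is the snippet's own printed output.
    head, sep, payload = proc.stdout.rpartition("\n" + _MARKER)
    if not sep:
        return {"ok": False, "error": "no result marker found", "stdout": proc.stdout}
    return {"ok": True, "result": json.loads(payload), "stdout": head}

print(run_in_subprocess("print('hi')\nRESULT = sum(range(5))"))
print(run_in_subprocess("while True:\n    pass", timeout_s=0.5)["error"])
```

The returned dictionary mirrors the keys used by run_python, so a helper like this could be swapped in where stronger isolation matters more than speed.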

Defining Role-Based System Prompts for Hierarchical Reasoning

We establish four distinct roles to guide the model through the reasoning process:

- Planner: Breaks down the main task into 2-4 atomic, code-solvable subgoals, returning a concise JSON structure.

- Solver: Generates deterministic Python code snippets to solve each subgoal, assigning the answer to a variable named RESULT.

- Critic: Reviews subgoal outcomes and decides whether the final answer is ready or if revisions are necessary.

- Synthesizer: Produces the final answer in the specified format, omitting intermediate steps.

PLANNER_PROMPT = """
You are the HRM Planner.
Decompose the TASK into 2-4 atomic, code-solvable subgoals.
Return compact JSON only: {"subgoals":[...], "final_format":""}.
"""

SOLVER_PROMPT = """
You are the HRM Solver.
Given SUBGOAL and CONTEXT vars, output a single Python snippet.
Rules:
- Compute deterministically.
- Set a variable RESULT to the answer.
- Keep code short; stdlib only.
Return only a Python code block.
"""

CRITIC_PROMPT = """
You are the HRM Critic.
Given TASK and LOGS (subgoal results), decide if final answer is ready.
Return JSON only: {"action":"submit"|"revise","critique":"...", "fix_hint":""}.
"""

SYNTHESIZER_PROMPT = """
You are the HRM Synthesizer.
Given TASK, LOGS, and final_format, output only the final answer (no steps).
Follow final_format exactly.
"""

Implementing the Hierarchical Reasoning Workflow

The agent operates in iterative rounds, performing the following steps:

- Planning: Decompose the main task into subgoals.

- Solving: Generate and execute Python code for each subgoal, storing results.

- Critiquing: Evaluate subgoal results to determine if the final answer is acceptable.

- Refining: If necessary, adjust subgoals based on critique feedback.

- Synthesizing: Produce a clean final answer in the requested format.
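The round structure listed above can be sketched in isolation, with stand-in callables replacing the model-backed roles. Everything in this block is hypothetical scaffolding (`run_rounds` and the `stub_*` functions are not part of the agent); it only illustrates the control flow, where a "revise" verdict triggers refinement and a "submit" verdict ends the loop early.

```python
from typing import Callable

def run_rounds(task: str, plan: Callable, solve: Callable, critique: Callable,
               refine: Callable, synthesize: Callable, budget: int = 2) -> dict:
    """Plan once, then solve/critique/refine for up to `budget` rounds and synthesize."""
    current_plan = plan(task)
    trace = []
    for round_id in range(1, budget + 1):
        logs = [solve(sg) for sg in current_plan["subgoals"]]
        verdict = critique(task, logs)
        trace.append({"round": round_id, "plan": current_plan, "logs": logs, "verdict": verdict})
        if verdict["action"] == "submit":
            break
        # Fall back to the previous plan if refinement returns nothing usable.
        current_plan = refine(task, logs) or current_plan
    return {"final": synthesize(task, trace[-1]["logs"]), "trace": trace}

# Stand-in roles: the first plan yields a wrong result, the refined plan a correct one.
def stub_plan(task):
    return {"subgoals": ["compute 6 * 7 (deliberately wrong)"]}

def stub_solve(subgoal):
    return {"subgoal": subgoal, "result": 41 if "wrong" in subgoal else 42}

def stub_critique(task, logs):
    ok = all(log["result"] == 42 for log in logs)
    return {"action": "submit" if ok else "revise"}

def stub_refine(task, logs):
    return {"subgoals": ["compute 6 * 7"]}

def stub_synthesize(task, logs):
    return f"Answer: {logs[-1]['result']}"

outcome = run_rounds("What is 6 * 7?", stub_plan, stub_solve,
                     stub_critique, stub_refine, stub_synthesize)
print(outcome["final"], "after", len(outcome["trace"]), "rounds")
```

With these stubs the first round is rejected and the second is accepted, so the trace holds two rounds.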

def plan(task: str) -> dict:
    prompt = f"TASK:\n{task}\nReturn JSON only."
    return extract_json(chat(prompt, PLANNER_PROMPT, temperature=0.2, max_new_tokens=300))

def solve_subgoal(subgoal: str, context: dict) -> dict:
    # The solver sees only the names of the context variables, not their values.
    prompt = f"SUBGOAL:\n{subgoal}\nCONTEXT vars: {list(context.keys())}\nReturn Python code only."
    code = extract_code(chat(prompt, SOLVER_PROMPT, temperature=0.2, max_new_tokens=400))
    result = run_python(code, env=context)
    return {"subgoal": subgoal, "code": code, "run": result}

def critic(task: str, logs: list) -> dict:
    summary = [{"subgoal": log["subgoal"], "result": log["run"].get("result"), "ok": log["run"]["ok"]} for log in logs]
    # default=str keeps json.dumps from failing on results that are not JSON-serializable.
    prompt = f"TASK:\n{task}\nLOGS:\n{json.dumps(summary, ensure_ascii=False, indent=2, default=str)}\nReturn JSON only."
    output = chat(prompt, CRITIC_PROMPT, temperature=0.1, max_new_tokens=250)
    return extract_json(output)

def refine(task: str, logs: list) -> dict:
    system = "Refine subgoals minimally to fix issues. Return same JSON schema as planner."
    prompt = f"TASK:\n{task}\nLOGS:\n{json.dumps(logs, ensure_ascii=False, default=str)}\nReturn JSON only."
    output = chat(prompt, system, temperature=0.2, max_new_tokens=250)
    refined = extract_json(output)
    # An empty dict signals that the refinement produced no usable subgoals.
    return refined if refined.get("subgoals") else {}

def synthesize(task: str, logs: list, final_format: str) -> str:
    packed = [{"subgoal": log["subgoal"], "result": log["run"].get("result")} for log in logs]
    prompt = f"TASK:\n{task}\nLOGS:\n{json.dumps(packed, ensure_ascii=False, default=str)}\nfinal_format: {final_format}\nOnly the final answer."
    return chat(prompt, SYNTHESIZER_PROMPT, temperature=0.0, max_new_tokens=120).strip()
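A small model will not always return a plan that matches the schema the planner prompt asks for. The sketch below shows one way such a reply might be normalized before the agent loop consumes it. The helper `normalize_plan` is hypothetical and not part of the original agent; the cap of four subgoals and the "Answer: " default simply mirror values that appear elsewhere in this guide.

```python
def normalize_plan(raw, max_subgoals: int = 4, default_format: str = "Answer: ") -> dict:
    """Coerce a parsed planner reply into {"subgoals": [str, ...], "final_format": str}."""
    data = raw if isinstance(raw, dict) else {}
    subgoals = data.get("subgoals")
    if not isinstance(subgoals, list):
        subgoals = []
    # Convert entries to stripped strings, drop empty ones, and cap the count.
    cleaned = [str(sg).strip() for sg in subgoals if str(sg).strip()][:max_subgoals]
    final_format = data.get("final_format")
    if not isinstance(final_format, str) or not final_format.strip():
        final_format = default_format
    return {"subgoals": cleaned, "final_format": final_format}

print(normalize_plan({"subgoals": [" parse input ", "", "apply rule"], "final_format": ""}))
print(normalize_plan("not a dict"))
```

A caller could check whether the normalized subgoal list is empty and re-prompt the planner instead of entering the loop with nothing to solve.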

def hrm_agent(task: str, context: dict = None, budget: int = 2) -> dict:
    ctx = dict(context or {})
    trace = []
    plan_json = plan(task)
    for round_id in range(1, budget + 1):
        # Solve every subgoal of the current plan against the context from earlier rounds.
        logs = [solve_subgoal(sg, ctx) for sg in plan_json.get("subgoals", [])]
        # Store each result under a generated key so later rounds can reuse it.
        for log in logs:
            ctx_key = f"g{len(trace)}_{abs(hash(log['subgoal'])) % 9999}"
            ctx[ctx_key] = log["run"].get("result")
        verdict = critic(task, logs)
        trace.append({"round": round_id, "plan": plan_json, "logs": logs, "verdict": verdict})
        if verdict.get("action") == "submit":
            break
        # Keep the previous plan if refinement yields no usable subgoals.
        plan_json = refine(task, logs) or plan_json
    final_answer = synthesize(task, trace[-1]["logs"], plan_json.get("final_format", "Answer: "))
    return {"final": final_answer, "trace": trace}
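One detail worth knowing about the context keys: Python's built-in hash() for strings is salted per interpreter process unless PYTHONHASHSEED is fixed, so keys built from it differ between runs. If reproducible keys matter, for example when comparing traces, a digest from hashlib is one option. The helper name `stable_ctx_key` and the 8-character truncation are illustrative assumptions, not part of the agent.

```python
import hashlib

def stable_ctx_key(round_index: int, subgoal: str) -> str:
    """Build a context key that is identical across interpreter runs."""
    # SHA-1 is used only as a stable fingerprint here, not for security.
    digest = hashlib.sha1(subgoal.encode("utf-8")).hexdigest()[:8]
    return f"g{round_index}_{digest}"

key_a = stable_ctx_key(0, "Invert every cell of TEST")
key_b = stable_ctx_key(0, "Count the rows of TEST")
print(key_a, key_b)
```

The resulting keys are valid Python identifiers, so generated code can refer to them directly as variable names.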

Demonstration: Applying the HRM Agent to Sample Tasks

To validate the agent’s capabilities, we test it on two distinct problems:

- ARC-Style Transformation: The agent infers a transformation rule from training examples and applies it to a test input grid.

- Word Math Problem: The agent solves a quantitative reasoning problem involving volume loss and replenishment.

import textwrap

from rich import print as rprint

ARC_TASK = textwrap.dedent("""
    Infer the transformation rule from train examples and apply to test.
    Return exactly: "Answer: <grid>", where <grid> is a Python list of lists of ints.
""").strip()

ARC_DATA = {
    "train": [
        {"inp": [[0, 0], [1, 0]], "out": [[1, 1], [0, 1]]},
        {"inp": [[0, 1], [0, 0]], "out": [[1, 0], [1, 1]]},
    ],
    "test": [[0, 0], [0, 1]],
}

result1 = hrm_agent(ARC_TASK, context={"TRAIN": ARC_DATA["train"], "TEST": ARC_DATA["test"]}, budget=2)
rprint("\n[bold]Demo 1 - ARC-Style Transformation[/bold]")
rprint(result1["final"])

WM_TASK = "A tank contains 1200 liters. It leaks 2% of its volume every hour for 3 hours, then is refilled with 150 liters. Return exactly: 'Answer: '."

result2 = hrm_agent(WM_TASK, context={}, budget=2)
rprint("\n[bold]Demo 2 - Word Math Problem[/bold]")
rprint(result2["final"])

rprint("\n[dim]Number of reasoning rounds executed (Demo 1):[/dim]", len(result1["trace"]))
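Because a small model's output can be wrong, it helps to have reference values to compare against. The sketch below computes them directly, under two stated assumptions: that the ARC-style rule is a cell-wise flip of 0 and 1 (which is consistent with both training pairs), and that the 2% leak compounds on the current volume each hour. A non-compounding reading, 2% of the original 1200 liters per hour, would instead give 1278 liters.

```python
# Demo 1 reference: every training pair is consistent with flipping each cell (0 <-> 1).
def invert(grid):
    return [[1 - cell for cell in row] for row in grid]

train = [
    {"inp": [[0, 0], [1, 0]], "out": [[1, 1], [0, 1]]},
    {"inp": [[0, 1], [0, 0]], "out": [[1, 0], [1, 1]]},
]
# Confirm the assumed rule reproduces both training outputs before applying it.
assert all(invert(example["inp"]) == example["out"] for example in train)
arc_expected = invert([[0, 0], [0, 1]])

# Demo 2 reference: assumes the leak removes 2% of the current volume each hour.
volume = 1200.0
for _ in range(3):
    volume *= 0.98
tank_expected = round(volume + 150, 4)

print("ARC reference:", arc_expected)
print("Tank reference (liters):", tank_expected)
```

Under these assumptions the reference values are [[1, 1], [1, 0]] for the grid and about 1279.43 liters for the tank.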

Final Thoughts: Unlocking Advanced Reasoning with Compact Models

This project illustrates how a hierarchical reasoning framework can help smaller, open-source models work through multi-step tasks. By combining planning, solving, critiquing, and synthesizing stages, we enable a free Hugging Face model to tackle challenging problems without large-scale infrastructure or expensive APIs.

Such brain-inspired architectures, combined with accessible tools, open new avenues for researchers and enthusiasts to experiment with reasoning benchmarks and develop their own variations. This hands-on exploration shows that multi-step reasoning workflows are within reach for anyone eager to learn and iterate.