Revolutionizing AI Memory: How Memory-R1 Empowers Large Language Models with Reinforcement Learning

Large language models (LLMs) have become the driving force behind numerous AI innovations, powering applications such as conversational agents, automated coding helpers, knowledge retrieval, and creative content generation. Despite their impressive capabilities, these models inherently lack persistent memory: each interaction is processed independently, without awareness of prior exchanges. Their limited context windows restrict the ability to maintain continuity over extended dialogues or multi-session tasks, often resulting in fragmented understanding and reasoning challenges when handling complex, evolving information.

Traditional approaches like retrieval-augmented generation (RAG) attempt to mitigate this by appending past data to prompts. However, this method frequently introduces excessive irrelevant information, overwhelming the model and diluting critical facts. To address these limitations, a collaborative research effort from the University of Munich, Technical University of Munich, University of Cambridge, and University of Hong Kong has developed Memory-R1, a novel framework that enables LLM agents to intelligently manage and leverage external memory through reinforcement learning.

Understanding the Memory Challenge in LLMs

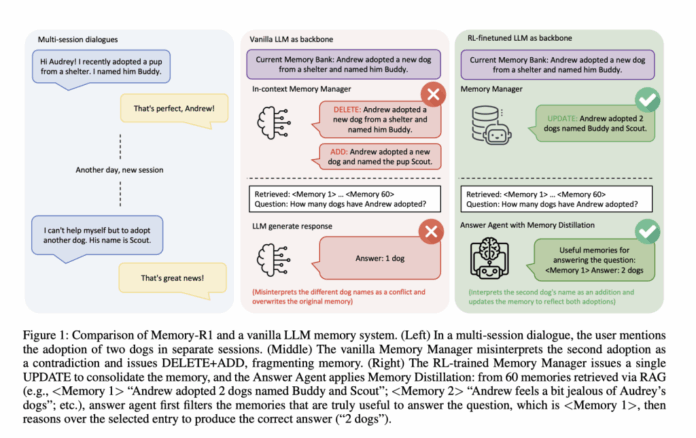

Consider a scenario where a user engages in multiple sessions with an AI assistant. In the initial session, the user states, “I adopted a dog named Max.” Later, they add, “I also adopted a cat named Whiskers.” A conventional memory system might mistakenly overwrite the first fact with the second or treat them as conflicting, leading to loss of valuable information. This inability to consolidate rather than replace knowledge results in incoherent and inconsistent user profiles over time.

While RAG systems retrieve relevant data, they lack mechanisms to filter out noise, causing the model to be distracted by irrelevant or outdated entries. In contrast, humans naturally retrieve a broad range of information but selectively focus on what is pertinent. Most existing AI memory architectures rely on fixed, rule-based heuristics to decide what to remember, which limits adaptability and learning from experience.

Introducing Memory-R1: A Dynamic Memory Management Framework

Memory-R1 innovates by incorporating two reinforcement learning (RL)-trained agents that collaboratively handle memory operations and answer generation:

- Memory Manager: After each user interaction, this agent evaluates the external memory and decides whether to add new facts, update existing entries, delete obsolete information, or take no action (NOOP), thereby maintaining a coherent and evolving knowledge base.

- Answer Agent: When responding to queries, this agent retrieves up to 60 candidate memories, then distills this set to the most relevant subset before reasoning and generating an accurate answer.

Both agents are fine-tuned using reinforcement learning algorithms such as Proximal Policy Optimization (PPO) and Group Relative Policy Optimization (GRPO). Crucially, they learn solely from outcome-based rewards (specifically, the correctness of the final answers), eliminating the need for labor-intensive manual annotations of memory operations.
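To make the "group relative" part of GRPO concrete, here is a minimal sketch of how outcome rewards for a group of sampled responses can be turned into advantages. It assumes the commonly described formulation (each reward minus the group mean, divided by the group standard deviation); the function name `group_relative_advantages`, the `eps` stabilizer, and the example rewards are illustrative and not taken from the Memory-R1 code.

```python
from statistics import mean, pstdev

def group_relative_advantages(rewards, eps=1e-8):
    """Score each sampled response relative to its own group.

    Assumption: advantage = (reward - group mean) / (group std + eps).
    Real implementations may differ in the std estimator or clipping.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population standard deviation of the group
    return [(r - mu) / (sigma + eps) for r in rewards]

# Hypothetical group: four sampled responses to one question,
# rewarded 1.0 when the final answer was correct and 0.0 otherwise.
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
```

Responses that beat the group average receive a positive advantage and the rest a negative one, so no separately trained value model is needed to provide a baseline.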

How the Memory Manager Enhances Knowledge Retention

Following each dialogue turn, the Memory Manager extracts salient facts and cross-references them with existing memory entries. It then selects one of four actions:

- ADD: Incorporate new information absent from memory.

- UPDATE: Integrate additional details into existing memories to refine or expand them.

- DELETE: Remove outdated or conflicting data.

- NOOP: Maintain the current memory state if no changes are warranted.

For example, if a user initially mentions owning a dog named Max and later adds that they have a cat named Whiskers, a naive system might discard the first fact. Memory-R1’s Memory Manager, however, learns to update the memory to reflect both pets, preserving a comprehensive and accurate user profile.
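The four operations can be pictured as edits to a small keyed store. The sketch below is only an illustration of the Max and Whiskers example: the `Op` enum, the `MemoryBank` class, and its `apply` method are hypothetical names, and in Memory-R1 the choice of operation comes from the trained Memory Manager, not from hand-written calls like these.

```python
from dataclasses import dataclass, field
from enum import Enum

class Op(Enum):
    ADD = "ADD"
    UPDATE = "UPDATE"
    DELETE = "DELETE"
    NOOP = "NOOP"

@dataclass
class MemoryBank:
    """Hypothetical external memory: integer ids mapped to fact strings."""
    entries: dict = field(default_factory=dict)
    next_id: int = 0

    def apply(self, op, text="", entry_id=None):
        if op is Op.ADD:
            # Store a fact that is not yet in memory.
            self.entries[self.next_id] = text
            self.next_id += 1
        elif op is Op.UPDATE:
            # Replace an existing entry with a merged, richer version.
            if entry_id not in self.entries:
                raise KeyError(f"no memory entry with id {entry_id}")
            self.entries[entry_id] = text
        elif op is Op.DELETE:
            # Drop an outdated or contradicted entry.
            self.entries.pop(entry_id, None)
        # Op.NOOP: leave memory unchanged.

bank = MemoryBank()
bank.apply(Op.ADD, "User adopted a dog named Max.")
# Consolidate instead of overwriting: the merged entry keeps both pets.
bank.apply(Op.UPDATE, "User adopted a dog named Max and a cat named Whiskers.", entry_id=0)
bank.apply(Op.NOOP)
```

After these calls the bank holds a single entry that mentions both Max and Whiskers, which is the consolidated profile described above.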

Training is driven by the quality of answers produced by the Answer Agent using the updated memory. Positive reinforcement is given when memory edits lead to improved responses, fostering an adaptive and outcome-focused memory management strategy.

Answer Agent: Precision Through Selective Memory Distillation

When a question is posed, the Answer Agent retrieves a broad set of candidate memories but does not pass all of them directly to the LLM. Instead, it applies a learned filtering mechanism to distill this collection down to the most relevant pieces, effectively reducing noise and enhancing reasoning quality.

For instance, if asked, “Does Sarah live near the coast or the mountains?”, a standard LLM might be misled by irrelevant memories and answer incorrectly. Memory-R1’s Answer Agent filters out unrelated information and focuses on the memories that mention the coast, producing a correct and contextually grounded response.
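The retrieve-then-distill flow can be sketched in a few lines. This is a deliberately crude stand-in: Memory-R1 learns its filter through reinforcement learning, whereas the sketch below uses plain word overlap. The helper names (`tokens`, `retrieve`, `distill`), the stopword list, the `min_overlap` threshold, and the example memories are all assumptions made for illustration; only the cap of 60 candidates comes from the description above.

```python
import re

# Small illustrative stopword list (an assumption, not from the paper).
STOPWORDS = {"a", "the", "or", "does", "to", "in", "on", "near"}

def tokens(text):
    """Lowercased content words of a sentence."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS

def retrieve(question, memories, k=60):
    """Broad first pass: rank all memories by word overlap, keep up to k."""
    q = tokens(question)
    ranked = sorted(memories, key=lambda m: len(q & tokens(m)), reverse=True)
    return ranked[:k]

def distill(question, candidates, min_overlap=2):
    """Second pass: keep only candidates that share enough content words."""
    q = tokens(question)
    return [m for m in candidates if len(q & tokens(m)) >= min_overlap]

question = "Does Sarah live near the coast or the mountains?"
memories = [
    "Sarah moved to a beach house on the coast last year.",
    "Sarah adopted a parrot.",
    "Tom goes hiking every weekend.",
]
candidates = retrieve(question, memories)
kept = distill(question, candidates)
```

Here only the memory about the house on the coast survives the second pass. A lexical filter like this is easily fooled by distractors that happen to share words with the question, which is one motivation for learning the filter from answer correctness.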

This agent is also trained with reinforcement learning, optimizing for exact match accuracy between its answers and ground truth, which encourages effective noise reduction and context utilization.
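An exact-match reward of this kind reduces to a short function. The sketch below assumes a common normalization (lowercasing, stripping punctuation and English articles) before comparison; the source does not specify the exact normalization, so `normalize` and `exact_match_reward` are illustrative names and choices.

```python
import re
import string

def normalize(text):
    """Lowercase, drop punctuation and articles, collapse whitespace.

    Assumption: this normalization is a common convention for
    exact-match scoring, not a detail confirmed by the source.
    """
    text = text.lower()
    text = text.translate(str.maketrans("", "", string.punctuation))
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())

def exact_match_reward(prediction, ground_truth):
    """Return 1.0 when the normalized strings are identical, else 0.0."""
    return 1.0 if normalize(prediction) == normalize(ground_truth) else 0.0

reward_correct = exact_match_reward("Near the coast.", "near the coast")
reward_wrong = exact_match_reward("In the mountains", "near the coast")
```

Because the Memory Manager is rewarded according to the quality of the Answer Agent's answers, a scalar like this could in principle serve as the training signal for both agents.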

Efficiency and Scalability in Training

Memory-R1 demonstrates remarkable data efficiency, achieving strong performance with only 152 question-answer pairs during training. This efficiency stems from its reliance on outcome-based rewards rather than exhaustive manual labeling of memory operations, enabling scalable learning from real-world, long-horizon dialogues.

The system was evaluated on the LOCOMO benchmark, which features extensive multi-turn conversations averaging 600 turns and 26,000 tokens per dialogue. This benchmark tests the ability to manage memory over long sequences and handle diverse reasoning tasks, including single-hop, multi-hop, open-domain, and temporal queries.

Performance Highlights and Comparative Analysis

Memory-R1 was benchmarked with backbone models such as LLaMA-3.1-8B-Instruct and Qwen-2.5-7B-Instruct and compared against leading baselines including LOCOMO, Zep, A-Mem, LangMem, and Mem0. Evaluation metrics included:

- F1 Score: Measures the overlap between predicted and correct answers.

- BLEU-1: Assesses unigram lexical similarity.

- LLM-as-a-Judge: Employs an independent LLM to evaluate factual accuracy, relevance, and completeness, serving as a proxy for human judgment.
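The first two metrics are simple enough to compute by hand. The following sketch assumes whitespace tokenization, a token-level F1, and the standard BLEU brevity penalty applied to unigram precision; the evaluation scripts used for Memory-R1 may tokenize or normalize differently, and the function names and example strings are illustrative.

```python
import math
from collections import Counter

def token_f1(prediction, reference):
    """Harmonic mean of token precision and recall (multiset overlap)."""
    pred, ref = prediction.lower().split(), reference.lower().split()
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)

def bleu1(prediction, reference):
    """Clipped unigram precision times the BLEU brevity penalty."""
    pred, ref = prediction.lower().split(), reference.lower().split()
    if not pred:
        return 0.0
    clipped = sum((Counter(pred) & Counter(ref)).values())
    precision = clipped / len(pred)
    # Penalize predictions that are shorter than the reference.
    bp = 1.0 if len(pred) >= len(ref) else math.exp(1 - len(ref) / len(pred))
    return bp * precision

f1 = token_f1("she lives near the coast", "near the coast")    # 0.75
b1 = bleu1("she lives near the coast", "near the coast")       # 0.6
```

In this example all three reference tokens are recovered (recall 1.0) but two of the five predicted tokens are extra (precision 0.6), so F1 is 0.75 and BLEU-1 is 0.6.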

Results revealed that Memory-R1 with GRPO training outperformed the previous best baseline (Mem0) by 48% in F1, 69% in BLEU-1, and 37% in LLM-as-a-Judge scores on LLaMA-3.1-8B. Comparable improvements were observed on Qwen-2.5-7B. These gains were consistent across all question types and generalized well across different model backbones.

Significance and Future Implications

Memory-R1 represents a paradigm shift in how AI systems handle memory by demonstrating that memory management and utilization can be effectively learned rather than hardcoded. By leveraging reinforcement learning grounded in task outcomes, the framework:

- Enables dynamic consolidation of knowledge, preserving and evolving information coherently over time.

- Filters out irrelevant or noisy data, enhancing the factual accuracy and reasoning capabilities of LLMs.

- Requires minimal supervision, making it practical for deployment in real-world, large-scale dialogue systems.

- Exhibits strong generalization across different LLM architectures, paving the way for more intelligent, memory-aware AI agents.

Final Thoughts

Memory-R1 breaks the stateless barrier of traditional LLMs by teaching agents to manage long-term memory through reinforcement learning. This approach achieves state-of-the-art results with limited training data and robust generalization, marking a critical advancement toward AI that not only communicates fluently but also remembers, learns, and reasons with human-like persistence. The result is a richer, more personalized, and more effective user experience across diverse applications.

Frequently Asked Questions

Q1: What distinguishes Memory-R1 from conventional LLM memory systems?

Memory-R1 employs reinforcement learning to actively govern memory operations, deciding when to add, update, delete, or retain information. This leads to smarter knowledge consolidation and less fragmentation compared to static, rule-based methods.

Q2: How does Memory-R1 enhance answer accuracy in long, complex dialogues?

The Answer Agent selectively filters retrieved memories, focusing on the most relevant information before generating responses. This “memory distillation” reduces noise and improves factual correctness over approaches that feed all retrieved data indiscriminately.

Q3: Is Memory-R1 efficient in terms of training data requirements?

Yes, it achieves significant performance improvements using only 152 question-answer pairs, thanks to its outcome-driven reinforcement learning framework that avoids the need for detailed manual annotations of memory edits.