Nous Research has unveiled Hermes 4, a suite of open-weight AI models available in 14B, 70B, and 405B parameter configurations. The 70B and 405B models are built on Llama 3.1 checkpoints, while the 14B model is built on Qwen3 14B. This new generation reaches its performance entirely through post-training. A standout feature of Hermes 4 is its hybrid reasoning capability: the models can switch between a direct answer and detailed, step-by-step reasoning, which they wrap in <think>...</think> tags when tackling complex queries.
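To make the hybrid output format concrete, here is a minimal sketch of how a client might separate the reasoning from the final answer. It assumes only the <think>...</think> convention described above; the function name `split_reasoning` is hypothetical and not part of any Nous Research tooling.

```python
import re

# Matches the first <think>...</think> block, including newlines inside it.
THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(text: str) -> tuple[str, str]:
    """Return (reasoning, answer); reasoning is "" if no think block exists."""
    match = THINK_RE.search(text)
    if match is None:
        # Non-reasoning mode: the whole response is the answer.
        return "", text.strip()
    reasoning = match.group(1).strip()
    # Everything outside the think block is treated as the answer.
    answer = (text[:match.start()] + text[match.end():]).strip()
    return reasoning, answer


reasoning, answer = split_reasoning("<think>2 + 2 = 4</think>The answer is 4.")
print(reasoning)  # 2 + 2 = 4
print(answer)     # The answer is 4.
```

A response without the tags passes through unchanged as the answer, so the same client code can handle both modes.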

What sets Hermes 4 apart is its ability to deliver state-of-the-art results among open-weight models while adhering to a fully transparent development process and a neutral alignment philosophy. This demonstrates that sophisticated reasoning skills can be cultivated entirely through open-source frameworks without proprietary constraints.

DataForge: Pioneering Graph-Driven Synthetic Data Creation

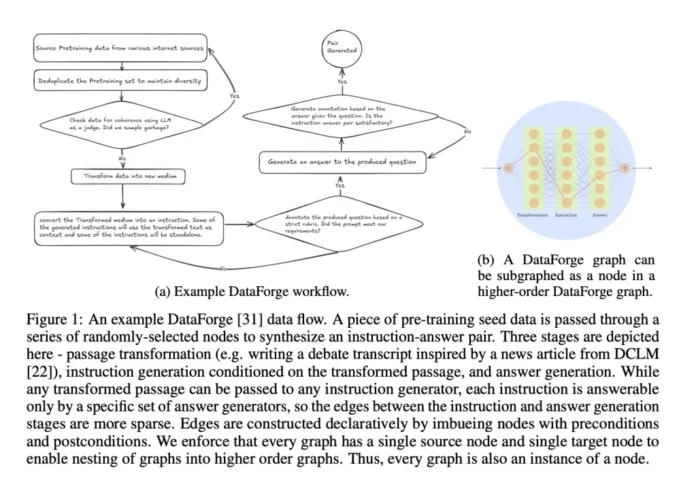

At the heart of Hermes 4 lies DataForge, a groundbreaking system for generating synthetic training data using graph-based methodologies. Unlike conventional data curation, DataForge employs a directed acyclic graph (DAG) structure where each node corresponds to an action defined by the Planning Domain Definition Language (PDDL). These nodes specify conditions and transformations, enabling the automated assembly of intricate data generation pipelines.
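The idea of a DAG whose nodes carry preconditions and effects can be sketched in a few lines. This is an illustration only, not DataForge's implementation: the `Action` class, the fact names, and the string-wrapping transforms (stand-ins for model calls) are all hypothetical.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter
from typing import Callable


@dataclass(frozen=True)
class Action:
    """A PDDL-style node: runs only if its preconditions hold, then adds effects."""
    name: str
    preconditions: frozenset[str]
    effects: frozenset[str]
    transform: Callable[[str], str]


def run_pipeline(actions: dict[str, Action], edges: dict[str, set[str]],
                 text: str, facts: set[str]) -> tuple[str, set[str]]:
    """Execute actions in topological order; edges maps node -> predecessors."""
    facts = set(facts)  # copy so the caller's set is not mutated
    for name in TopologicalSorter(edges).static_order():
        action = actions[name]
        if not action.preconditions <= facts:
            raise ValueError(f"{name}: unmet preconditions {action.preconditions - facts}")
        text = action.transform(text)
        facts |= action.effects
    return text, facts


# Hypothetical two-node pipeline: article -> poem -> instruction-answer pair.
ACTIONS = {
    "to_poem": Action("to_poem", frozenset({"is_article"}), frozenset({"is_poem"}),
                      lambda t: f"poem({t})"),
    "to_qa": Action("to_qa", frozenset({"is_poem"}), frozenset({"is_qa_pair"}),
                    lambda t: f"qa({t})"),
}
EDGES = {"to_poem": set(), "to_qa": {"to_poem"}}

result, final_facts = run_pipeline(ACTIONS, EDGES, "article", {"is_article"})
print(result)  # qa(poem(article))
```

Declaring preconditions and effects on each node is what lets a planner check that a pipeline is valid before any data is generated.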

Starting with seed datasets from DCLM and FineWeb, DataForge can, for example, convert a Wikipedia article into a jazz poem and subsequently produce instruction-answer pairs derived from this creative transformation. This process yields roughly 5 million samples encompassing 19 billion tokens, with reasoning samples deliberately designed to be token-dense (averaging five times the length of non-reasoning samples) to support extended thought processes up to 16,000 tokens.

Scaling Quality with Extensive Rejection Sampling

Hermes 4 integrates Atropos, an open-source reinforcement learning platform developed by Nous Research, to conduct rejection sampling across nearly 1,000 specialized task verifiers. This comprehensive verification framework ensures the selection of high-quality reasoning paths spanning multiple domains.

Key verification modules include:

- Answer Format Training: Rewards adherence to over 150 distinct output formats.

- Instruction Compliance: Utilizes RLVR-IFEval tasks with intricate constraints to ensure precise instruction following.

- Schema Validation: Employs Pydantic models to verify JSON output conformity.

- Tool Utilization Training: Encourages agentic behavior through tool use scenarios.

This rigorous rejection sampling produces a vast dataset of validated reasoning trajectories, often featuring multiple unique solution routes to the same verified conclusion. This strategy promotes the learning of robust reasoning patterns rather than rote memorization of fixed solutions.
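The core loop of rejection sampling against a verifier can be sketched as follows. This is a toy under stated assumptions, not the Atropos API: `rejection_sample`, `ends_with_answer`, and the trajectory strings are hypothetical, and a real generator would be a model call rather than a random choice from a fixed pool.

```python
import random
from typing import Callable

Verifier = Callable[[str], bool]


def ends_with_answer(expected: str) -> Verifier:
    """Build a verifier that accepts trajectories ending in the expected answer."""
    def verify(trajectory: str) -> bool:
        return trajectory.strip().endswith(f"Answer: {expected}")
    return verify


def rejection_sample(generate: Callable[[random.Random], str], verifier: Verifier,
                     n_samples: int, seed: int = 0) -> list[str]:
    """Draw n_samples candidates; keep each distinct one the verifier accepts."""
    rng = random.Random(seed)
    accepted: list[str] = []
    seen: set[str] = set()
    for _ in range(n_samples):
        candidate = generate(rng)
        if verifier(candidate) and candidate not in seen:
            seen.add(candidate)
            accepted.append(candidate)
    return accepted


# Toy generator standing in for a model: picks one of three trajectories.
POOL = ["2 + 2 -> Answer: 4", "2 * 2 -> Answer: 4", "2 + 2 -> Answer: 5"]
kept = rejection_sample(lambda rng: rng.choice(POOL), ends_with_answer("4"), n_samples=20)
print(kept)  # only distinct trajectories that end in "Answer: 4"
```

Keeping distinct accepted trajectories, rather than a single one, mirrors the point above: several different routes to the same verified answer end up in the training set.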

Innovative Length Regulation to Prevent Excessive Reasoning

One of the most notable breakthroughs in Hermes 4 addresses the challenge of overextended reasoning chains, where models generate unnecessarily long thought sequences without proper termination. Analysis revealed that the 14B variant hit its maximum context length 60% of the time on the LiveCodeBench benchmark when operating in reasoning mode.

The team devised a highly effective two-step supervised fine-tuning method to teach the model to halt reasoning precisely at 30,000 tokens:

- Produce reasoning traces using the current model policy.

- Insert </think> tokens exactly at the 30,000-token mark.

- Train the model exclusively on the termination decision, ignoring the reasoning content.

- Apply gradient updates solely to the </think> and <eos> tokens.
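The steps above amount to a particular way of building training labels. The sketch below shows one way this could look, assuming the common convention that a label of -100 is skipped by the loss (the default `ignore_index` of PyTorch's cross-entropy). The token ids, the tiny budget, and both helper names are hypothetical.

```python
IGNORE_INDEX = -100  # label value ignored by PyTorch's cross-entropy by default


def truncate_with_close(reasoning_ids: list[int], think_close_id: int,
                        budget: int) -> list[int]:
    """Cut a reasoning trace at `budget` tokens and append the closing tag."""
    return reasoning_ids[:budget] + [think_close_id]


def termination_only_labels(token_ids: list[int], think_close_id: int,
                            eos_id: int) -> list[int]:
    """Keep labels only on </think> and <eos>; mask every other position."""
    keep = {think_close_id, eos_id}
    return [tok if tok in keep else IGNORE_INDEX for tok in token_ids]


# Hypothetical ids: 1 = </think>, 2 = <eos>; a budget of 3 stands in for 30,000.
THINK_CLOSE, EOS = 1, 2
trace = truncate_with_close([5, 6, 7, 8, 9], THINK_CLOSE, budget=3)
sequence = trace + [11, 12, EOS]  # closing tag, then answer tokens, then <eos>
print(termination_only_labels(sequence, THINK_CLOSE, EOS))
# [-100, -100, -100, 1, -100, -100, 2]
```

Because the reasoning and answer tokens are masked, the gradient carries information only about when to stop, not about what to write.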

This targeted approach reduced overlong generations by 78.4% on AIME’24, 65.3% on AIME’25, and 79.8% on LiveCodeBench, at an accuracy cost ranging from 4.7% to 12.7%. By concentrating learning on the termination signal, the model avoids collapse and effectively learns to “count” its reasoning steps.

Benchmark Excellence and Commitment to Neutrality

Hermes 4 sets new benchmarks for open-weight models, with the 405B version achieving impressive scores such as 96.3% on MATH-500 (reasoning mode), 81.9% on AIME’24, 78.1% on AIME’25, 70.5% on GPQA Diamond, and 61.3% on LiveCodeBench.

Its performance on RefusalBench is particularly remarkable, scoring 57.1% in reasoning mode, the highest among all tested models and significantly surpassing GPT-4o’s 17.67% and Claude Sonnet 4’s 17%. This highlights Hermes 4’s balanced approach to engaging with sensitive or controversial topics while maintaining appropriate boundaries, reflecting Nous Research’s dedication to neutral alignment.

Technical Infrastructure and Training Methodology

Training Hermes 4 used a customized TorchTitan framework running on 192 NVIDIA B200 GPUs. Because sample lengths vary widely, the system packs samples together to achieve over 99.9% batch utilization. It also uses Flex Attention and selective loss masking, which restricts cross-entropy loss calculations to assistant-generated tokens.
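To illustrate how packing raises batch utilization, here is a small sketch using first-fit decreasing bin packing. The text above does not say which packing algorithm was used, so this choice is an assumption, and the function names and sample lengths are hypothetical.

```python
def pack_first_fit_decreasing(lengths: list[int], capacity: int) -> list[list[int]]:
    """Pack sample lengths into fixed-size sequences, longest samples first."""
    bins: list[list[int]] = []
    free: list[int] = []  # remaining room in each bin
    for length in sorted(lengths, reverse=True):
        if length > capacity:
            raise ValueError(f"sample of {length} tokens exceeds capacity {capacity}")
        for i, room in enumerate(free):
            if length <= room:
                bins[i].append(length)
                free[i] -= length
                break
        else:
            # No existing bin has room, so open a new one.
            bins.append([length])
            free.append(capacity - length)
    return bins


def utilization(bins: list[list[int]], capacity: int) -> float:
    """Fraction of token slots filled with real (non-padding) tokens."""
    return sum(sum(b) for b in bins) / (len(bins) * capacity)


packed = pack_first_fit_decreasing([10, 6, 4, 5, 5], capacity=10)
print(packed)                   # [[10], [6, 4], [5, 5]]
print(utilization(packed, 10))  # 1.0
```

Padding each of those five samples to 10 tokens on its own would fill only 30 of 50 slots, which is the waste that packing avoids.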

The training regimen follows a cosine learning rate schedule with 300 warmup steps and a total of 9,000 steps, operating at a 16,384-token context window and a global batch size of 384 samples. It combines Data Parallelism, Tensor Parallelism, and Fully Sharded Data Parallelism to optimize resource use and scalability.
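A cosine schedule with the step counts given above can be sketched as follows. The peak learning rate, the linear warmup shape, and the zero floor are assumptions for illustration, since the text states only the 300 warmup steps and 9,000 total steps.

```python
import math


def lr_at(step: int, peak_lr: float, warmup_steps: int = 300,
          total_steps: int = 9000, min_lr: float = 0.0) -> float:
    """Linear warmup to peak_lr, then cosine decay to min_lr at total_steps."""
    if step < warmup_steps:
        # Linear ramp that reaches peak_lr on the last warmup step.
        return peak_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(progress, 1.0)  # clamp if called past the final step
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


PEAK_LR = 1e-5  # hypothetical value, not taken from the article
for step in (0, 299, 4650, 9000):
    print(step, lr_at(step, PEAK_LR))
```

Under these assumptions the rate peaks at step 299, falls to half the peak at step 4,650 (the midpoint of the decay), and reaches the floor at step 9,000.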

Conclusion: A Milestone in Open-Source AI Reasoning

Hermes 4 represents a major leap forward in open-source artificial intelligence, proving that top-tier reasoning abilities can be developed transparently and reproducibly without reliance on proprietary datasets or closed development pipelines. By integrating innovative graph-based synthetic data generation, large-scale rejection sampling, and sophisticated length control techniques, Nous Research has crafted models that rival leading proprietary systems in performance while upholding neutral alignment and user steerability. These qualities position Hermes 4 as a genuinely versatile and responsible AI tool rather than a restrictive assistant.