Differential privacy (DP) remains the premier framework for safeguarding individual data in expansive machine learning and data analysis endeavors. A pivotal challenge within this domain is partition selection-the technique of securely extracting the maximum number of distinct elements from vast collections of user-generated data (such as search queries or tokenized documents) while rigorously upholding privacy standards. Researchers from MIT and Google AI Research have developed innovative algorithms for differentially private partition selection, aiming to optimize the count of unique items retrieved from combined datasets without compromising user-level differential privacy guarantees.

Understanding the Challenge of Partition Selection in Differential Privacy

At its essence, partition selection addresses the question: How can we disclose the greatest variety of unique data points from a dataset without exposing any individual’s private information? Items exclusive to a single user must remain confidential; only those supported by a sufficient number of users can be safely published. This problem is fundamental to numerous applications, including:

- Extracting private vocabularies and n-grams for natural language processing.

- Analyzing categorical datasets and constructing histograms.

- Learning privacy-preserving embeddings from user-contributed data.

- Conducting anonymized statistical queries for search engines and databases.

Conventional Methods and Their Drawbacks

The standard approach, widely implemented in tools like PyDP and Google’s differential privacy libraries, typically follows a three-step process:

- Scoring: Assigning each item a weight, often based on its frequency across users, with strict limits on individual user contributions.

- Noise Injection: Adding randomized noise-commonly Gaussian-to each item’s weight to obscure exact user activity.

- Threshold Filtering: Releasing only those items whose noisy weights exceed a threshold determined by privacy parameters (ε, δ).

While this method is straightforward and highly scalable-compatible with distributed computing frameworks like Hadoop, Spark, and MapReduce-it suffers from inefficiencies. Popular items accumulate surplus weight that does not enhance privacy, whereas less frequent but valuable items often fail to surpass the threshold due to lack of redistributed weight.

Introducing Adaptive Weighting: The MaxAdaptiveDegree (MAD) Algorithm

To overcome these limitations, Google’s team has introduced MaxAdaptiveDegree (MAD), the first adaptive and parallelizable partition selection algorithm, along with its multi-round variant, MAD2R, tailored for datasets containing hundreds of billions of entries.

Innovative Features and Technical Highlights

- Dynamic Weight Redistribution: MAD detects items with weights significantly exceeding the privacy threshold and reallocates the surplus weight to underrepresented items. This adaptive weighting strategy enhances the likelihood of revealing rare but shareable items, thereby maximizing the utility of the output.

- Preserved Privacy Standards: The reweighting process maintains the same sensitivity and noise requirements as traditional uniform weighting, ensuring strict user-level (ε, δ)-differential privacy under the central DP framework.

- Efficient Scalability: Both MAD and MAD2R operate with linear computational complexity relative to dataset size and require only a fixed number of parallel processing rounds, making them ideal for large-scale distributed systems without the need for in-memory data storage.

- Multi-Round Enhancement (MAD2R): By dividing the privacy budget across multiple rounds and leveraging noisy weights from earlier rounds to guide subsequent weighting, MAD2R further improves performance, especially in datasets with long-tailed distributions common in real-world scenarios.

Algorithmic Workflow of MAD

- Uniform Initial Weighting: Each user assigns a uniform initial score to their items, ensuring bounded sensitivity.

- Truncation and Redistribution: Items exceeding an adaptive threshold have their excess weight trimmed and proportionally rerouted back to the contributing users, who then redistribute it among their other items.

- Final Weight Calibration: Additional uniform weight is added to correct minor allocation inaccuracies.

- Noise Addition and Selection: Gaussian noise is applied, and items surpassing the noisy threshold are selected for output.

In MAD2R, outputs and noisy weights from the first round inform the weighting focus in the second round, enabling biasing that enhances utility without compromising privacy.

Empirical Validation: Superior Performance Across Diverse Datasets

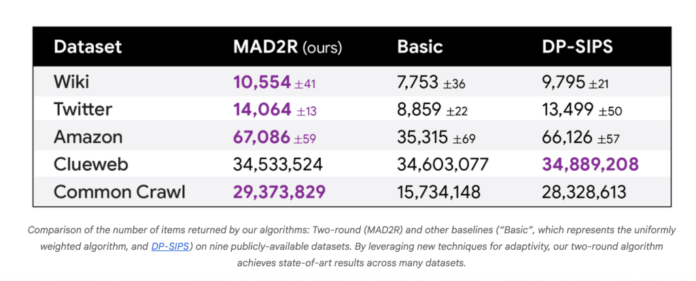

Comprehensive testing on nine datasets-including Reddit, IMDb, Wikipedia, Twitter, Amazon, and the massive Common Crawl dataset with nearly one trillion entries-demonstrates:

- MAD2R consistently outperforms existing parallel baselines (such as Basic and DP-SIPS) on seven out of nine datasets in terms of unique items released under fixed privacy constraints.

- On the Common Crawl dataset, MAD2R extracted 16.6 million unique items from 1.8 billion (approximately 0.9%), while covering 99.9% of users and 97% of all user-item pairs, showcasing exceptional practical utility alongside robust privacy.

- For smaller datasets, MAD approaches the effectiveness of sequential, non-scalable algorithms, and for large-scale data, it excels in both speed and output quality.

Illustrative Scenario: Bridging the Utility Gap

Imagine a dataset containing a “heavy hitter” item shared by many users alongside numerous “lightweight” items with limited user support. Traditional DP methods tend to allocate excessive weight to the heavy hitter, neglecting the lighter items that fail to meet the threshold. MAD’s adaptive reallocation boosts the chances of these lighter items being selected, resulting in up to a 10% increase in unique items discovered compared to conventional techniques.

Conclusion: Advancing Differential Privacy with Adaptive Partition Selection

By integrating adaptive weighting with a parallelizable design, this research significantly elevates the scalability and effectiveness of differentially private partition selection. These advancements empower data scientists and engineers to extract richer insights from private datasets without sacrificing individual privacy, marking a substantial leap forward in privacy-preserving data analysis.